🚀 New Video: I Tested GPT 5.5 vs Opus 4.7: What You Need to Know

OpenAI just dropped GPT-5.5, and the benchmarks look strong against Opus 4.7, but benchmarks only tell part of the story. I ran four head-to-head experiments in Codex and Claude Code to see how the models actually compare on speed, cost, and output quality. The results were not what I expected.

🚀 New Video: Claude + HyperFrames Just Solved Video Editing

In this video I'm showing you how to edit videos end to end using Claude Code as the orchestrator, with HyperFrames handling motion graphics and video-use handling the trimming. You drop in a raw video, tell it what you want in natural language, and it cuts the filler words, syncs animations to your exact timestamps, and renders the final video. I walk through the full setup, the prompting style that actually works, and how to iterate fast with the new timeline editor.

🏆 Community Wins Recap | Apr 11 – Apr 17

From first AI roles and paying clients to live receptionist systems and enterprise training deals - this week inside AIS+ showed what happens when execution meets consistency.

🚀 Standout Wins of the Week inside AIS+

👉 @Griffin Maklansky went from being laid off to landing a role as an AI Workflow Builder in just 1 month.
👉 Duy Nguyen moved from fear to action, built a full AI-operated business, and already landed 2 paying clients through word-of-mouth.
👉 @Narsis Amin built a fully working AI restaurant receptionist handling bookings, availability, and CRM logging end-to-end.
👉 Michael Wacht closed a deal to deliver AI training for 200 employees, stepping into enterprise-level impact.
👉 @Dion Wang received his first official testimonial, validating real client results and around 40 hours/month saved.

🎥 Super Win Spotlight | @Debbie DeMarco Bennett

Debbie joined AIS+ at a moment when AI was starting to disrupt the business she had built for 13 years. Instead of staying scared, she decided to learn how to work with the technology. Since joining, she has:
• Automated multiple parts of her business and freed up major time
• Built her own admin dashboard and secure internal systems
• Started DeMarco Bennett AI
• Landed her first client and began rebuilding their business systems

Her biggest shift? From thinking “I’m not technical enough” to realizing that with the right support, iteration, and community, she could absolutely build. Debbie’s journey is proof that you do not need a tech background - you need the willingness to learn, ask questions, and keep building.

🎥 Watch Debbie’s story 👇

✨ Want to see wins like this every week? Step inside AI Automation Society Plus and start building assets that compound 🚀

AI Automation Selling Process

If you are running an AI automation agency, how long do you typically spend with a prospect before you close a deal?


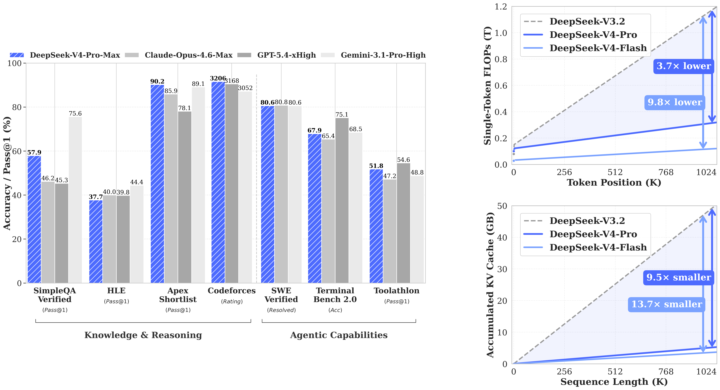

WOW! GPT-5.5 and the most-awaited DeepSeek V4 Flash & Pro dropped today

What a Friday. For two years, if you were building anything serious with AI, you were building on Claude. Not because it was a rule, but because it was the right call. Anthropic set the bar for coding. They set the bar for writing. They set the quiet default that if you cared about quality, you paid the Opus premium and didn't ask questions. I didn't ask either. The whole builder community ran on Claude for a reason.

This week, that changed. GPT-5.5 shipped yesterday. DeepSeek V4 Pro shipped the same day. Within twenty-four hours, the ceiling on agentic coding went up, and the open-weight floor came within striking distance of the closed frontier. Real contenders. Not "almost there." Actually here.

Three things this changes for anyone building, and none of them are in the headlines yet.

Coding: The default of "Claude writes the code, Claude runs the agents" breaks this week. GPT-5.5 is measurably better on the kind of long-running, multi-step agent work that used to be Claude's moat. DeepSeek V4 Pro is within a fraction on real software engineering, at a price point where "run it myself" is genuinely on the table. Every tool in your stack that quietly assumed Anthropic (your IDE integrations, your review agents, your automation glue) is about to get reconsidered. That's good for you. Less lock-in. More leverage.

Marketing and writing: The price-per-draft math just flipped. We've been rationing the good model forever: the flagship handles the brand-safe stuff, volume work gets the cheap model, and we've all quietly accepted that frontier-quality writing at scale isn't possible. That's over. Frontier-quality writing at open-weight pricing means every ad variant, every email rewrite, every landing-page test, and every personalization loop runs at the top tier. The whole architecture of "one good draft, fifty cheap copies" starts to feel as dated as shared creative. Everything top-tier. Everything personalized. Everything testable.
Agentic work: This is the one I am most excited about, and the most under-talked-about. For two years, "multi-model agent stacks" has been a slide in decks. Nobody actually builds them, because there hasn't been a real second option. GPT-5.5 for the reasoning step. DeepSeek V4 Pro for the long-context research step. Claude for the interpretive writing step. A cheap open model for the high-volume structured step. Not one runtime. A pipeline. Composed by you. Owned by you. That stops being a slide and starts being the default next month.
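
To make the "pipeline, not one runtime" idea a bit more concrete, here is a minimal Python sketch, assuming each stage is just a (stage name, model label) pair and that each stage's output becomes the next stage's input. The model labels are placeholders borrowed from the post, not real API model IDs, and `call` is a hypothetical function you would wire to each vendor's own SDK. The `fake_call` stub exists only so the sketch runs offline.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical signature: (model_label, prompt) -> text. Replace with real vendor clients.
ModelCall = Callable[[str, str], str]


@dataclass
class Step:
    name: str   # pipeline stage, e.g. "reasoning"
    model: str  # placeholder label for the model assigned to this stage (not an API model ID)


# Stage-to-model assignment as described in the post; labels are illustrative only.
PIPELINE = [
    Step("reasoning", "gpt-5.5"),
    Step("research", "deepseek-v4-pro"),
    Step("writing", "claude"),
    Step("structured", "cheap-open-model"),
]


def run_pipeline(task: str, call: ModelCall,
                 steps: list[Step] = PIPELINE) -> tuple[str, list[tuple[str, str]]]:
    """Run stages in order, feeding each stage's output into the next.

    Returns the final output plus a (stage, model) trace of what ran where.
    """
    text = task
    trace: list[tuple[str, str]] = []
    for step in steps:
        text = call(step.model, f"[{step.name}] {text}")
        trace.append((step.name, step.model))
    return text, trace


def fake_call(model: str, prompt: str) -> str:
    # Offline stub: wraps the prompt with the model label so the routing is visible.
    return f"{model}<{prompt}>"


if __name__ == "__main__":
    output, trace = run_pipeline("draft launch email", fake_call)
    print(trace)
    print(output)
```

Keeping the stage-to-model mapping in one list is the point of the design: swapping the model behind a stage is a one-line change, which is the "less lock-in" argument expressed in code.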


skool.com/ai-automation-society

Learn to get paid for AI solutions, regardless of your background.
