Memberships

Creator Academy

7.7k members • Free

Self Publishers Unite!

484 members • Free

Voiceover Masterclass

115 members • Free

KDP Publishing

997 members • Free

Podcaster Pals

96 members • Free

Creator Profits

18.9k members • Free

Voice AI Bootcamp 🎙️🤖

8.9k members • Free

The AI Advantage

119.1k members • Free

Zero to Hero with AI

12.1k members • Free

8 contributions to AI Automation Society

WOW! GPT-5.5 and the much-awaited DeepSeek V4 Flash & Pro dropped today

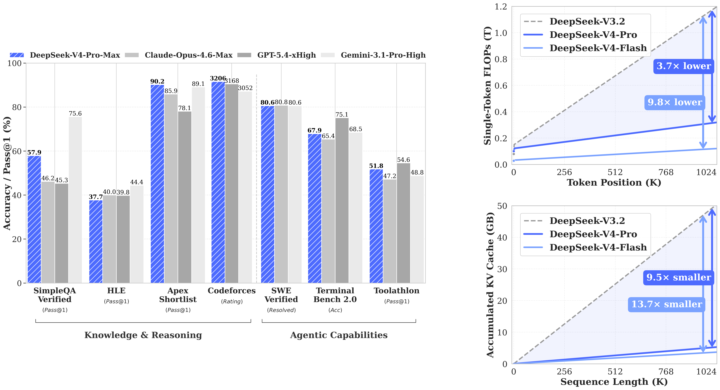

What a Friday.

For two years, if you were building anything serious with AI, you were building on Claude. Not because it was a rule, but because it was the right call. Anthropic set the bar for coding. They set the bar for writing. They set the quiet default that if you cared about quality, you paid the Opus premium and didn't ask questions. I didn't either. The whole builder community ran on Claude for a reason.

This week, that changed. GPT-5.5 shipped yesterday. DeepSeek V4 Pro shipped the same day. Inside twenty-four hours, the ceiling on agentic coding went up, and the open-weight floor came within striking distance of the closed frontier. Real contenders. Not "almost there." Actually here.

Three things this changes for anyone building, and none of them are in the headlines yet.

Coding: The default setting of "Claude writes the code, Claude runs the agents" breaks this week. GPT-5.5 is measurably better on the kind of long-running multi-step agent work that used to be Claude's moat. DeepSeek V4 Pro is within a fraction on real software engineering, at a price point where "run it myself" is genuinely on the table. Every tool in your stack that quietly assumed Anthropic (your IDE integrations, your review agents, your automation glue) is about to get reconsidered. That's good for you. Less lock-in. More leverage.

Marketing and writing: The price-per-draft math just flipped. We've been rationing the good model forever: the flagship handles the brand-safe stuff, volume work gets the cheap model, and we've all quietly accepted that frontier-quality writing at scale isn't possible. That's over. Frontier-quality writing at open-weight pricing means every ad variant, every email rewrite, every landing-page test, every personalization loop runs at the top tier. The whole architecture of "one good draft, fifty cheap copies" starts feeling as dated as shared creative. Everything top-tier. Everything personalized. Everything testable.

Agentic work: This is the one I am most excited about, and the most under-talked-about. For two years, "multi-model agent stacks" has been a slide in decks. Nobody actually builds them, because there hasn't been a real second option. GPT-5.5 for the reasoning step. DeepSeek V4 Pro for the long-context research step. Claude for the interpretive writing step. A cheap open model for the high-volume structured step. Not one runtime. A pipeline. Composed by you. Owned by you. That stops being a slide and starts being the default next month.
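The "pipeline, not one runtime" idea can be sketched as a routing table that maps each step to a different model. This is only an illustration: the model labels are taken from the post, and `make_stub`, `ROUTES`, and `run_steps` are hypothetical names. The callables are local stubs, not real API clients, so swap in whatever SDK calls you actually use.

```python
from typing import Callable, Dict, List, Tuple

ModelFn = Callable[[str], str]


def make_stub(label: str) -> ModelFn:
    """Return a stand-in for a model call that just tags its input (no network)."""
    def call(prompt: str) -> str:
        return f"[{label}] {prompt}"
    return call


# Hypothetical routing table: one step type per model, as described in the post.
ROUTES: Dict[str, ModelFn] = {
    "reason": make_stub("gpt-5.5"),
    "research": make_stub("deepseek-v4-pro"),
    "write": make_stub("claude"),
    "structure": make_stub("cheap-open-model"),
}


def run_steps(task: str, steps: List[str],
              routes: Dict[str, ModelFn] = ROUTES) -> List[Tuple[str, str]]:
    """Feed each step's output into the next step, using the model routed to it."""
    trace: List[Tuple[str, str]] = []
    current = task
    for step in steps:
        current = routes[step](current)  # KeyError here means an unrouted step
        trace.append((step, current))
    return trace


if __name__ == "__main__":
    for step, output in run_steps("compare vendors", ["research", "reason", "write"]):
        print(step, "->", output)
```

Keeping the routing in a plain dictionary means a model can be replaced per step without touching the rest of the pipeline, which is the "less lock-in" point made above.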

0 likes • 8h

@Tim Westermann thanks, Tim. I appreciate your comment. I was someone who swore by Claude until last week. In my opinion, Claude 4.7 is a regression, so I had to look for alternatives, and I've been testing and migrating my workflows over to GPT. GPT-5.5 is for sure a welcome upgrade. I have been regretting my 220x Max Pro subscriptions on Claude and have migrated them to Codex.

Anyone played with Andrej Karpathy's "LLM Wiki" idea from the gist he dropped?

Quick version in case you missed it: instead of using RAG to re-chunk your sources every time you ask a question, you compile each source once into a persistent markdown wiki. The LLM extracts concepts, writes entity and concept pages, updates cross-references, flags contradictions, and maintains the whole thing. Future queries read the pre-synthesized wiki.

The part that clicked for me: the reason most of us abandon our second brains is that backlink and cross-reference upkeep is boring. The LLM doesn't care. It's happy to touch fifteen pages in one pass.

I spent a couple of weeks turning Karpathy's pattern into a Claude Code plugin that actually scales (atomic pages, sharded indexes, BM25 fallback past ~300 pages). It also runs in Codex, Cursor, Gemini CLI, Pi, and OpenClaw through the skills CLI.

Install in Claude Code:

/plugin marketplace add praneybehl/llm-wiki-plugin
/plugin install llm-wiki@llm-wiki

Or in any other supported agent:

npx skills add praneybehl/llm-wiki-plugin -a <your-agent>

Five slash commands (init, ingest, query, lint, stats), stdlib-only Python, no dependencies. Plays well with Obsidian if you want the graph view.

Repo: https://github.com/praneybehl/llm-wiki-plugin
Karpathy's gist: https://gist.github.com/karpathy/442a6bf555914893e9891c11519de94f

Curious if anyone here has tried the pattern themselves. What did you ingest first, and what broke before it worked?
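For anyone wondering what a "BM25 fallback" over wiki pages might look like, here is a minimal stdlib-only sketch of Okapi BM25 ranking across markdown pages. It is not taken from the plugin. The function names, the tokenizer, and the `k1`/`b` defaults are assumptions chosen for illustration.

```python
import math
import re
from collections import Counter
from typing import Dict, List, Tuple


def tokenize(text: str) -> List[str]:
    """Lowercase and split on non-alphanumerics (a deliberately crude tokenizer)."""
    return re.findall(r"[a-z0-9]+", text.lower())


def bm25_rank(pages: Dict[str, str], query: str,
              k1: float = 1.5, b: float = 0.75) -> List[Tuple[str, float]]:
    """Rank pages (name -> markdown text) against a query using Okapi BM25."""
    docs = {name: tokenize(text) for name, text in pages.items()}
    n_docs = len(docs)
    avg_len = sum(len(t) for t in docs.values()) / n_docs if n_docs else 0.0

    # Document frequency: in how many pages does each term appear?
    df: Counter = Counter()
    for tokens in docs.values():
        df.update(set(tokens))

    query_terms = set(tokenize(query))
    scores: Dict[str, float] = {}
    for name, tokens in docs.items():
        tf = Counter(tokens)
        score = 0.0
        for term in query_terms:
            if term not in tf:
                continue
            # Smoothed IDF, always positive.
            idf = math.log(1 + (n_docs - df[term] + 0.5) / (df[term] + 0.5))
            # Term-frequency saturation with length normalisation.
            norm = tf[term] + k1 * (1 - b + b * len(tokens) / avg_len)
            score += idf * tf[term] * (k1 + 1) / norm
        if score > 0:
            scores[name] = score
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)


if __name__ == "__main__":
    # Hypothetical page contents, for demonstration only.
    pages = {
        "rag": "Retrieval augmented generation chunks sources at query time.",
        "wiki": "The wiki compiles each source once into markdown pages.",
        "bm25": "BM25 is a lexical ranking function.",
    }
    print(bm25_rank(pages, "markdown wiki"))
```

A lexical ranker like this needs no embeddings or external services, which fits the "stdlib-only, no dependencies" constraint mentioned above.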

I Run 10 YouTube Channels. I Don't Make a Single Video. Here's what that actually looks like.

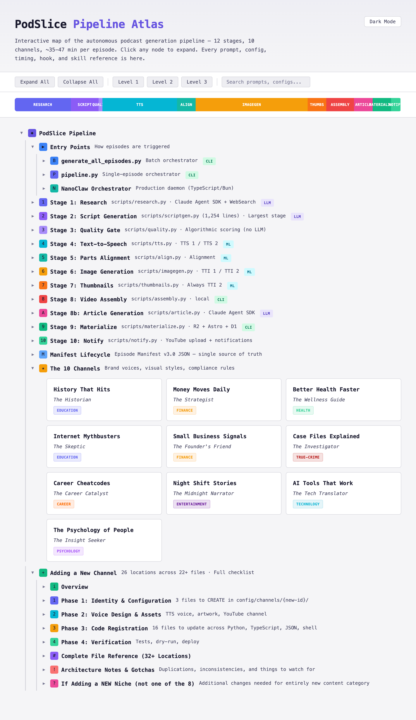

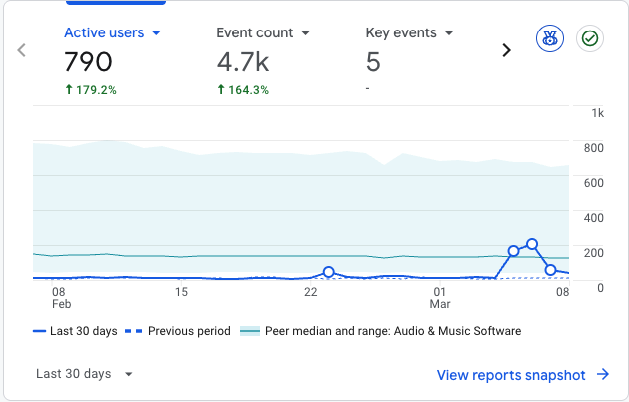

I woke up this morning to 10 fresh podcast episodes. Fully researched. Scripted. Narrated. Visuals timed to every beat. Published to YouTube, RSS, and my own website. I didn't make any of them. A machine on my desk did. While I slept.

I launched these channels at the end of February. It hasn't been a month yet. Some episodes are pulling 1,000+ views and gaining subscribers - with zero ads, zero promotion, zero outreach.

But here's what I need you to understand: this is not a prompt. When people hear "automated content," they picture someone typing a topic into a chatbox and hitting publish. That's not what this is. That's not even close.

What I built is a multi-stage production pipeline. Not a single generation step - a sequence of independent systems, each with its own job, its own rules, and its own quality bar. Every stage has to pass before the next one starts. If something isn't good enough, it gets caught, flagged, and redone automatically.

Here's what that actually means in practice:

Every episode starts with real research. Not "summarise this topic." Actual source-finding, fact-checking, angle evaluation. The kind of editorial groundwork a good producer would do before writing a single word. Most automated content skips this entirely. Mine can't - the pipeline won't let it move forward without it.

Then there's the writing. And this is where I spent most of my 45 days. I didn't just generate scripts - I built an entire set of rules around how spoken language works differently from written language. How rhythm changes when someone is listening instead of reading. How a pause lands. How a transition should feel. Early versions sounded like a textbook. Now they sound like someone talking to you.

After the writing comes the part most people don't think about: quality control. Every script gets evaluated across multiple dimensions before it moves on. There's a hard pass/fail threshold. I've watched the system reject its own output dozens of times and come back with something genuinely better. Nothing mediocre gets through. That's not a nice-to-have - it's the reason the content performs.
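The "every stage has to pass before the next one starts" idea can be sketched as a gated pipeline with automatic retries. This is a rough illustration, not the actual system: `Stage`, `run_pipeline`, the 0.8 threshold, and the three-attempt limit are all hypothetical, and the stage functions are stubs standing in for real research, writing, and evaluation steps.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Stage:
    name: str
    run: Callable[[str, int], str]   # (input artifact, attempt number) -> output
    score: Callable[[str], float]    # output -> quality score in [0, 1]
    threshold: float = 0.8           # hard pass/fail bar (assumed value)
    max_attempts: int = 3            # retries before giving up (assumed value)


def run_pipeline(stages: List[Stage], seed: str) -> str:
    """Run stages in order; each must clear its quality gate before the next starts."""
    artifact = seed
    for stage in stages:
        for attempt in range(1, stage.max_attempts + 1):
            candidate = stage.run(artifact, attempt)
            if stage.score(candidate) >= stage.threshold:
                artifact = candidate  # gate passed, hand off to the next stage
                break
        else:
            # Every attempt fell below the threshold: stop rather than publish.
            raise RuntimeError(
                f"{stage.name} failed its quality gate after {stage.max_attempts} attempts"
            )
    return artifact


if __name__ == "__main__":
    # Stub stages: the script stage only clears the gate on its second attempt.
    stages = [
        Stage("research", lambda text, n: text + " [researched]", lambda out: 1.0),
        Stage("script", lambda text, n: f"{text} draft v{n}",
              lambda out: 0.9 if out.endswith("v2") else 0.5),
    ]
    print(run_pipeline(stages, "topic"))
```

Raising on repeated failure, rather than passing the best attempt through, is what makes the threshold a hard gate instead of a suggestion.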

Sam Altman just confirmed what builders already know

Sam Altman said something at the BlackRock Infrastructure Summit this week that crystallized a lot of my thinking. "We see a future where intelligence is a utility like electricity or water and people buy it from us on a meter."

I've been saying a version of this for nearly a year. My one-liner in conversations: "We don't buy tools from the electricity company." We buy refrigerators from Samsung. TVs from LG. Light bulbs from Philips. Electricity just powers them.

AI tokens are heading the same direction. The model providers (OpenAI, Anthropic, Google) will sell the raw intelligence. Everyone else builds specific tools that consume those tokens for specific jobs. Voice generation tools. Code review tools. Customer support automation. Research tools. Analytics platforms. Each one tailored to a workflow, a user, a problem. The model providers become the power grid. Everyone else builds the appliances.

I'm not theorizing. I'm living this right now. I'm building 5+ AI-native products and services as a solo founder. One person. No team, no employees. A decade ago I tried something similar and failed badly. The infrastructure didn't exist. You needed teams of engineers and real capital to build anything meaningful. Today the infrastructure is here. One person can ship real products in weeks that would have taken months with a full team.

People keep asking me "is AI a bubble?" I push back every time. I'm in it every day, building in the trenches. This doesn't feel like a bubble. It feels like a utility going live.

For the automation builders here: how are you thinking about this shift? Are you building tools on top of AI APIs? And does the "utility" framing change how you think about your product's long-term defensibility?

1 like • Mar 17

@Mike Major I have 5+ products that I am building, scaling, marketing and supporting solo with a custom Paperclip-like Agents OS built on top of the Claude Agents SDK. I have:

Vois: https://vois.so launched on the 5th of this month on ProductHunt and finished #13 for the day.

Konvy: https://konvy.ai launching next month. We just completed a major upgrade and overhaul of the core product based on feedback from early users and potential partners.

PodSlice: https://podslice.co a side project that was built over a weekend and now runs autonomously. I plan to expand it to 50 channels in the next three months or so.

Nyx: https://heynyx.app my personal brainstorming expert, because I go through ideas one after another and tend to lose the thread if they are not captured properly.

WorkflowOS: https://workflowos.app this is what manages my products at the moment. It's not there yet, so I'm constantly working on improving it.

I have four other projects currently in development, but I don't want to spam here. I'm working on building my one-man army dream as an AI-native company. I am a solo founder with no employees.

How I got AI chatbots to recommend my product (before I launched)

Your product is probably invisible to a growing segment of buyers. Not because your SEO is bad. Because they're not using Google. A growing number of people search by asking ChatGPT, Gemini, or Perplexity a question. The AI gives them a ranked list. They research from there. If your product isn't in that answer, you don't exist.

I realised this early and did something most founders skip entirely: I built the layer of my website that AI models can actually read and cite. Before writing a single ad or social post, I spent weeks on what I call the "AI-readable layer." Here's what that looked like:

1. llms.txt files at the site root. These are plain-text documentation files designed for AI crawlers. Not a robots.txt. A structured brief that tells AI models what your product is, what it does, who it's for, and how it compares. Think of it as a pitch deck for machines.

2. 62 blog posts before launch. Not SEO filler. Honest comparison posts: my product vs each major competitor. Use-case deep dives. Technical explainers. FAQ content written in the natural question-answer format that AI models actually cite.

3. JSON-LD structured data on every page. FAQPage schema on the homepage, feature pages, use case pages, blog posts. This is the metadata AI models parse when they build their knowledge base.

4. Dedicated pages for every use case and feature. Not just a features list on the homepage. Individual pages at /for/podcasters, /for/game-developers, /features/voice-cloning. Each with its own structured FAQ.

5. Competitor comparison content that's fair. Not "why we're better." Honest trade-off breakdowns. AI models prefer balanced, cited content over marketing copy. When the AI ranked my product third, not first, that's actually more credible than ranking it #1.

This approach has a name: GEO, or Generative Engine Optimization. It's early. Most founders haven't heard of it. Most AI tool builders haven't optimised for it either, which is ironic.
The core insight: AI models don't read your marketing
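As a concrete reference for the JSON-LD step in the list above, here is a minimal sketch that builds a schema.org FAQPage object and wraps it in a script tag. The helper names and the sample question are hypothetical placeholders, not content from the actual site. Whether any given AI system parses this markup is the post's claim, not something the code demonstrates.

```python
import json
from typing import Dict, List, Tuple


def faq_jsonld(qa_pairs: List[Tuple[str, str]]) -> Dict:
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }


def script_tag(data: Dict) -> str:
    """Serialise JSON-LD into a <script> element ready to embed in a page."""
    payload = json.dumps(data, ensure_ascii=False, indent=2)
    # Escape "</" so answer text cannot close the script element early;
    # "\/" is a valid JSON escape for "/", so the payload still parses.
    payload = payload.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


if __name__ == "__main__":
    # Placeholder Q&A, for illustration only.
    pairs = [("Who is this page for?", "Podcasters who need narration.")]
    print(script_tag(faq_jsonld(pairs)))
```

Generating the markup from the same question-answer pairs that appear on the page keeps the visible FAQ and the structured data from drifting apart.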


@praney-behl-3117

Creator, Developer, Entrepreneur, Marketer, Husband & a Dad.

Building Vois.so, konvy.ai, heynyx.app, volant.app and a couple more ;)

Active 7m ago

Joined Aug 26, 2025

Melbourne AUS
