✨🎉🙏✨🎉THANK YOU NATE!! 🎉✨🙏✨🎉

@Nate Herk I am so grateful for your free AI education. What I learned from you and Sabrina Romanov, in combination, has taught me so much! Today, I won 2nd place in a worldwide app competition for women app builders! This app build would not have been possible without two of your videos specifically: the ones you made on Claude /superpowers and Claude /ultrathink. I developed an SOP based on everything in those videos and it worked perfectly. I used your teachings and one-shotted my very first demo production app, entered in a competition as a first-time app builder. It’s a demo app, so it uses fake data, which I also learned about from you as a way to test out app workflow. I couldn’t believe it today when I placed second in this worldwide competition!! And thank you for your free education. It was possible because of what I learned from you!! Honestly, all of the Claude best practices, all of your context-saving tips and tricks, and all of your systematized building methods are what enabled me to be successful. I just wanna say thank you @Nate Herk. You’ve honestly been so generous with your talent and time, giving away all this education for free, and I really appreciate you!!

And to everyone else who is reading this: if you’ve never built an app before and you’re stuck in research mode, just do it. After actually getting in there and working with all the tools, everything in Nate’s videos really started to make sense. I now have a level of confidence I never had before, and I’m really excited to see what I can do with this app. I’m planning to release it commercially.

At this time last year, I had never even touched AI. Just six months ago, I was only using ChatGPT like a search engine. Three months ago, I got serious and started thinking about building an app. On April 24, I started building my first project, and I had a deadline of May 1 to get it into the competition.

✨🎉THANK YOU TO NATE HERK. I LAUNCHED MY FIRST APP 🎉✨

I joined this community on Christmas Day of all days, and I've been here just over 4 months now. Since discovering vibe coding and really starting to dive into it in January, February, and March, I have watched every single video produced by @Nate Herk on YouTube. The thing about being a new AI builder is that it's so easy to get stuck in research mode, and that is where I was at *for months.* The dream I had of creating something useful and real was getting mired in thinking I needed to learn more and more before trying to launch something. Everything in Nate's videos started to click once I really started to dig into the tools, make mistakes, try to fix them, work around them, and persist through the disappointment when my first several tries didn't come out the way I wanted.

Last night I shipped my first Progressive Web Application and I have Nate to thank. It supports the members of my Skool community by helping them come up with a structured plan for goal attainment. (Funny story: I used the prompt-based version of that plan to actually build the PWA during the month of April... that's so meta) 🤣

Tonight I am working on what will end up becoming my first real production app. It's being entered in a contest on Friday. Fingers crossed. The HUGE unlock for this big app deployment was Nate's video on using the Claude skill /superpowers. I have been in a planning session with the /superpowers skill for about two hours, refining everything on a localhost screen until the UI/UX is just right. It then built an awesome deployment handbook for me and saved it in the local documents folder. How cool is that? The goal of using the /superpowers skill is to spend 95% of the time upfront nailing down all the details and getting them right, so that when you use a massive amount of tokens to make your build, you aren't disappointed with the output. I know that one-shot builds are pretty rare and I'm not expecting perfection, but this should get me 90% of the way there.

My First n8n Workflow – Hacker News Scraper 🚀

Just built my very first n8n workflow and I'm honestly hooked! 🎉 It's a simple Hacker News scraper that pulls the top 10 items on demand with a single click. Nothing fancy, but it feels amazing to automate something for the first time. The drag-and-drop flow in n8n makes it so intuitive even as a beginner. Next up, I want to automatically send these results to a Google Sheet or Telegram channel. Any tips from the community? 👇 Drop a 🔥 if you remember your first automation!
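For anyone curious what the same idea looks like outside n8n, here is a minimal Python sketch. It assumes the public Hacker News API hosted on Firebase (`topstories.json` plus one `item/<id>.json` call per story); the post does not say which source the n8n workflow reads from, so treat that as an assumption. The names `top_stories` and `fetch_json` are made up for illustration.

```python
import json
from typing import Callable
from urllib.request import urlopen

# Assumption: the public Hacker News API, not necessarily what the n8n workflow calls.
HN_API = "https://hacker-news.firebaseio.com/v0"


def fetch_json(url: str):
    """Download a URL and decode its JSON body."""
    with urlopen(url, timeout=10) as response:
        return json.load(response)


def top_stories(limit: int = 10, fetch: Callable[[str], object] = fetch_json) -> list:
    """Return title and link for the first `limit` top story IDs."""
    story_ids = fetch(f"{HN_API}/topstories.json")[:limit]
    stories = []
    for story_id in story_ids:
        item = fetch(f"{HN_API}/item/{story_id}.json")
        if not item:  # the API can return null for an item; skip it
            continue
        stories.append({
            "title": item.get("title", ""),
            # Text posts have no external URL, so fall back to the discussion page.
            "url": item.get("url", f"https://news.ycombinator.com/item?id={story_id}"),
        })
    return stories


if __name__ == "__main__":
    for rank, story in enumerate(top_stories(), start=1):
        print(f"{rank}. {story['title']} ({story['url']})")
```

The `fetch` parameter is only there so the function can be tried with canned data instead of a live request. The returned list of dictionaries is the kind of row-shaped data that could later be pushed to a sheet or a chat channel.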

🏆 Community Wins Recap | May 2 – May 8

Big closes. AI Lead roles. SaaS momentum. Retainers. Equity. Real systems getting shipped. This week inside AIS+ was packed with builders turning reps into real opportunities 👇

🚀 Standout Wins of the Week inside AIS+

👉 @James Tagalog landed an AI/Automation Lead role and jumped from $87K → $130K while realizing the interviews cared more about real-world thinking than memorized prep.

👉 Riaz Ahamed crossed $60K+ in client work since joining AIS+ as a complete beginner last year, and is now building GDPR-compliant Claude Code systems for EU clients.

👉 @Michael Elliott closed a $31K website rebuild + AI chatbot + retainer deal and shared the exact communication moves that helped secure the project.

👉 @Chris Atsu closed a €16K AI automation system for a marketing agency after holding firm through negotiation pressure.

👉 @Fernando Gómez shipped a real estate WhatsApp lead-classification system for a Málaga agency with €3.2K upfront + €299 MRR attached immediately.

🎥 Super Win Spotlight | @Jan Goergen Makinson

Jan joined AIS+ after completing the AFT Challenge because he wanted to go deeper into AI automation and surround himself with builders actually doing the work. Since joining, he and his team have:

• Built their own property-management SaaS using Claude Code + Lovable
• Expanded the software for additional residential complexes in Cyprus
• Signed a long-term AI documentation project with a new client
• Turned that client relationship into both a monthly retainer AND equity in the company

One of the biggest lessons Jan shared: You can learn tools from YouTube… But you can’t replace having helpful people around you when things get difficult.

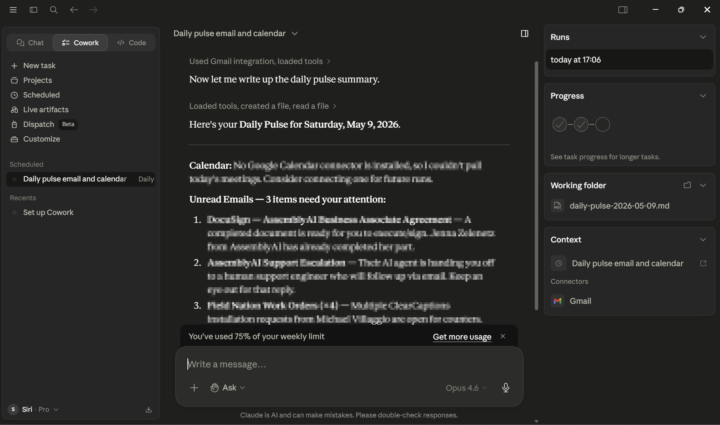

Day 6: Scheduled Automation - 7days AIS Challenge

I learned how Claude Code’s loops and scheduled tasks work, and I used them to build a daily automation that reviews my inbox, summarizes new emails, checks the status of my active projects, and generates a task list for the day. Since the workflow runs on a daily, weekly, and monthly cycle, scheduled tasks were the best fit because they persist reliably. This automation now replaces the 30-60 minutes I used to spend skimming emails and organizing my day.
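To make the daily, weekly, and monthly cycle concrete, here is a small Python sketch of how a single daily trigger could decide which cycles are due. It is not how Claude's scheduled tasks are configured; the function name `due_cycles` and the choices of Monday for the weekly run and the 1st for the monthly run are assumptions for illustration only.

```python
from datetime import date


def due_cycles(today: date) -> list:
    """List which cycles should run on `today`.

    Assumptions (not from the post): weekly work runs on Mondays,
    monthly work runs on the 1st of the month.
    """
    cycles = ["daily"]  # the daily review always runs
    if today.weekday() == 0:  # Monday is 0 in datetime
        cycles.append("weekly")
    if today.day == 1:
        cycles.append("monthly")
    return cycles


if __name__ == "__main__":
    print(due_cycles(date.today()))
```

Calling `due_cycles(date(2025, 9, 1))` returns all three cycles, because that date is both a Monday and the first of the month.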

skool.com/ai-automation-society

Learn to get paid for AI solutions, regardless of your background.