Pinned

🎉 We have our FIRST graduate of the 7-Day Challenge!

Huge congrats to @Antra Verma for being the first to cross the finish line 👏

To celebrate, we're hooking her up with a FREE AIS shirt, and her official completion certificate is attached below 🏆

Let's give her a massive round of applause in the comments, she set the bar! Can't wait to see more of you submit your projects and join the graduate club.

👉 Want to take on the challenge? Head to the Classroom section or jump in HERE

👕 And if you want to grab some AIS merch for yourself, check it out HERE

Cheers everyone! - Nate

Pinned

🚀 New Video: 32 Claude Code Hacks in 16 Mins

I went from complete beginner to mass-producing workflows, websites, and AI agents in real time. This video covers 32 Claude Code hacks I actually use, sorted from beginner to pro. The best ones are saved for the end.

Pinned

🏆 Weekly Wins Recap | Apr 18 – Apr 24

From high-ticket deals and agency SaaS launches to client systems, websites, and real-world automations - this week inside AIS+ was packed with serious builder energy.

🚀 Standout Wins of the Week

👉 Michael Wacht closed a $10K AI Readiness Assessment deal, sponsored by finance with training and system-integration readiness included.
👉 @Uros Pesic signed a £9K UK agency client for a 3-month ops audit and used multi-agent Claude Code to prep 20+ interviews in parallel.
👉 @Fernando Gómez turned a corporate social-media automation system into an agency SaaS with €2.5K setup + €100/month per client.
👉 @George Mbajiaku closed his first $1,300 client by shifting his pitch from “n8n builder” to “problem solver.”
👉 @Josh Holladay wrapped a 30-day client sprint and earned a retainer offer for ongoing strategy, builds, and AI education.

🎥 Super Win Spotlight | Balaji Iyer

Balaji joined AIS+ knowing he could build something useful - but he needed structure, clarity, and confidence. Since joining, he has:

• Set up his own cloud instance, Docker, Postgres, and self-hosted n8n
• Built a real backend workflow from scratch
• Created an app he now improves daily
• Moved from “Can I really do this?” to “How can I make this better?”

His biggest shift? Going from sitting on the sidelines → to finally building something he’s proud of. Balaji’s journey is proof that once you take the first step, momentum starts to build.

🎥 Watch Balaji’s story 👇

✨ Want to see wins like this every week? Step inside AI Automation Society Plus and start building assets that compound 🚀

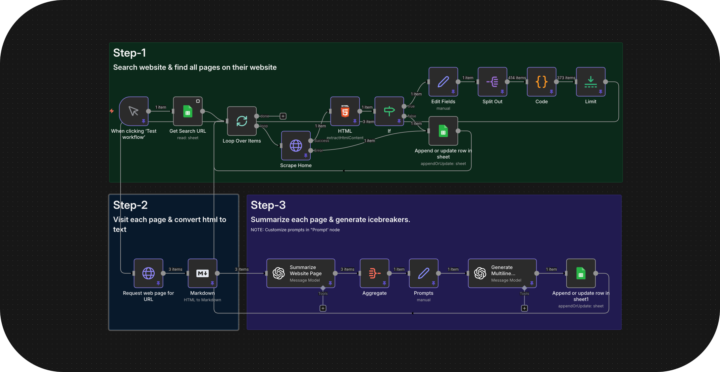

Why your cold emails get ignored (and how I fixed mine to book 9 calls/week)

I've been running cold email campaigns for clients for 3 years and the biggest shift I've seen isn't the tools. It's what actually gets a reply.

Personalization used to mean scraping a name and company from LinkedIn, dropping it in the first line, and hitting send. "Hey {FirstName}, I noticed {Company} and thought..." That worked in 2022. It's dead now. Everyone's doing it and prospects can spot a mail merge from the subject line.

What changed for me was treating personalization like actual research instead of a data field. Here's what I started doing:

→ I scrape the prospect's entire website. Not just the homepage. Blog posts, service pages, case studies, about page, even their contact form if it's there.
→ Then I feed all of that into OpenAI and have it analyze what they actually do, who they serve, and what problems they're likely dealing with.

The AI doesn't just summarize. It finds the specific details nobody mentions in generic outreach. So instead of "I saw you work in logistics," the email opens with "Noticed you handle cross border freight into Mexico. Your blog mentioned customs delays eating 15% of delivery windows." That's the kind of line that gets opened because it doesn't sound like 500 other emails they got that week.

The reply rates went from 2-3% with generic personalization to 8-10% with actual research. One prospect replied last week: "Your email won because you actually read our site. Everyone else sent the same template."

The system I built does this automatically. Scrapes the website. Analyzes every page. Generates icebreakers that reference non-obvious details. It writes openers like a human who spent 20 minutes studying their business, except it does it for 1,000 prospects in an hour.

Here's what I learned building this: Small prompt details make a massive difference. Having OpenAI shorten company names naturally (say "Stripe" not "Stripe Inc.") and reference specific pages beyond the homepage makes it feel real.

The difference between "I saw your website" and "I saw your freight tracking dashboard lets customers get ETAs without calling" is everything.
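
For anyone who wants to try the prompt side of this, here is a minimal sketch of the two prompt details mentioned above (short company names, pages beyond the homepage). It is not the actual system from this post. The suffix list, the function names, and the `llm` callable are all assumptions for illustration; `pages` is assumed to be a dict of URL path to already-scraped text, and `llm` stands in for whatever function wraps your OpenAI client.

```python
import re
from typing import Callable, Dict

# Hypothetical suffix list; extend it for the markets you prospect in.
LEGAL_SUFFIXES = r"(?:inc|llc|ltd|corp|co|gmbh|plc)"


def shorten_company(name: str) -> str:
    """Drop a trailing legal suffix, e.g. 'Stripe Inc.' becomes 'Stripe'."""
    pattern = rf"[,\s]+{LEGAL_SUFFIXES}\.?$"
    return re.sub(pattern, "", name.strip(), flags=re.IGNORECASE)


def build_prompt(company: str, pages: Dict[str, str], max_chars: int = 1500) -> str:
    """Assemble one prompt from every scraped page, not just the homepage."""
    sections = [
        f"### {path}\n{text[:max_chars]}"  # truncate long pages to keep the prompt small
        for path, text in pages.items()
    ]
    return (
        f"You are researching {shorten_company(company)} for a cold email.\n"
        "Write a one-sentence opener that references a specific, non-obvious "
        "detail from a page other than the homepage. "
        "Use the short company name and do not summarize the site.\n\n"
        + "\n\n".join(sections)
    )


def write_icebreaker(company: str, pages: Dict[str, str], llm: Callable[[str], str]) -> str:
    """Send the prompt to the model wrapper you pass in and return the opener."""
    return llm(build_prompt(company, pages)).strip()
```

Keeping the model call behind a plain callable means you can test the prompt with a stub first and plug in a real client later.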


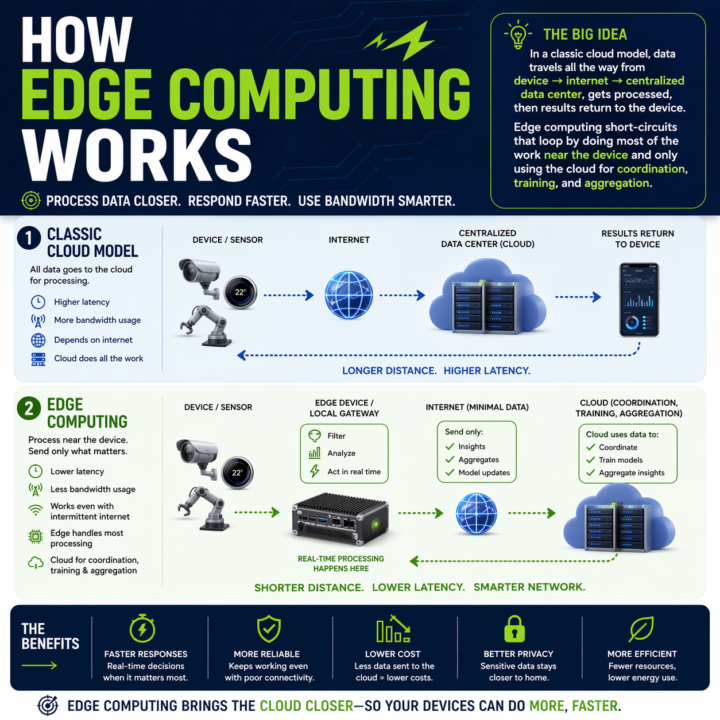

Your Claude API call is eating 1.2 seconds. Here is when that stops being acceptable.

Every automation you build has a round trip baked in. Claude API, OpenAI call, n8n webhook, vector DB lookup. Data leaves the device, travels to a data center, gets processed, comes back. On a good day a cached Claude call runs 600ms to 1.2s. On a bad day you are watching a spinner.

Where that round trip breaks:
- Real-time perception in vehicles or robotics
- Industrial control loops that cannot wait on a network
- Anywhere connectivity is spotty or intermittent
- High-volume sensor data where shipping everything to the cloud burns bandwidth and budget

Edge changes the math. Compute runs where the data is generated. Local work stays local. Cloud only sees what needs cross-site context.

The split that is emerging:
- Edge: real-time inference, event detection, filtering, local control
- Cloud: model training, cross-site analytics, long-term storage, heavy compute

On-device SLMs are usable now. Llama 3.1 8B on an M-series Mac via Ollama hits sub-100ms first token. Haiku-class reasoning is running on phones with NPUs.

If your automation touches physical systems, live audio, live video, or anything latency-sensitive, you have a real placement decision to make: edge, cloud, or hybrid.

The pattern I keep reaching for: route by cost of latency. If a one-second delay breaks the experience, run it local. If the step needs cross-context memory or a frontier model, send it up. Cheap decisions at the edge, expensive ones in the cloud, with the edge doing the filter so the cloud only sees signal.

The architecture question has changed. Not "which cloud model do I call," but "where does each step of this pipeline need to run."

What is running in your stack right now that should not be making a cloud round trip? What is stopping you from moving it?
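
If it helps to make "route by cost of latency" concrete, here is a rough sketch of the rule as a placement function. The `Step` fields, the 1200 ms constant (the slow end of the range quoted above), and the choice to default non-urgent steps to the cloud are all assumptions for illustration, not a standard.

```python
from dataclasses import dataclass

# Assumed worst-case cloud round trip, taken from the 600ms to 1.2s range in the post.
CLOUD_ROUND_TRIP_MS = 1200


@dataclass
class Step:
    name: str
    latency_budget_ms: int              # how long the step can take before the experience breaks
    needs_cross_context: bool = False   # needs memory or data from other sites or sessions
    needs_frontier_model: bool = False  # a small local model is not good enough


def place(step: Step) -> str:
    """Return 'edge', 'cloud', or 'hybrid' for one pipeline step."""
    tight = step.latency_budget_ms <= CLOUD_ROUND_TRIP_MS
    needs_cloud = step.needs_cross_context or step.needs_frontier_model
    if tight and needs_cloud:
        # Edge reacts and filters locally; only the filtered signal goes up.
        return "hybrid"
    if tight:
        return "edge"
    # Assumption: anything that can wait defaults to the cloud.
    return "cloud"


pipeline = [
    Step("obstacle-detect", latency_budget_ms=50),
    Step("voice-reply", latency_budget_ms=300, needs_frontier_model=True),
    Step("weekly-report", latency_budget_ms=60_000, needs_cross_context=True),
]
placements = {s.name: place(s) for s in pipeline}
```

Running every step of a pipeline through one function like this makes the placement decision explicit instead of defaulting everything to a cloud call.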


skool.com/ai-automation-society

Learn to get paid for AI solutions, regardless of your background.
