Pinned

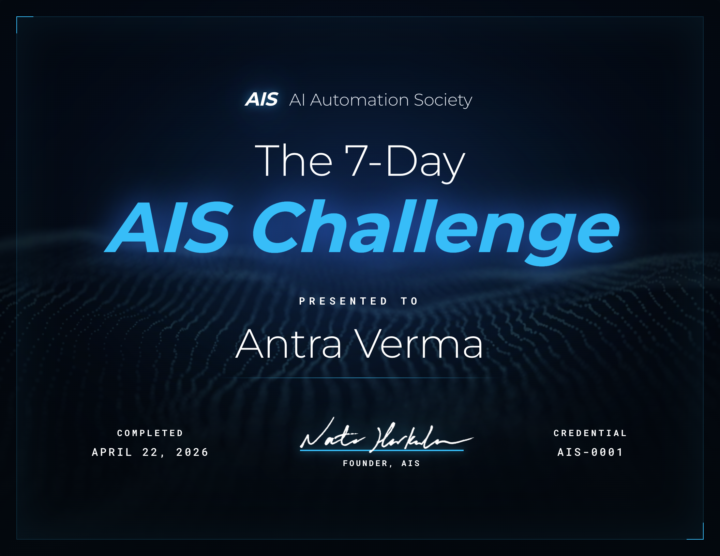

🎉 We have our FIRST graduate of the 7-Day Challenge!

Huge congrats to @Antra Verma for being the first to cross the finish line 👏 To celebrate, we're hooking her up with a FREE AIS shirt, and her official completion certificate is attached below 🏆

Let's give her a massive round of applause in the comments, she set the bar! Can't wait to see more of you submit your projects and join the graduate club.

👉 Want to take on the challenge? Head to the Classroom section or jump in HERE

👕 And if you want to grab some AIS merch for yourself, check it out HERE

Cheers everyone!
- Nate

Pinned

🚀 New Video: 32 Claude Code Hacks in 16 Mins

I went from complete beginner to mass-producing workflows, websites, and AI agents in real time. This video covers 32 Claude Code hacks I actually use, sorted from beginner to pro. The best ones are saved for the end.

Pinned

🏆 Weekly Wins Recap | Apr 18 – Apr 24

From high-ticket deals and agency SaaS launches to client systems, websites, and real-world automations - this week inside AIS+ was packed with serious builder energy.

🚀 Standout Wins of the Week

👉 Michael Wacht closed a $10K AI Readiness Assessment deal, sponsored by finance with training and system-integration readiness included.
👉 @Uros Pesic signed a £9K UK agency client for a 3-month ops audit and used multi-agent Claude Code to prep 20+ interviews in parallel.
👉 @Fernando Gómez turned a corporate social-media automation system into an agency SaaS with €2.5K setup + €100/month per client.
👉 @George Mbajiaku closed his first $1,300 client by shifting his pitch from “n8n builder” to “problem solver.”
👉 @Josh Holladay wrapped a 30-day client sprint and earned a retainer offer for ongoing strategy, builds, and AI education.

🎥 Super Win Spotlight | Balaji Iyer

Balaji joined AIS+ knowing he could build something useful - but he needed structure, clarity, and confidence. Since joining, he has:

• Set up his own cloud instance, Docker, Postgres, and self-hosted n8n
• Built a real backend workflow from scratch
• Created an app he now improves daily
• Moved from “Can I really do this?” to “How can I make this better?”

His biggest shift? Going from sitting on the sidelines → to finally building something he’s proud of. Balaji’s journey is proof that once you take the first step, momentum starts to build.

🎥 Watch Balaji’s story 👇

✨ Want to see wins like this every week? Step inside AI Automation Society Plus and start building assets that compound 🚀

Please Read | Rules and Guidelines 📜

1) 🚫 No Business Promotions → NO “DM me for…” or "Comment 'Automation'" posts.
2) 🔗 No Linking Your Own Community/YouTube Videos
3) 🏷️ Title Specifically
4) 🔍 Search for Help First (searchbar)
5) 🙌 Stay Respectful
6) ❌ Enforced Clean‑Up → Posts that break these rules will be removed without warning.

If you ever have questions, feel free to ask. Let’s make this the best AI Automation community out there by sharing, collaborating, and learning together. 🚀

Finally Introducing myself

Hello builders, I have been playing around with Claude, trying to find what I want to do. I know it's more than generating images and videos. I guess I'm not too aware of what I can build with Claude with no experience, just interest. So far, I see I can make websites, apps, carousels, and so on, but I guess I'm not grasping it fully. Hard to describe. Maybe it's because I think there are so many steps involved, and/or because of imposter syndrome.


skool.com/ai-automation-society

Learn to get paid for AI solutions, regardless of your background.
