
Owned by Matthew

Practical AI training for work and life. Hands-on lessons with Claude, ChatGPT, and automation tools. Built for people ready to use AI.

Memberships

AI Bits and Pieces

716 members • Free

AI Automation Society

357.4k members • Free

Skoolers

190.6k members • Free

132 contributions to AI Bits and Pieces

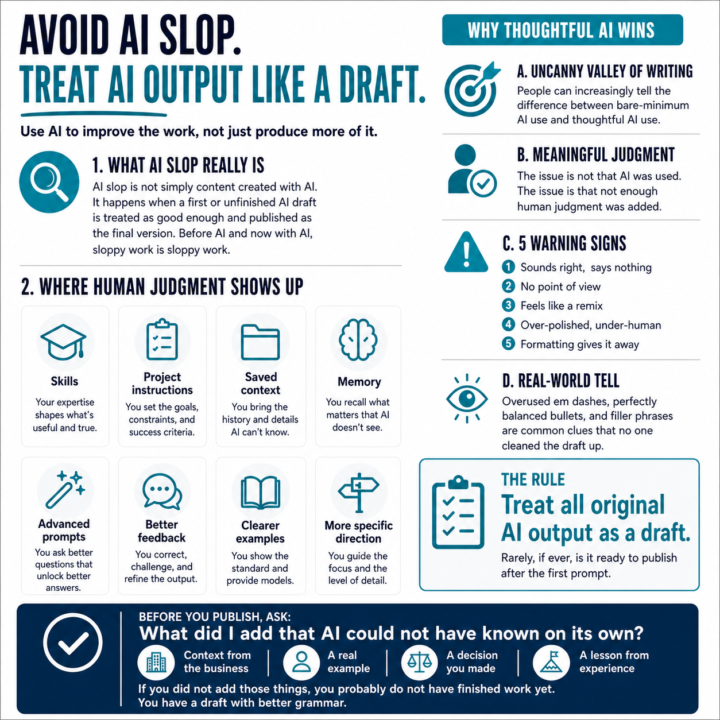

⚠️ AI Slop: Uncanny Valley of Writing

The latest buzzword is “AI slop.” And honestly, I think it is a useful one. But AI slop is not simply “content created with AI.” That misses the point. AI slop is what happens when someone treats a first or unfinished AI draft as “good enough” and publishes it as the final version. Decent but unfinished content is not the antidote to procrastination. Before AI, and now with AI, sloppy work is sloppy work.

We are quickly approaching a time when, in a professional environment, people can tell the difference between bare-minimum AI use and thoughtful AI use. You have probably heard of the uncanny valley problem with AI images, where something looks almost right but still feels off. I believe something similar is starting to happen with AI writing. The same intuition that tells people an image was generated by AI also starts to work against anyone who presents unedited AI output as their own thinking.

Not because they used AI. Because they did not add enough meaningful human judgment. And that judgment can show up in a lot of ways:
- Skills.
- Project instructions.
- Saved context.
- Memory.
- Advanced prompts.
- Better feedback to the LLM.
- Clearer examples.
- More specific direction.

Thoughtful AI use creates better content, deeper meaning, and a sharper perspective.

My rule is pretty simple:
👉 Treat all original AI output as a draft, and accept that it will rarely, if ever, be ready to publish after the first prompt. Always a draft.

Here are five warning signs I look for:
1. It sounds right, but says nothing. If you can delete the sentence and the meaning does not change, cut it.
2. There is no point of view. If anyone could have written it, no one will remember it.
3. It feels like a remix. AI is very good at summarizing what already exists. Your job is to add the experience, the example, or the opinion.
4. It is over-polished, but under-human. Perfect grammar does not equal trust. Sometimes the post needs a shorter sentence. A rougher line. A little more of you.

AI Bits & Pieces is now 700 members strong!

We just crossed 700 members in AI Bits & Pieces, and I want to take a moment to say thank you.

When this community started, the idea was simple: AI is becoming a life skill. For the AI Curious. For the AI Beginner. For the AI Enthusiast. For the AI Practitioner. For the business owner. For the person simply trying to keep up. For everyone.

AI is becoming part of how we think, write, plan, research, learn, create, and make decisions. And for many people, the hardest part is not understanding every technical detail. The hardest part is knowing where to start.

That is what AI Bits & Pieces is here for. A place to learn without feeling behind. A place to ask basic questions without judgment. A place to see real examples, practical workflows, and honest tool testing. A place where curiosity matters more than credentials.

As the community grows, the goal remains the same: help people build practical AI fluency one step at a time. You don't need to learn everything by tomorrow. You just need steady progress.

Some members are brand new to AI. Some are using it every day. Some are building workflows, automations, content systems, or businesses. And some are simply trying to understand how this technology fits into their work and life. All of that belongs here.

I also want to recognize everyone on the leaderboard! You are the people who continue to show up, comment, ask questions, share examples, and make this feel like a real learning community. That participation matters more than most people realize. Content helps. Tools help. But people make the community useful.

So thank you for being here, whether you joined at member 7, member 70, or member 700. We are still early. And we are building AI fluency together, one bit and piece at a time.

Build your skills: Helping Non-Profits

Helping non-profits is one of the smartest ways to start in AI automation. You get real-world problems to solve, not theoretical ones. You sharpen your execution, build systems that actually get used, and learn what breaks outside of controlled environments. At the same time, you’re contributing to something that matters.

The upside compounds:
- Stronger portfolio with real outcomes
- Referrals from trusted networks
- Exposure without paid acquisition
- Faster skill development under real constraints

If you’re early, don’t wait for perfect clients. Go where the problems are real and the stakes matter. That’s where capability gets built.

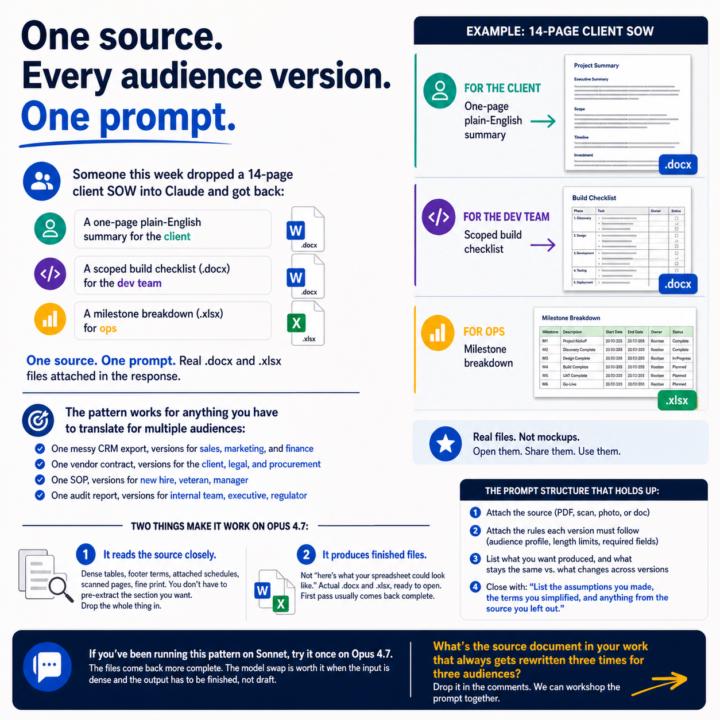

One source. Every audience version. One prompt.

This week, someone dropped a 14-page client SOW into Claude and got back:
- A one-page plain-English summary for the client
- A scoped build checklist (.docx) for the dev team
- A milestone breakdown (.xlsx) for ops

One source. One prompt. Real .docx and .xlsx files attached in the response.

The pattern works for anything you have to translate for multiple audiences:
- One messy CRM export: versions for sales, marketing, and finance
- One vendor contract: versions for the client, legal, and procurement
- One SOP: versions for a new hire, a veteran, and a manager
- One audit report: versions for the internal team, an executive, and a regulator

Two things make it work on Opus 4.7:
1. It reads the source closely. Dense tables, footer terms, attached schedules, scanned pages, fine print. You don't have to pre-extract the section you want. Drop the whole thing in.
2. It produces finished files. Not "here's what your spreadsheet could look like." Actual .docx and .xlsx files, ready to open. The first pass usually comes back complete.

The prompt structure that holds up:
- Attach the source (PDF, scan, photo, or doc).
- Attach the rules each version must follow (audience profile, length limits, required fields).
- List what you want produced, and what stays the same vs. what changes across versions.
- Close with: "List the assumptions you made, the terms you simplified, and anything from the source you left out."

That last line is what lets you trust the output. You review the decisions Claude made instead of comparing every line against the source.

If you've been running this pattern on Sonnet, try it once on Opus 4.7. The files come back more complete. The model swap is worth it when the input is dense and the output has to be finished, not a draft.

What's the source document in your work that always gets rewritten three times for three audiences? Drop it in the comments. We can workshop the prompt together.

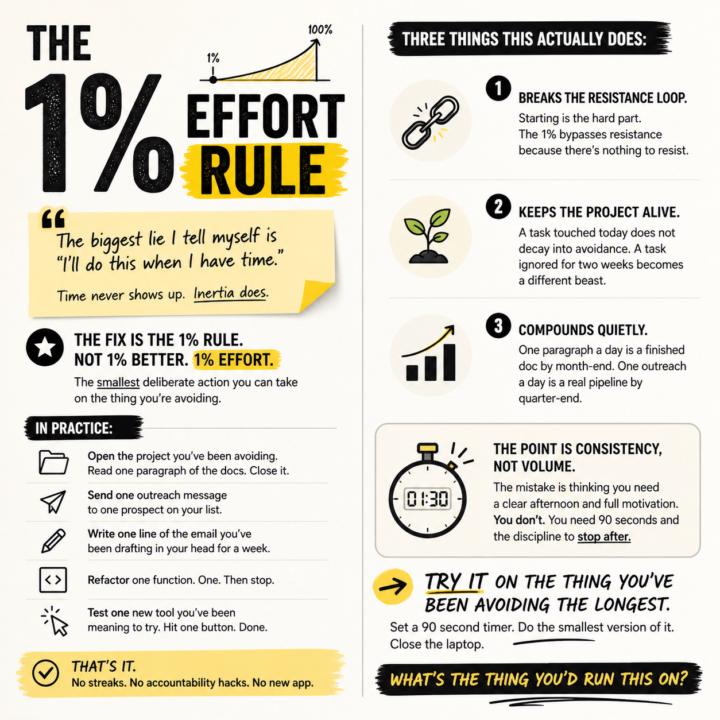

The 1% effort rule

The biggest lie I tell myself is "I'll do this when I have time." Time never shows up. Inertia does.

The fix is the 1% rule. Not 1% better. 1% effort. The smallest deliberate action you can take on the thing you're avoiding.

In practice:
- Open the project you've been avoiding. Read one paragraph of the docs. Close it.
- Send one outreach message to one prospect on your list.
- Write one line of the email you've been drafting in your head for a week.
- Refactor one function. One. Then stop.
- Test one new tool you've been meaning to try. Hit one button. Done.

That's it. No streaks. No accountability hacks. No new app.

Three things this actually does:
1. Breaks the resistance loop. Starting is the hard part. The 1% bypasses resistance because there's nothing to resist.
2. Keeps the project alive. A task touched today does not decay into avoidance. A task ignored for two weeks becomes a different beast.
3. Compounds quietly. One paragraph a day is a finished doc by month-end. One outreach a day is a real pipeline by quarter-end.

The point is consistency, not volume. The mistake is thinking you need a clear afternoon and full motivation. You don't. You need 90 seconds and the discipline to stop after.

Try it on the thing you've been avoiding the longest. Set a 90-second timer. Do the smallest version of it. Close the laptop.

What's the thing you'd run this on?



@matthew-sutherland-4604

AI Automation Architect @ ByteFlowAI | Skool Community Owner of “AI for Life” (Claude.ai, CoWork, Claude Code).


Joined Dec 14, 2025

Mid-West, United States
