Memberships

Easy Machine AI

1.7k members • Free

CC Strategic AI

3.3k members • $27/month

Clief Notes

29.7k members • Free

13 contributions to Clief Notes

Think like an engineer, not a hack!

Just had a perfect reminder while building out a client workflow. Yeah, you could throw an LLM at generating a thumbnail for a PDF. Or... a little bash command:

"magick bulletin-050326.pdf[0] -background white -alpha remove -alpha off bulletin-050326-tb.png"

One line. One battle-tested tool. Instant result. This is the difference between hacking around with AI and actually shipping like an engineer.

The Unix/Linux world is full of these quiet, rock-solid one-liners that have been refined for decades. ffmpeg, pdftk, exiftool, jq, ripgrep, parallel... the list goes on. Before you reach for the shiny new wrapper, ask yourself: what's the battle-tested command line way? (Pro tip: ask Claude or Grok to show you the classics. You'll be amazed how often the 20-year-old tool still wins.)

Simple. Reliable. Fast. That's engineering. 🚀
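The one-liner above extends naturally to a folder of PDFs. The following is a minimal sketch, not the author's actual workflow: the function names `thumb_name` and `make_thumbs` are made up for illustration, and it assumes ImageMagick 7 (the `magick` binary) is installed, which typically relies on Ghostscript to read PDFs.

```shell
# thumb_name PDF: derive the output name used in the post (foo.pdf -> foo-tb.png).
thumb_name() {
  printf '%s-tb.png\n' "${1%.pdf}"
}

# make_thumbs PDF...: render the first page ([0]) of each PDF as a PNG,
# flattening transparency onto white, using the same flags as the one-liner.
make_thumbs() {
  local pdf
  for pdf in "$@"; do
    # Quoting "${pdf}[0]" keeps the shell from treating [0] as a glob pattern.
    magick "${pdf}[0]" -background white -alpha remove -alpha off \
      "$(thumb_name "$pdf")" || return 1
  done
}
```

Usage would be something like `make_thumbs *.pdf` from the directory holding the bulletins. The loop stops at the first PDF that fails to convert.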

0 likes • 2h

Yeah, the Unix/Linux community has built some amazing built-in tools, and a lot of them are 50+ years old. I did a C++ course at QLD TAFE (like community college) in 2002. My teacher was a diehard Unix fan and wouldn't accept work if it had Windows line endings. One of our assignments was writing an ecommerce store in sh. Not Bash, but sh. Mine turned out amazing, but if you asked me to redo it today, I've forgotten 99% of it. It was an amazing terminal ecommerce experience, though. I got an A+.

Stop using MCPs ... mostly

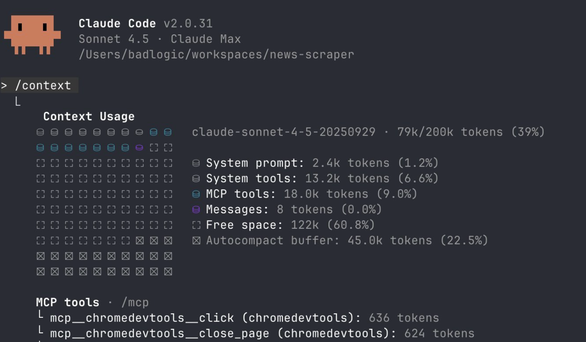

OK, maybe not all of them, but for the most part you should be able to eliminate 90%. I stumbled across this old blog post from the creator of PI AI (https://pi.dev/) and it's genius. It shows you how to easily remove the common web browser MCPs and replace them with code.

WHY? Because when you run executable code, you no longer need the complex MCP queries, which are typically bloated to cover every use case. It simply runs the code. 1 line. What you need, not what 1 million users need.

Unfortunately I found it about a week late. I could have used it a week earlier, when I was having trouble scraping the Epic Games site for Unreal Engine documentation. I tried a number of different scrapers, including Skill-Seekers and one I built for SEO about 5 months ago, and then decided to use Firecrawl. I had to pay for it, though, as it's over 6000 pages. So $36 later...

THE BLOG POST: https://mariozechner.at/posts/2025-11-02-what-if-you-dont-need-mcp/

Having 4-6 MCPs connected really adds some overhead. I even noticed this issue with Codex: I had to uninstall Vercel (3x bigger than GitHub) and a bunch of others. So, moral of the story: keep your MCPs very light, if you use any at all. Even the GitHub MCP is pretty much pointless unless you're doing heavy actions or worktrees, e.g. using 5 agents on 5 different trees. If you're just doing light pushes, pulls, merges, and commits, then you don't need the GitHub MCP. And after reading this post you can safely get rid of Playwright, Puppeteer, Chrome MCP browser tools, etc.

All scripts can be downloaded here: https://github.com/badlogic/browser-tools
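For the light push/pull/merge/commit case mentioned above, plain git from a small script is usually all an agent needs. This is a rough sketch under stated assumptions, not something taken from the linked blog post or repo: the helper names `run` and `commit_and_push` are hypothetical, the remote is assumed to be `origin`, and the default branch is assumed to be `main`.

```shell
# run CMD...: print the command when DRY_RUN=1, otherwise execute it.
run() {
  if [ "${DRY_RUN:-0}" = "1" ]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}

# commit_and_push MESSAGE [BRANCH]: stage everything, commit, rebase onto the
# remote branch, then push. Assumes a remote named "origin"; BRANCH defaults
# to "main". The chain stops at the first failing step (for example, when
# there is nothing to commit).
commit_and_push() {
  local msg="$1" branch="${2:-main}"
  run git add -A &&
  run git commit -m "$msg" &&
  run git pull --rebase origin "$branch" &&
  run git push origin "$branch"
}
```

Running `DRY_RUN=1 commit_and_push "fix typo" dev` prints the four git commands without touching the repository, which is a cheap way to check what an agent is about to do.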

🧪 New benchmark out

New benchmark out of Meta FAIR, Stanford, and Harvard called ProgramBench.

The setup: you get a compiled executable plus its docs. Source code stripped. Rebuild the program from scratch in any language you want. Tests check input/output behavior against the original binary. 200 tasks, from small CLI tools up to FFmpeg, SQLite, and the PHP interpreter.

📊 Results across 9 models: Zero tasks fully solved. Opus 4.7 was the best, passing 95% of tests on only 3% of tasks. GPT 5.4, Gemini 3.1 Pro, and Haiku 4.5 hit 0% in that bucket.

The interesting part is section 5. Even the model solutions that "worked" looked nothing like the human reference. Median 1,173 lines vs 3,068 in the original. Flat directories. Fewer functions, each one longer. GPT 5.4 wrote 96% of its final code in a single turn on most tasks and never modified existing files on roughly 40% of runs.

🎯 Why it matters for us: The benchmark separates writing code from designing software. Models can produce syntax all day. They cannot yet decompose a real system into coherent modules, pick the right abstractions, or organize a codebase the way a working engineer would. That gap is what computational orchestration points at. It is also where the durable value lives.

🛠 Try it: Pick an easier task from the repo (the paper flags nnn, fzf, gron, and jq as more tractable). Run it against Claude or your model of choice. Watch where you and the model split. Note the design decisions you make that the model never even raises. Post your runs and attempts to create a harness that would allow the model to do it. Wins, failures, weird outputs, all of it.

📍 Paper and Repo: ProgramBench

I'm building something on top of this right now. More soon.
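If you want to try your own runs, the core check described above (same input, compare behavior against the original binary) can be sketched in a few lines of shell. This is not ProgramBench's actual harness, just an illustration: `same_behavior` is a made-up name, and it only compares stdout and exit status, ignoring stderr, timing, and any files the program writes.

```shell
# same_behavior REF CAND [ARGS...]
# Runs the reference program and the candidate rebuild with the same arguments
# and the same stdin, and succeeds only if stdout and exit status both match.
# Note: command substitution strips trailing newlines from the captured output.
same_behavior() {
  local ref="$1" cand="$2" tmp ref_out cand_out ref_rc cand_rc
  shift 2
  tmp=$(mktemp) || return 2
  cat > "$tmp"   # capture stdin once so both programs see identical input
  ref_out=$("$ref" "$@" < "$tmp") && ref_rc=0 || ref_rc=$?
  cand_out=$("$cand" "$@" < "$tmp") && cand_rc=0 || cand_rc=$?
  rm -f "$tmp"
  [ "$ref_out" = "$cand_out" ] && [ "$ref_rc" -eq "$cand_rc" ]
}
```

For example, `echo '{"a":1}' | same_behavior ./original-binary ./my-rebuild .a` would report whether a rebuild agrees with the original on one input (both paths here are placeholders). Looping that over a directory of test inputs gives a crude pass rate.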

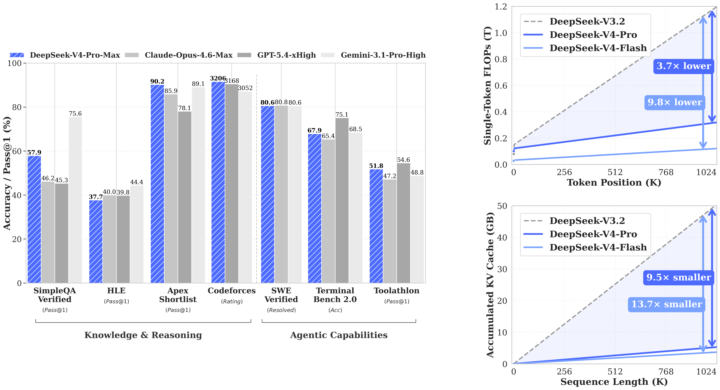

WOW! GPT-5.5 and the much-awaited DeepSeek V4 Flash & Pro dropped today

What a Friday.

For two years, if you were building anything serious with AI, you were building on Claude. Not because it was a rule, but because it was the right call. Anthropic set the bar for coding. They set the bar for writing. They set the quiet default that if you cared about quality, you paid the Opus premium and didn't ask questions. I didn't either. The whole builder community ran on Claude for a reason.

This week, that changed. GPT-5.5 shipped yesterday. DeepSeek V4 Pro shipped the same day. Inside twenty-four hours, the ceiling on agentic coding went up, and the open-weight floor came within striking distance of the closed frontier. Real contenders. Not "almost there." Actually here.

Three things this changes for anyone building, and none of them are in the headlines yet.

Coding: The default setting of "Claude writes the code, Claude runs the agents" breaks this week. GPT-5.5 is measurably better on the kind of long-running multi-step agent work that used to be Claude's moat. DeepSeek V4 Pro is within a fraction on real software engineering, at a price point where "run it myself" is genuinely on the table. Every tool in your stack that quietly assumed Anthropic (your IDE integrations, your review agents, your automation glue) is about to get reconsidered. That's good for you. Less lock-in. More leverage.

Marketing and writing: The price-per-draft math just flipped. We've been rationing the good model forever: the flagship handles the brand-safe stuff, volume work gets the cheap model, and we've all quietly accepted that frontier-quality writing at scale isn't possible. That's over. Frontier-quality writing at open-weight pricing means every ad variant, every email rewrite, every landing-page test, every personalization loop runs at the top tier. The whole architecture of "one good draft, fifty cheap copies" starts feeling as dated as shared creative. Everything top-tier. Everything personalized. Everything testable.

Agentic work: This is the one I am most excited about, and the one that gets talked about least. For two years, "multi-model agent stacks" has been a slide in decks. Nobody actually builds them, because there hasn't been a real second option. GPT-5.5 for the reasoning step. DeepSeek V4 Pro for the long-context research step. Claude for the interpretive writing step. A cheap open model for the high-volume structured step. Not one runtime. A pipeline. Composed by you. Owned by you. That stops being a slide and starts being the default next month.

0 likes • 18d

@Praney Behl yeah, I've been using it. Can't really tell the difference, which is why I was going off benchmarks. I'm a Codex lover; I can't stand how finicky Claude is with context usage. It feels like I'm always diagnosing issues with Claude itself and trying really hard not to give it detailed prompts. It's frustrating when I'm on Max. At least with Codex I've never had to worry about it, and I've never hit limits.

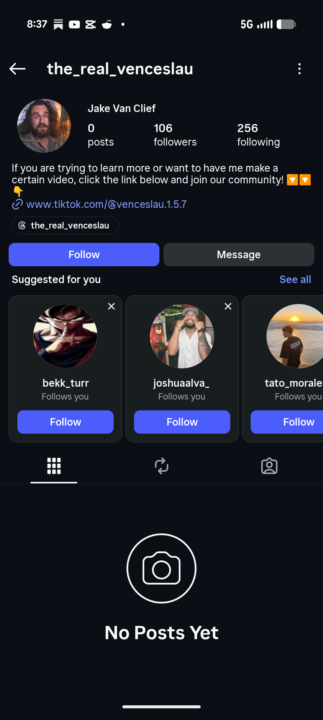

Fake Account Alert

A fake account is pretending to be me. Report it and deal with it however you can. https://www.instagram.com/the_real_venceslau?igsh=cG9rbTN2M3Iyd3Rx

@garratt-campton-2631

Solopreneur @ https://mantlekit.dev @ http://gcampton.com

Active 60m ago

Joined Mar 29, 2026
