Memberships

Creator Academy

8.2k members • Free

Self Publishers Unite!

500 members • Free

Voiceover Masterclass

121 members • Free

KDP Publishing

1k members • Free

Podcaster Pals

97 members • Free

Creator Profits

19k members • Free

Voice AI Bootcamp 🎙️🤖

9k members • Free

The AI Advantage

121.8k members • Free

Zero to Hero with AI

12.4k members • Free

25 contributions to Clief Notes

best skills for youtube transcript scraping?

best skills for youtube transcript scraping? go!

5 likes • 9d

@Ricky Miskin may I ask what the end goal is? Thanks, @David Vogel. The reason I'm asking is that there are quite a few factors, especially if you're using the skill with, I'm guessing, Claude Code or Codex. Google is actively rejecting YouTube requests from OpenAI as well as Anthropic. So if this is part of a workflow, or if you're trying to download transcripts for videos in bulk, I generally go to Gemini for anything video-related. Gemini is, unfortunately, the only model at the moment that works with videos. I prefer Google AI Studio for extracting a lot of information and transcribing the videos themselves. The other incredible tool, depending on your use case, is NotebookLM: I generally hook my coding agent up to it using the NotebookLM skill, and it turns the coding agent into something significantly smarter.

Afternoon Tea Session 2 was crazy fun

I'll be making a bigger write-up here shortly on all this, but there were lots of questions, answers, and more questions. Play this in the car on the way home from work, or use it like another lesson!

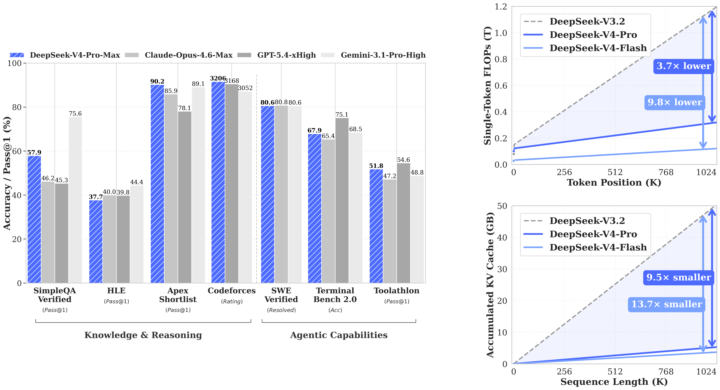

WOW! GPT-5.5 and most awaited Deepseek V4 Flash & Pro dropped today

What a Friday. For two years, if you were building anything serious with AI, you were building on Claude. Not because it was a rule, but because it was the right call. Anthropic set the bar for coding. They set the bar for writing. They set the quiet default that if you cared about quality, you paid the Opus premium and didn't ask questions. I didn't either. The whole builder community ran on Claude for a reason.

This week, that changed. GPT-5.5 shipped yesterday. DeepSeek V4 Pro shipped the same day. Inside twenty-four hours, the ceiling on agentic coding went up, and the open-weight floor came within striking distance of the closed frontier. Real contenders. Not "almost there." Actually here. Three things this changes for anyone building, and none of them are in the headlines yet.

Coding: The default setting of "Claude writes the code, Claude runs the agents" breaks this week. GPT-5.5 is measurably better on the kind of long-running multi-step agent work that used to be Claude's moat. DeepSeek V4 Pro is within a fraction on real software engineering, at a price point where "run it myself" is genuinely on the table. Every tool in your stack that quietly assumed Anthropic (your IDE integrations, your review agents, your automation glue) is about to get reconsidered. That's good for you. Less lock-in. More leverage.

Marketing and writing: The price-per-draft math just flipped. We've been rationing the good model forever: the flagship handles the brand-safe stuff, volume work gets the cheap model, and we've all quietly accepted that frontier-quality writing at scale isn't possible. That's over. Frontier-quality writing at open-weight pricing means every ad variant, every email rewrite, every landing-page test, every personalization loop runs at the top tier. The whole architecture of "one good draft, fifty cheap copies" starts feeling as dated as shared creative. Everything top-tier. Everything personalized. Everything testable.

Agentic work: This is the one I am most excited about, and the most under-talked-about. For two years, "multi-model agent stacks" has been a slide in decks. Nobody actually builds them, because there hasn't been a real second option. GPT-5.5 for the reasoning step. DeepSeek V4 Pro for the long-context research step. Claude for the interpretive writing step. A cheap open model for the high-volume structured step. Not one runtime. A pipeline. Composed by you. Owned by you. That stops being a slide and starts being the default next month.

0 likes • 11d

@Jarad Nelson I can totally hear you. At my heart, I am a perfectionist as well, and in my past that was the only thing keeping me from moving forward. I have built more than 10 custom harnesses and they all work like a charm, but the amount of time it consumes to maintain and upgrade them—especially working with AI when things change every other day—is jarring. I have learned not to try to reinvent the wheel repeatedly and to stand on the shoulders of giants. Instead of creating my own harnesses, I try to find community-maintained harnesses that are flexible enough for me to build on top of while still being able to ingest upstream updates. Paperclip is a great example that I have grown to like; I have built several custom plugins on top that work in harmony with upstream changes. If you haven't come across it, I suggest you check it out. There are other similar projects, but for now I'm sticking with Paperclip as it provides most of what I want without getting in my way.

Introducing the Hermes-Stack

Consider this a thank-you, @Jake Van Clief, for the inspiration and for giving us the mindset and tools to grow our businesses.

***Disclaimer: this is an advanced setup. DO NOT use it until you're fully comfortable with Jake's method.***

Many of us eventually hit the limit with basic chat interfaces. The agent forgets context between sessions, data security starts to feel risky, and the setup creates more friction than value. This stack addresses those issues directly.

It combines Hermes Agent with Cognee as the memory engine, hosted on a simple DigitalOcean Droplet and secured through Cloudflare Tunnel. The entire deployment follows Jake Van Clief’s Interpretable Context Methodology for clean, repeatable orchestration. The result is a private, self-improving AI agent that grows more capable over time while keeping your data and server fully under your control.

The components stay minimal and transparent: Hermes Agent as the autonomous gateway, Cognee for structured relational memory, and Cloudflare Tunnel for secure outbound-only access. You deploy once using the ICM workflow, then the system handles the repetitive memory management and self-improvement loops. The agent becomes a genuine thinking partner instead of a one-off responder. That frees up your attention for the judgment and creative work only you can do.

If you are running a self-hosted agent setup or exploring similar private stacks, I would like to hear what you are using and what friction you have solved. Drop your thoughts below.

1 like • 20d

@David Vogel thanks, David. This is very interesting. One question: the Hermes agent already learns over time, so why would you use something like Cognee with it? Isn't that functionality included out of the box with Hermes? Why stack another layer on top? With multiple memory infrastructures, don't you think it could conflict with the native one?

Anyone played with Andrej Karpathy's "LLM Wiki" idea from the gist he dropped?

Quick version in case you missed it: instead of using RAG to re-chunk your sources every time you ask a question, you compile each source once into a persistent markdown wiki. The LLM extracts concepts, writes entity and concept pages, updates cross-references, flags contradictions, and maintains the whole thing. Future queries read the pre-synthesized wiki.

The part that clicked for me: the reason most of us abandon our second brains is that backlink and cross-reference upkeep is boring. The LLM doesn't care. It's happy to touch fifteen pages in one pass.

I spent a couple of weeks turning Karpathy's pattern into a Claude Code plugin that actually scales (atomic pages, sharded indexes, BM25 fallback past ~300 pages). It also runs in Codex, Cursor, Gemini CLI, Pi, and OpenClaw through the skills CLI.

Install in Claude Code:

/plugin marketplace add praneybehl/llm-wiki-plugin

/plugin install llm-wiki@llm-wiki

Or in any other supported agent:

npx skills add praneybehl/llm-wiki-plugin -a <your-agent>

Five slash commands (init, ingest, query, lint, stats), stdlib-only Python, no dependencies. Plays well with Obsidian if you want the graph view.

Repo: https://github.com/praneybehl/llm-wiki-plugin

Karpathy's gist: https://gist.github.com/karpathy/442a6bf555914893e9891c11519de94f

Curious if anyone here has tried the pattern themselves. What did you ingest first, and what broke before it worked?
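For anyone wondering what a BM25 fallback over wiki pages might look like, here is a minimal stdlib-only sketch. It is not the plugin's actual code: the function names (`tokenize`, `bm25_rank`), the `k1` and `b` defaults, and the idea of passing pages as a name-to-text dict are all assumptions made for illustration.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def bm25_rank(pages, query, k1=1.5, b=0.75):
    """Rank pages (dict of name -> markdown text) against a query with BM25.

    Returns a list of (name, score) pairs, highest score first.
    Hypothetical helper for illustration, not part of any real plugin.
    """
    docs = {name: tokenize(text) for name, text in pages.items()}
    n_docs = len(docs)
    if n_docs == 0:
        return []
    avg_len = sum(len(tokens) for tokens in docs.values()) / n_docs

    # Document frequency: in how many pages each term appears.
    df = Counter()
    for tokens in docs.values():
        df.update(set(tokens))

    query_terms = set(tokenize(query))
    scores = {}
    for name, tokens in docs.items():
        tf = Counter(tokens)
        score = 0.0
        for term in query_terms:
            if term not in tf:
                continue
            # Smoothed IDF that stays non-negative for common terms.
            idf = math.log(1 + (n_docs - df[term] + 0.5) / (df[term] + 0.5))
            # Term frequency saturation with length normalization.
            norm = tf[term] + k1 * (1 - b + b * len(tokens) / avg_len)
            score += idf * tf[term] * (k1 + 1) / norm
        scores[name] = score
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)
```

Calling `bm25_rank(pages, "compiled wiki")` on a handful of pages returns them ordered by relevance, so an agent could read only the top few instead of the whole index once the wiki gets large.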


@praney-behl-3117

Creator, Developer, Entrepreneur, Marketer, Husband & a Dad.

Building Vois.so, konvy.ai, heynyx.app, volant.app and a couple more ;)

Active 18h ago

Joined Mar 10, 2026

Melbourne AUS
