Activity

Mon

Wed

Fri

Sun

May

Jun

Jul

Aug

Sep

Oct

Nov

Dec

Jan

Feb

Mar

Apr

What is this?

Less

More

Memberships

The AI Advantage

99.2k members • Free

4 contributions to The AI Advantage

Eager to make life easier

Hello I am from Canada(still lots of snow here in the North) eager to make my life easier ( personal and business

hello

Hi everyone, I’m Els from Belgium 👋 I joined this community because I want to truly understand AI — not just how to use it in a business, but how to use it in a smarter, more strategic way. Where I live, most people only focus on applying AI inside a company.But I believe AI can go much further than that — especially for building something of your own. In just one week here, I’ve already learned more than I would have from many expensive courses. Right now, I’m exploring how to use AI to: - create content faster - build online products - and grow a business with more freedom and structure Curious to connect with others who are also serious about growth and doing things differently 🚀

0 likes • 11h

@AI Advantage Team Great question — for me, content is the starting point. Right now, I’m focusing on creating consistent content to build visibility and trust.At the same time, I’m preparing my first ebooks, so content will naturally lead into products. Once that foundation is in place, I want to use AI more for automation — to save time and scale what’s already working. So for me, it’s:Content → Products → Automation I believe that order makes the growth more sustainable and structured 🚀

0 likes • 11h

@Mark Kurywczak To be honest, I’m still very new to AI, and at times it has felt overwhelming. Over the past two months, I’ve been searching for the right course or platform to help me turn AI into a practical tool in my life — something that gives me clarity and confidence in what I’m building. There were moments where I even doubted launching my ebooks, simply because everything around AI seemed so complex and fast-moving. But what I appreciate here is the step-by-step approach.It makes everything feel more structured and doable, and it’s already giving me more confidence that I’m on the right path. “My goal now is to move from learning into action and finally launch what I’ve already created.”

⚠️ The Biggest Mistake Entrepreneurs Make With AI

The biggest mistake entrepreneurs make with AI is simple. They chase tools instead of results. They try a dozen platforms. Test random prompts. Consume endless AI content. And still never build a real advantage. That is where people get stuck. Because AI is not about knowing every tool. It is about using the right AI to buy back time, reduce overwhelm, and create more leverage in the parts of the business that matter most. That is the shift. The entrepreneurs pulling ahead are not using AI to create more noise. They are using it to create more time. More time to think. More time to lead. More time to build. More time to focus on growth instead of getting buried in repetitive work. That is what most people miss. They use AI like a novelty instead of a system. They generate content faster, but do not improve workflow. They move quicker, but not smarter. They add output, but not leverage. And without leverage, speed just creates more chaos. The real opportunity with AI is not doing more for the sake of more. It is using AI to simplify work, shorten cycle time, improve marketing and sales execution, and remove the manual tasks that steal hours every week. That is why this matters now. AI is moving fast, but entrepreneurs do not need every update, every hack, or every new tool. They need a clear path to use AI in ways that actually improve how they operate. So the mistake is not ignoring AI completely. The mistake is using AI in a scattered way that keeps people busy instead of making them better. The entrepreneurs who win with AI will be the ones who stop asking, “What tool should I try next?” And start asking, “How can I use AI to get back time and multiply what I can do?” That is where the real advantage begins.

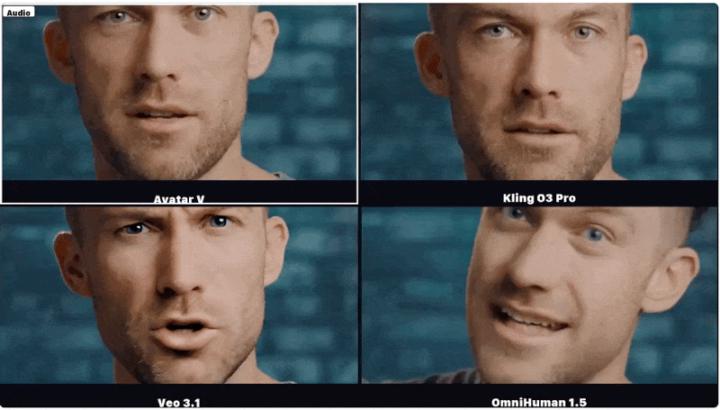

📰 AI News: HeyGen Just Unveiled an Avatar Model That Pushes AI Video Much Closer to “Real”

📝 TL;DR HeyGen says its new Avatar V model can generate long-form talking avatar videos from a single reference video, while preserving not just someone’s face, but their speaking style too. That is a big step because the gap is no longer just visual quality, it is whether AI video can feel recognizably human. 🧠 Overview HeyGen has introduced Avatar V, its latest avatar video generation system, built to create high-resolution talking-head videos from one reference video plus a driving audio track. The company says the model can preserve both static identity traits, like facial structure and texture, and dynamic traits, like speaking rhythm, expressions, and head movement. That matters because most avatar tools can mimic appearance, but often lose the subtle behavioral cues that make someone feel real. 📜 The Announcement HeyGen published Avatar V on April 8, 2026 as a research release describing the model architecture, training pipeline, demos, and benchmark results. According to the company, the system can generate avatar videos of arbitrary length, handle cross-scene generation, and outperform several leading methods across identity preservation, lip sync, and motion naturalness. It also says the model was trained through a five-stage pipeline that moved from broad video pretraining to more specialized alignment for avatar quality and human preference. ⚙️ How It Works • Single video reference - Avatar V uses one reference video to learn both how a person looks and how they naturally move while speaking. • Audio-driven generation - A driving audio signal tells the avatar what to say, while the model generates matching mouth movement, expressions, and timing. • Full video conditioning - Instead of compressing identity into a tiny summary, the model uses the full token sequence from the reference video for richer detail. • Longer context, better identity - HeyGen says longer reference clips help the model capture talking cadence, micro-expressions, and gestural habits more accurately.

1-4 of 4