Memberships

Skoolyard 🧃

1k members • Free

Our Peaceful Liberation 🌎✌🏽

34 members • Free

AI Automation (A-Z)

153.4k members • Free

Free Skool Course

66.6k members • Free

AI Automation Society

350.2k members • Free

High Vibe Tribe

80.5k members • Free

New Earth Network

24 members • Free

New Earth Community

7.1k members • Free

AI Automation Agency Hub

314.2k members • Free

6 contributions to AI Automation Society

✨🎉THANK YOU TO NATE HERK. I LAUNCHED MY FIRST APP 🎉✨

I joined this community on Christmas Day of all days, and I've been here just over 4 months now. Since discovering vibe coding and really starting to dive into it in January, February, and March, I have watched every single video @Nate Herk has produced on YouTube. The thing about being a new AI builder is that it's so easy to get stuck in research mode, and that is where I was *for months.* My dream of creating something useful and real was getting mired in the belief that I needed to learn more and more before trying to launch anything. Everything in Nate's videos started to click once I really dug into the tools, made mistakes, tried to fix them, worked around them, and persisted through the disappointment when my first several tries didn't come out the way I wanted.

Last night I shipped my first Progressive Web Application, and I have Nate to thank. It supports the members of my Skool community by helping them come up with a structured plan for goal attainment. (Funny story: I used the prompt-based version of that plan to actually build the PWA during the month of April... that's so meta.) 🤣

Tonight I am working on what will end up becoming my first real production app. It's being entered in a contest on Friday. Fingers crossed. The HUGE unlock for this big app deployment was Nate's video on using the Claude skill /superpowers. I have been in a planning session with the /superpowers skill for about two hours, refining everything on a localhost screen until the UI/UX is just right. It then built an awesome deployment handbook for me and saved it in the local documents folder. How cool is that? The idea behind the /superpowers skill is to spend 95% of the upfront time nailing down all the details and getting them right, so that when you spend a massive amount of tokens on the build, you aren't disappointed with the output.
I know that one-shot builds are pretty rare and I'm not expecting perfection, but this should get me 90% of the way there.


@Theresa Elliott That makes it even better. You didn’t just build an app. You used your own 28-day plan to prove it works from zero. That’s real signal. AND it reminds me of a film… Starting from nothing on April 1st and shipping something people are already using a few weeks later is no small thing. That’s how confidence compounds.

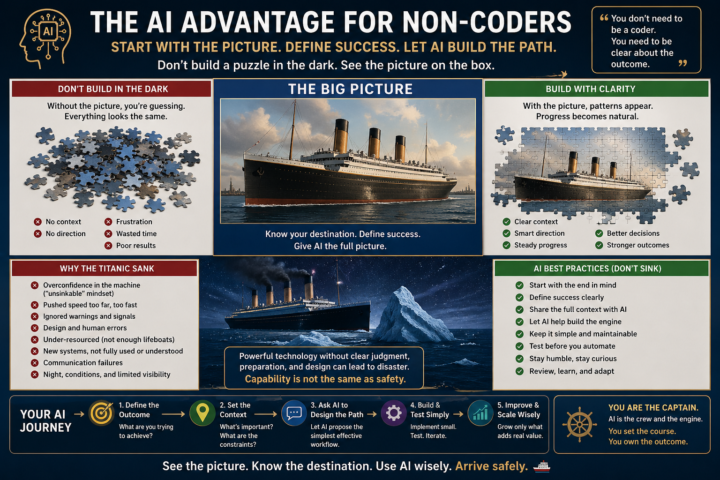

What I learned about AI, including AUTOMATION as a non-coder: THINK PUZZLES without a PICTURE (AI-AS Potential Customers)

SORRY FOLKS: This post was designed for start-ups/improvers in Tony, Dean and IGOR's AI Advantage Club. I was going to take it down, BUT it could still be useful here… WHY: These folks are your future customer targets: those who do AI but struggle with the AUTOMATION side. TELL ME IF IT'S NOT RELEVANT and I will take it down…

A SIMPLE AI SYSTEM USED WELL BEATS A COMPLICATED ONE USED BADLY.

You do not need to be a coder to work well with AI. You do not need to speak in technical language. You do not need to build complicated automations before you understand what you are actually trying to achieve.

You need to talk to AI clearly. Tell it what you do. Tell it what you want. Tell it what success looks like. That is the bit many beginners miss.

A lot of people approach AI as if they are trying to solve one tiny puzzle piece at a time:
- “What prompt do I use for this?”
- “What command do I type?”
- “What tool do I connect?”
- “What automation should I build?”

That can work, but it can also create confusion very quickly. A better way, especially for creative thinkers, is to start with the whole picture.

Think of a jigsaw puzzle. If someone gives you a thousand pieces but does not show you the picture on the box, you might still make progress, but it will be slow, frustrating, and full of guesswork.

Now imagine the picture is the Titanic. If the Titanic is still in Southampton, you have useful context. You can see the ship, the dock, the land, the colours, the structure. You can start to understand where the pieces belong. But if the Titanic is halfway across the Atlantic, surrounded by sea and sky, everything starts to look the same: blue above, blue below, no landmarks, no clear edges.

That is what happens when you ask AI for isolated pieces without giving it the picture. The AI may still help, but it is guessing along with you.

So my biggest learning is this: do not start by asking AI for one puzzle piece. Start by showing it the picture on the box.
Say something like: “I am trying to build a simple workflow that helps me create, organise, and publish content without overwhelming myself. I am not a coder. I want low friction, clear steps, and reusable prompts. Success means I can use this every week without getting lost.”

Anyone else? Issues with Claude Code and Codex today

Is it just me, or are both of these platforms acting up badly today? This is me right now, in the middle of my first real build.

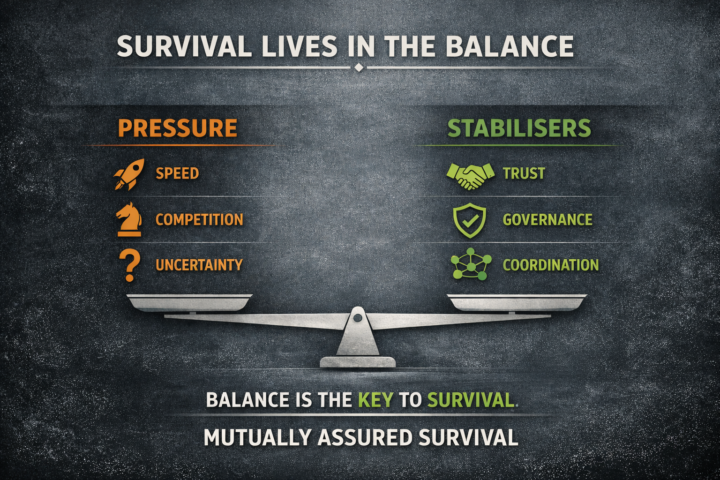

Why Governance Fails Without Verification

A lot of people say AI needs better governance. They are right. But governance without verification can become theatre. Before any powerful system can be coordinated well, regulated well, or trusted well, we have to know whether the signal inside it is actually reliable. Otherwise we are not building on truth. We are building on noise.

That matters because weak signals do not stay small for long. In AI, a bad output is rarely just a bad output. It can become a bad decision, a bad workflow, a bad recommendation, a bad policy, or a bad escalation. And the faster the system moves, the faster that distortion travels.

This is why verification matters so much. Verification is what tells us whether the model is grounded. Whether the data is credible. Whether the output is reproducible. Whether the confidence is earned. Whether the human system around the tool is seeing reality clearly enough to act on it.

Without that, governance becomes mostly procedural. It may look serious. It may sound responsible. It may generate policies, committees, frameworks, and approvals. But if the underlying signal is unstable, none of that solves the real problem. It just gives error a more official route through the system.

That is why verification comes first. Governance matters. Coordination matters. Trust matters. But all three depend on whether the signal is sound. In other words:
- Governance sets boundaries.
- Coordination aligns action.
- Trust reduces friction.
- Verification tells us whether we are even responding to reality.

Without verification, governance can manage the appearance of control while losing control underneath. That is not safety. That is delay before failure.

This is also why I keep coming back to a Mutually Assured Survival view of AI. The question is not just how fast capability scales. It is whether the systems around it can detect error, challenge false confidence, and stay coherent under pressure.
Because once capability outruns verification, governance starts reacting to outputs it cannot truly validate.

Why Speed Is Not the Real Measure of Safety in AI

We live in an age that mistakes speed for strength. Faster models. Faster markets. Faster decisions. Faster escalation. Faster reaction. Faster deployment. Faster everything. And because speed looks like power, many people assume that the fastest system will also be the safest, the smartest, or the one most fit to lead the future.

But that is not how real systems survive. A civilisation does not become safe simply because it can move quickly. In many cases, speed without structure does the opposite. It magnifies error. It compresses reflection. It rewards reaction over judgement. It turns competition into instability and instability into crisis.

The real question is not how fast a system can go. The real question is whether the system can carry the weight of its own speed. That is the beginning of a Mutually Assured Survival mindset.

Mutually Assured Survival does not start from fantasy. It does not assume that people suddenly become wiser, kinder, or morally perfected. It starts from a harder and more useful truth: human beings and institutions will continue to compete, improvise, protect their interests, and make mistakes. The challenge, then, is not to eliminate pressure from the world. The challenge is to build systems strong enough to stop pressure from turning into collapse.

This is where much of modern thinking goes wrong. We often frame danger as if it were caused by acceleration alone. We say the world is moving too fast. AI is moving too fast. Politics is moving too fast. Technology is moving too fast. Sometimes that is true. But speed is only half the story. The deeper issue is whether trust, governance, and coordination are keeping pace with power. If they are not, then every gain in power increases fragility. A more powerful system with weak stabilisers is not truly stronger. It is more dangerous to itself.

This matters because history shows that breakdown rarely begins with a single dramatic failure.
More often, it begins when systems become too imbalanced to absorb stress. Pressure rises. Incentives harden. Communication narrows. Rivalry intensifies. Uncertainty spreads. The visible machinery still moves, but the invisible supports begin to weaken.


@kevin-brown-2649

✨ From Trauma to Transcendence ✨

Through Eterna Works Creative, I craft books, music, and worlds that help humanity remember who we truly are.


Joined Apr 3, 2026

North West England & Greece
