
Owned by Jesse

A creative lab exploring music, media, AI, and healing through real-world experiments, collaboration, and doing better—together.

Memberships

The AI Advantage

99.2k members • Free

23 contributions to The AI Advantage

AI Governance — Let’s Talk About Getting This Right

We’re all building fast. But we’re not having the right conversation at the same speed.

Here’s the question: What if AI governance didn’t start with companies or governments…but with the individual?

A different starting point:

1. Personal Guardian Layer

Every person has a private AI that:
- protects their data
- acts in their interest
- controls how external systems interact with them

No silent access. No hidden extraction.

2. Departments of Life (baseline for humanity)

Before innovation, before profit:
- Housing
- Water
- Power
- Healthcare
- Education

These aren’t luxuries. They’re the foundation. What if governance ensured these first, globally and transparently?

3. Open Standards, Not Closed Systems

Instead of competing in silos:
- shared protocols
- transparent operations
- auditable systems

Companies still innovate, but on top of a trusted, open foundation.

4. Distributed Oversight

No single authority. Governance includes:
- technical experts
- medical and legal experts
- lived experience (this part is missing today)

Multiple perspectives. Shared responsibility.

5. Contribution Over Control

People choose how they contribute:
- build
- create
- support
- explore

Some go big. Some live simple. Both are valid.

The shift

This isn’t about removing success, money, or ambition. It’s about asking: What if no one had to struggle for the basics…and everything else was built on top of that stability?

AI is the first tool we’ve had that could actually help coordinate something like this. So the real question is: Are we building systems that compete for control…or systems that coordinate for humanity?

I’m not claiming to have the full answer. But I do think this is the conversation we should be having, together.

What am I missing?

The Layered AI World

A System That Finally Works for Humans

Opening: Reality

I went to the hospital after multiple seizures. They told me to sit under fluorescent lights in a waiting room. No dark space. No accommodation. No awareness of what my body was going through.

So I left. Not because I didn’t need help, but because the system couldn’t adapt to me.

That’s the problem we’re not talking about.

AI is being positioned as the future of everything. Smarter systems. Faster decisions. Better outcomes. But if the system itself is broken, AI doesn’t fix it. It just makes the failure more efficient.

The Breaking Point

For years, I worked inside systems that were supposed to support recovery. Workers’ compensation. Healthcare. Structured pathways. On paper, everything existed. In reality, access didn’t. Support was conditional. Communication was fragmented. Solutions were delayed or removed entirely.

At some point, the shift happens. Responsibility quietly moves:

From system → to individual
From support → to self-navigation
From care → to compliance

And once you see it, you can’t unsee it.

That’s where this started. Not as an idea, but as a refusal to keep operating inside systems that don’t adapt when it matters most.

The Pattern (It’s Not Just One System)

This isn’t about one failure. It’s a pattern.

In work systems, people are expected to perform at their best when they’re operating at their worst. In healthcare, environments can actively trigger the conditions they’re supposed to treat. In enforcement, medical realities get misinterpreted as resistance.

Different systems. Same flaw: they require the human to adapt, even when the human physically can’t.

That’s not support. That’s survival disguised as process.

The Realization

AI isn’t the answer by itself, because AI dropped into a broken system just scales the problem.

The real shift is this: the system must adapt to the human. And now, for the first time, we actually have the tools to do it.

The Model: The Layered AI System

What I’ve been building isn’t just AI.

Health4Real - Chapter 5: Fixing Socialized Medicine in Canada (Guardian AI Health)

Dick’s Case (Real-World Friction)

Dick goes to the hospital after multiple seizures. His eye is in severe pain, light sensitivity is extreme, and he has no one to drive him, so he calls 911. He asks for low-light accommodation. The workaround? A blanket over his head in transport.

At the hospital, he’s sedated with Ativan (no opioids, by choice). He finally starts to settle. Then, without warning, someone pulls the blanket off under fluorescent lights. Another seizure.

Not because the system lacks care, but because it lacks design awareness. Dick loses count of seizures that day. Not from lack of treatment, but from environmental mismanagement inside the system itself.

This is not an edge case. This is a systems failure.

Dick also wants to be clear about something: the paramedics and hospital security showed care. They recognized he was agitated post-seizure, kept things as calm as they could, and even apologized that they couldn’t do more. When the people inside the system are saying “we know, we’re sorry,” that’s not a people problem. That’s a system problem.

Healthcare in Canada was built on a simple promise: care based on need, not wealth. That promise still matters, but the system delivering it is under strain. Patients are waiting too long for diagnostics, emergency rooms are overloaded, and care is fragmented across disconnected services.

This chapter is not about abandoning public healthcare. It’s about modernizing it so it actually works again.

The Problem

Right now, the system operates like this:
- Emergency rooms are the default entry point
- Urgent care and clinics are inconsistent or under-equipped
- Diagnostics like MRIs are bottlenecked and separated
- Funding decisions are influenced by politics instead of performance

The result: people don’t move through the system. They get stuck in it.

The Model: A Better System

1. One Front Door

Create regional Health Access Hubs:
- Single intake point for non-emergency care
- Rapid triage (human + AI-assisted)
- Immediate routing to the right level of care


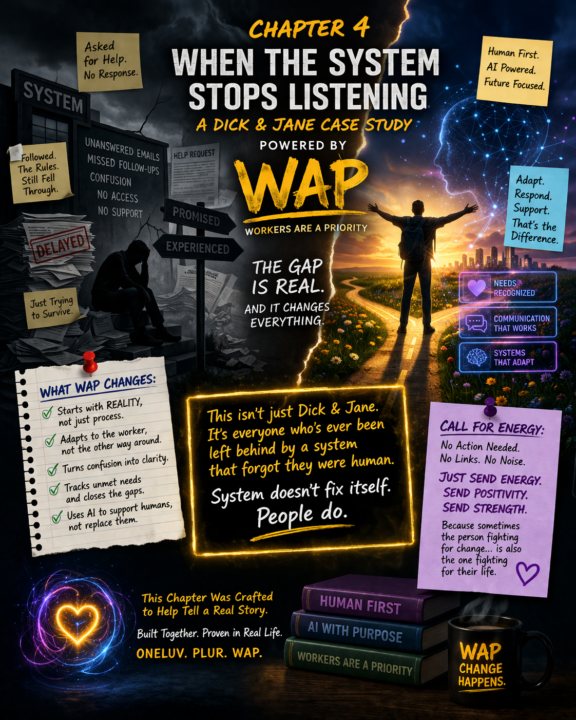

Chapter 4 — When the System Stops Listening

The Dick & Jane Case Study: Powered by WAP (Workers Are a Priority)

Dick followed the rules. Jane trusted the system. They both believed that if something went wrong, there was a process, a path, a structure designed to help them recover, stabilize, and return to life.

That belief held…right up until it didn’t.

The Breakdown

At first, it was small things. A delayed response. A missed follow-up. A form that led nowhere.

Then it became something else. Requests for help: unanswered. Access to care: unclear. Support systems: conditional, fragmented, or out of reach.

Not denied outright…just not delivered in a way that actually worked.

And that’s the difference no one talks about. On paper, everything exists. But in reality, access is the system. And when access fails, the system fails.

The Invisible Gap

Dick wasn’t asking for special treatment. Jane wasn’t trying to break the rules. They were asking for something simple: help us navigate this while we’re not at our best.

But the system required:
- clarity when there was confusion
- mobility when there were limitations
- persistence when there was exhaustion

It expected peak performance…from people operating at their lowest point.

That’s not support. That’s survival with paperwork.

The Turning Point

Eventually, something shifts. Not out of anger, but out of realization: the responsibility has quietly moved.

From system → to individual.
From support → to self-navigation.
From care → to compliance.

And once you see it, you can’t unsee it.

The Birth of WAP

Workers Are a Priority (WAP) wasn’t created in theory. It was forged in that gap, the space between:
- what is promised
- and what is actually experienced

WAP asks a different question: What if the system adapted to the worker…instead of forcing the worker to adapt to the system?

What WAP Changes

WAP doesn’t start with process. It starts with reality.
- If someone can’t drive → access must come to them
- If someone is cognitively overloaded → communication must simplify
- If someone is asking for help → the system must respond, not redirect


🧠 The AI Thought Partner, What It Is and Why You Need One

Most people are still using AI like a tool. Ask a question. Get an answer. Move on. That is useful, but it is also limited.

One of the biggest advantages of AI is not just that it can produce content fast. It is that it can help people think better, decide faster, and work through ideas without getting stuck in their own head.

That is what an AI thought partner really is. Not just a machine that gives outputs. A partner that helps sharpen thinking.

This matters because a lot of modern work slows down long before execution. People get stuck trying to clarify ideas, organize messy thoughts, challenge assumptions, pressure-test decisions, or figure out the next best move. The bottleneck is often not effort. It is thinking friction. And that friction costs time.

That is where an AI thought partner becomes powerful. It can help turn vague ideas into clear direction. It can help break down a problem when everything feels too big. It can help generate options, compare angles, surface blind spots, and speed up decision-making. Not by replacing human judgment, but by accelerating the process of getting to better judgment.

That is the difference. Most people think of AI as a writing assistant, research helper, or productivity tool. And yes, it can do all of that. But the deeper value is in using it as a thinking companion, something that helps refine ideas before they become plans, content, offers, strategies, or decisions.

That is why this is urgent. The people getting the most from AI are not just asking it to do tasks. They are using it to improve the quality and speed of their thinking. They are bringing it rough ideas, half-formed plans, messy notes, questions they cannot quite articulate yet, and problems they need help untangling.

They are using AI to create clarity faster. And clarity changes everything. It reduces time-to-decision. It shortens time-to-first-draft. It lowers rework. It helps people move before overthinking turns into delay.


You’re basically being handed a setup to say, “yeah… and here’s what that actually looks like in real life.”

Here’s a response you can drop:

This is the part that changed everything for me.

Most people think AI saves time by doing things for you. What I’m seeing is it saves time by helping you get unstuck faster.

An AI thought partner isn’t just:
- “write this”
- “summarize that”

It’s:
- “this idea doesn’t feel right, why?”
- “what am I missing here?”
- “help me sort this mess out in my head”

That’s where the real value is. Because most delays don’t come from doing… They come from thinking friction.

And once that friction drops?
- decisions get faster
- momentum comes back
- execution actually happens

For me, it’s not about replacing thinking. It’s about having something there that helps keep thinking moving.

That’s the difference between using AI as a tool… …and using it as a partner.

This lands clean, agrees with them, but adds your lived angle without going heavy.


@jesse-hudson-4432

DJ and media creator using music, AI, and real-world experiments to explore healing, learning, and doing better—no theory, just practice.


Joined Apr 5, 2026

ENTP

Canada
