Memberships

The Energy Data Scientist

513 members • Free

Rebel Economist Challenge

1k members • $10,000/year

Rebel Economist (Free)

1.3k members • Free

1 contribution to The Energy Data Scientist

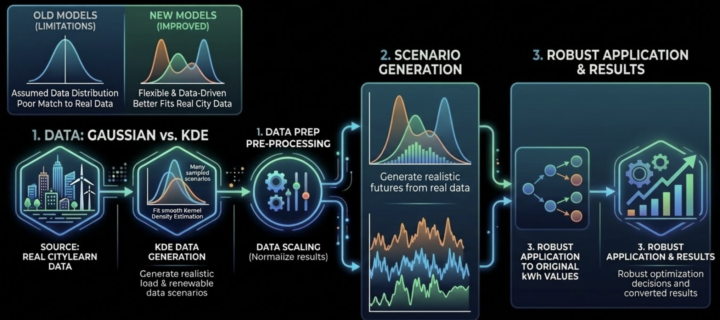

New Online Course: Kernel Density Estimation

A new online course has been published in Classroom: course 118, "Kernel Density Estimation".

Kernel Density Estimation (KDE) is a highly effective statistical method used in the energy sector. It estimates the probability distribution underlying an existing dataset. You can then generate new, realistic values that follow the same statistical patterns as the original data. This makes it ideal when you need to simulate many scenarios.

Specifically, in this course:
- we look at a smart building with uncertain electricity demand, using 8760 hourly values (one year of data);
- we simulate 1000 unique days whose demand differs from the historical days but still follows the "logic" of the original dataset;
- we walk through generating KDE-based data and using it to solve Monte Carlo and two-stage stochastic optimization models.

These methods are standard tools in the energy sector.

Best of all, this is a highly applied course. I show you exactly how I used these techniques in a real-world energy project, so you can move beyond academic textbook exercises and start applying them to actual problems.

The attached screenshots show the step-by-step process of how KDE is applied in industry. They also show the differences between KDE and non-KDE approaches: KDE is more realistic, while non-KDE approaches are easier to model but lack realism.
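The workflow described above can be sketched in standard-library Python. This is a minimal sketch under stated assumptions, not the course's implementation:

- The 8760 hourly values are replaced by a hypothetical synthetic year (`synthetic_year`).
- The KDE uses a Gaussian product kernel over 24-hour daily profiles, with Silverman's rule-of-thumb bandwidths.
- The two-stage model is an assumed toy problem. A day-ahead purchase `q` is fixed first, and any shortfall or surplus is settled afterwards at hypothetical prices `c_da`, `c_rt` and `c_sell`.

All function names are illustrative.

```python
import math
import random
import statistics

HOURS_PER_DAY = 24


def synthetic_year(rng):
    """HYPOTHETICAL stand-in for 8760 metered hourly demand values (kW)."""
    return [
        100.0 + 40.0 * math.sin(math.pi * ((h % HOURS_PER_DAY) - 6) / 12) + rng.gauss(0.0, 10.0)
        for h in range(8760)
    ]


def daily_profiles(hourly):
    """Split hourly values into daily 24-value profiles (8760 values -> 365 days)."""
    if len(hourly) % HOURS_PER_DAY:
        raise ValueError("number of values must be a multiple of 24")
    return [hourly[i:i + HOURS_PER_DAY] for i in range(0, len(hourly), HOURS_PER_DAY)]


def silverman_bandwidths(profiles):
    """Per-hour kernel widths from Silverman's multivariate rule of thumb (an assumed choice)."""
    n, d = len(profiles), len(profiles[0])
    factor = (4.0 / ((d + 2) * n)) ** (1.0 / (d + 4))
    return [factor * statistics.stdev([day[h] for day in profiles]) for h in range(d)]


def sample_kde_days(profiles, n_days, rng):
    """Draw synthetic days from a Gaussian product-kernel KDE over the daily profiles.

    Sampling from a KDE: pick one observed day (one kernel) uniformly at random,
    then add Gaussian noise with the per-hour bandwidth as standard deviation.
    """
    bandwidths = silverman_bandwidths(profiles)
    days = []
    for _ in range(n_days):
        base = rng.choice(profiles)
        # Clip at zero because demand cannot be negative (a small departure from a pure KDE).
        days.append([max(0.0, x + rng.gauss(0.0, bw)) for x, bw in zip(base, bandwidths)])
    return days


def expected_cost(q, totals, c_da, c_rt, c_sell):
    """Monte Carlo estimate: buy q day-ahead, then settle each scenario's imbalance."""
    recourse = [c_rt * max(d - q, 0.0) - c_sell * max(q - d, 0.0) for d in totals]
    return c_da * q + sum(recourse) / len(recourse)


def solve_two_stage(totals, c_da, c_rt, c_sell):
    """Sample-average approximation of the two-stage problem.

    With c_sell < c_da < c_rt the objective is convex and piecewise linear,
    with kinks at the scenario values, so the optimal q is one of them.
    """
    return min(totals, key=lambda q: expected_cost(q, totals, c_da, c_rt, c_sell))


rng = random.Random(42)
profiles = daily_profiles(synthetic_year(rng))    # 365 observed days
scenarios = sample_kde_days(profiles, 1000, rng)  # 1000 simulated days
totals = [sum(day) for day in scenarios]          # daily energy per scenario, kWh
prices = dict(c_da=0.10, c_rt=0.25, c_sell=0.02)  # HYPOTHETICAL prices per kWh
q_star = solve_two_stage(totals, **prices)
print(f"day-ahead purchase: {q_star:.0f} kWh")
print(f"expected cost at q*: {expected_cost(q_star, totals, **prices):.2f}")
print(f"expected cost buying the mean: {expected_cost(statistics.fmean(totals), totals, **prices):.2f}")
```

The sketch samples whole days rather than individual hours, which preserves the within-day shape and the correlation between hours. The toy recourse is piecewise linear, so checking each scenario value is enough to solve the sample-average problem. A model with network or storage constraints would typically be handed to an LP or MILP solver instead.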

What I like about KDE in this context is that it lets the data define the distribution rather than forcing a parametric shape. In energy and commodities that matters, because the underlying processes aren’t well-behaved: spikes, regime shifts, and structural breaks show up directly in the data.

One question I’ve been thinking about is how far we can push this idea beyond distributions into structure. KDE gives you realistic scenario generation at the level of observed variables (like prices or demand). But those variables are themselves projections of underlying systems: physical networks in energy, or balance-sheet networks in finance. So there’s an interesting next step:
- KDE for capturing the empirical distributions,
- combined with models that reconstruct the underlying states and flows generating those distributions.

That way you’re not just simulating realistic outcomes; you’re anchoring them to the structure that produces them.
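The point about letting the data define the distribution can be illustrated with a price series that is mostly calm but contains a few spikes. This sketch assumes made-up prices, a Gaussian kernel and Silverman's rule-of-thumb bandwidth. It compares the probability of exceeding a threshold under a fitted normal distribution and under a KDE. The function names are illustrative.

```python
import math
import statistics


def normal_tail(data, threshold):
    """P(X > threshold) under a normal distribution fitted by sample mean and stdev."""
    mu, sigma = statistics.fmean(data), statistics.stdev(data)
    return 0.5 * math.erfc((threshold - mu) / (sigma * math.sqrt(2.0)))


def silverman_bandwidth(data):
    """Silverman's rule of thumb for a 1-D Gaussian kernel (an assumed bandwidth choice)."""
    q1, _, q3 = statistics.quantiles(data, n=4)
    spread = min(statistics.stdev(data), (q3 - q1) / 1.34)
    return 0.9 * spread * len(data) ** (-0.2)


def kde_tail(data, threshold):
    """P(X > threshold) under a Gaussian KDE: the average tail mass of all kernels."""
    h = silverman_bandwidth(data)
    return statistics.fmean(
        [0.5 * math.erfc((threshold - x) / (h * math.sqrt(2.0))) for x in data]
    )


# HYPOTHETICAL prices: 95 calm hours around 50 plus 5 spikes at 200.
prices = [50.0 + (i % 10) - 4.5 for i in range(95)] + [200.0] * 5
threshold = 150.0
normal_p = normal_tail(prices, threshold)
kde_p = kde_tail(prices, threshold)
print(f"P(price > {threshold:.0f}): normal fit {normal_p:.4f}, KDE {kde_p:.4f}")
```

With these made-up numbers, the fitted normal smears the spikes into one wide bell and leaves only a fraction of a percent of probability above 150. The KDE keeps the 5% of spike observations close to where they actually occurred.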

@gary-young-1814

Rebuilding macro from double-entry conservation laws and Z.1 flow geometry: regimes, not equilibria.

Joined Mar 1, 2026