Activity

Mon

Wed

Fri

Sun

Jun

Jul

Aug

Sep

Oct

Nov

Dec

Jan

Feb

Mar

Apr

May

What is this?

Less

More

Memberships

Clief Notes

29.8k members • Free

123 contributions to Clief Notes

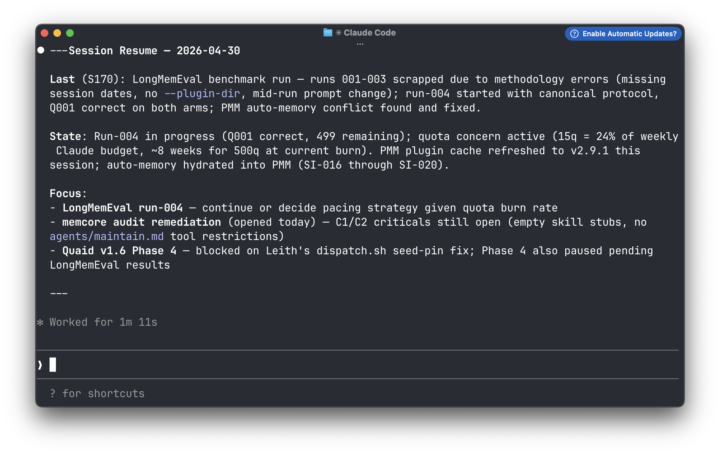

Helping your AI remember tasks between sessions (Session Aware Memory Management )

TLDR: LLMs forget everything between sessions. You re-explain yourself constantly, lose progress on long-running work, and have no reliable way to pick up where you left off — especially when switching between models or tools. I built PMM to fix that. @Deacon Wardlow helped me improve it by identifying a specific gap: session continuity — what changed, what’s still open, where you stopped. That’s now live in PMM v 2.8. Edit: I figured screenshots tell a better story => same memory on three different tools with 3 different models, where I've asked the LLM to basically recall outstanding tasks across the 4-5 current projects. Slightly different perspectives because I'm working a slightly different project with each model. I initially built PMM to remember stuff beyond the context window to fix an issue i had with the LLMs I use failing to remember and recall over long conversations. I used it to have the same conversation, while switching between Claude Code CLI and Co-work. Eventually it became a tool for helping me with continuity in my conversations with different LLMs on different apps and harnesses ( I switch a lot between Claude, OpenCode and GitHub Co-Pilot). I now use it to give multiple agents long-term memory (while they switch between different models on Claude, Gemini, Model Ark and a couple of smaller local models) without the use of model routing. I currently deploy it (along with with another agentic plugin I developed) to give agents long-term individual and collective organisaitonal memory in their conversations with multiple users over telegram in a small pilot. @Deacon Wardlow tested PMM in his own workflows, identified a gap in session memory management, and tried a couple of changes, which he outlined in another thread discussing Session Memory Layer + PMM

0 likes • 11d

@Justin Solomon sure. That works. But a context window is anything between 200K - 500K for most models and 1M on frontier models. What happens when you have a conversation history larger than context can hold? That’s when you see notices about compression and compaction. The longer that conversation and the more compression (summarising actually) happens, the more the quality of the conversation degrades. I preferred a system where the system can compact or clear context as needed while my LLM remembers and retains knowledge (not just facts) and tracks as they evolve. Couldn’t find one. So, I built one. And if anyone wanted to, the plugin can take whatever existing conversation you have from context or other files and hydrate it into memory. I’ve been using it to take stuff from older memory systems or transcripts to selectively hydrate into new memory files for new agents that perform specific tasks more effectively based on past lessons.

0 likes • 15h

@Pam Pam start small, aim small. What have you been trying to solve for recently that you could develop the smallest, simplest version of a solution for? Building in public means sharing successess and failures. You have an entire community of builders here who are more than eager to prompt you (pun intended) and nudge you in the appropriate direction.

Think like an engineer, not a hack!

Just had a perfect reminder while building out a client workflow. Yeah, you could throw an LLM at generating a thumbnail for a PDF. Or... a little bash command: "magick bulletin-050326.pdf[0] -background white -alpha remove -alpha off bulletin-050326-tb.png" One line. One battle-tested tool. Instant result. This is the difference between hacking around with AI and actually shipping like an engineer. The Unix/Linux world is full of these quiet, rock-solid one-liners that have been refined for decades. ffmpeg, pdftk, exiftool, jq, ripgrep, parallel... the list goes on. Before you reach for the shiny new wrapper, ask yourself: what's the battle-tested command line way?(Pro tip: ask Claude or Grok to show you the classics. You'll be amazed how often the 20-year-old tool still wins.) Simple. Reliable. Fast. That's engineering. 🚀

3 likes • 16h

LLMs are way smarter today. Not sure if any LLMs natively generates thumbnails from pdfs without actually making any tool calls. But the approach is spot on = reducing the surface area where LLM involvement is required, have the LLM build you the scripts that will mechanically get stuff done: resizing images, generating thumbnails, generating html from markdown files. Then, either call the scripts yourself or build a skill that calls these scripts.

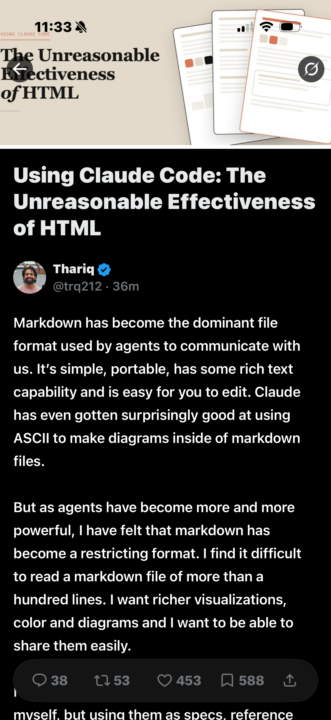

HTML OVER .md Files

A Claude code Eng just dropped this. Anyone been doing this switch yet or will try? Curious to see what @Jake Van Clief has to say. It’s a file still just a different format. Same thing right… thoughts? Pros and cons? Here to learn and discover https://x.com/trq212/status/2052809885763747935?s=46&t=Ayzo8Ebbgb8PZhLNm057bg

2 likes • 1d

It's a question of signal over noise actually. In simple declarations markdowns consumes fewer tokens thsan XML (or whatever ML have you), it requires fewer words to say "Bob ate cheese" than <entity name="Bob"/>ate cheese. XML however, provides a richer surface for storing context that is readily and easily understood by both humans and machines. From the perspective of an LLM reading context from a file inside the harness, it's easier go grep <tag1>.*<tag2>?(.+)</tag2>?</tag1> than to read by chunks in a large markdown. There is no one-size-fits-all rule for whether XML or Markdowns are better, and it depends on what the implementation requires. Small markdowns and simple context => markdowns generally perform better. Rich constructs or long file entries (logs, append-only file stores etc) => XML. You may use XML on one type of file, and choose markdown over another. It's about picking the construct best suited to achieve highest signal over noise at a reasonably low cost.

The struggle

I’m struggling with the concept that I’m not in myself running a business. I sit on the other end of the table, imagine I’m the municipality that orders construction drawings, rules for quality and much more, and I send out for tenders. It’s almost the reverse of a business but not at the same time. I apply for permits from the government, from environmental to building permits, and I hire companies to do the work. So when I’m looking at making my setups in section 3, it seems much more difficult to map my needs. Being that enough of my work can be deemed “sensitive” I will need to be selective in what an AI is able to read and get its hands on. So I’m getting really stuck. I can see how making letters to the public or checklists for quality control can be “automated” but that doesn’t save much time really. Most of those templates are easy to come by.

2 likes • 2d

Who benefits from what you do will give you some guidance on how to proceed. Municipalities serve a particular clientele, either the citizens or the businesses that rely on those permits. You may not be charging for the services you provide, but there’s definitely an upstream (supply) aspect and a downstream (consumer) aspect to the chain.

I'm dumb. Here's proof.

I was today years old when I realized I did not have some of the most important files that you need in the folder structure that Jake teaches. During today's video call with the VIP group, he went on a deep-dive rabbit trail about the ICM folder methodology that he teaches in his foundations course (free). As he was discussing it, I went to check what my root folder looked like and I did not have a Claude.md or context.md file!!! My productivity skyrocketed ever since I implemented his folder strategy over a month ago, but little did I know that I hadn't even implemented it correctly. 🤯 🤯 🤯 This goes to show that massive action beats over planning every time!

1 like • 2d

This is the key thing about working with LLMs that you’ve stumbled on. It worked even without the Claude.md instructions because there was sufficient contextual clues from everything else you’ve created in the structure to guide the AI on what you needed done. Shows two things: Claude.md gives the top level guidance on what the workspace or project is for. And the instructions in the different task folders provided procedural guidance on how each task is to be carried out. You need both.

1-10 of 123

Active 1h ago

Joined Mar 24, 2026

ENTJ

Powered by