🚨 Hidden LLM Issue That Can Break Your AI SaaS

While auditing a voice-driven AI eCommerce system, I discovered something alarming:

👉 A single request consumed 37K+ tokens, well beyond the limits.

⚠️ The issue wasn't the user input. It was poor architecture:
• Full MongoDB documents returned in tool responses
• The entire conversation history sent with every request
• Somewhat heavy system prompts

Result? Token usage exploded → costs increased → the system became unstable.

🛠️ What fixed it:
• Limiting tool responses to essential fields (max 35 records)
• Smarter actions (combining steps into single calls)
• Context control (last 8 messages for chat, 5 for agent tasks)
• Smaller prompts
• Proper error handling for TPM (tokens-per-minute) limits and HTTP 413 (payload too large) errors

📉 Outcome:
• Controlled token usage
• Stable performance
• Predictable billing
• A production-ready system

💡 Lesson: LLMs don't become expensive by default; bad architecture makes them expensive.

If you're building AI systems, focus on:
→ Context
→ Data flow
→ Token control

Curious: have you faced similar scaling issues in your AI apps?
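
A minimal Python sketch of three of the fixes above, under stated assumptions: tool results are projected down to a whitelist of fields and capped at 35 records, only the last 8 (chat) or 5 (agent) messages are sent, and when the provider rejects a request as too large (HTTP 413) the retained history is halved and the call retried. The field names, the `send` callable and the `PayloadTooLarge` exception are hypothetical stand-ins for your own LLM client wrapper; no specific LLM SDK is assumed.

```python
from typing import Any, Callable, Dict, List

Message = Dict[str, str]

# Limits quoted in the post; treat them as starting points, not universal values.
MAX_TOOL_RECORDS = 35
HISTORY_LIMITS = {"chat": 8, "agent": 5}


class PayloadTooLarge(Exception):
    """Hypothetical error your own LLM client wrapper raises on an HTTP 413 response."""


def project_records(docs: List[Dict[str, Any]], fields: List[str],
                    limit: int = MAX_TOOL_RECORDS) -> List[Dict[str, Any]]:
    """Return at most `limit` records, each reduced to the whitelisted fields."""
    return [{f: doc[f] for f in fields if f in doc} for doc in docs[:limit]]


def build_messages(system_prompt: str, history: List[Message], keep: int) -> List[Message]:
    """System prompt followed by only the `keep` most recent history messages."""
    recent = history[-keep:] if keep > 0 else []  # history[-0:] would return everything
    return [{"role": "system", "content": system_prompt}, *recent]


def call_with_context_control(send: Callable[[List[Message]], str], system_prompt: str,
                              history: List[Message], mode: str, min_keep: int = 1) -> str:
    """Send a trimmed context; on a 413, halve the retained history and retry."""
    keep = HISTORY_LIMITS[mode]
    while True:
        try:
            return send(build_messages(system_prompt, history, keep))
        except PayloadTooLarge:
            if keep <= min_keep:
                raise  # cannot shrink any further; surface the error to the caller
            keep = max(min_keep, keep // 2)
```

With pymongo, the projection and the cap can be pushed into the query itself, e.g. `collection.find(query, {"name": 1, "price": 1}).limit(35)`, so full documents never leave the database. TPM limits typically surface as HTTP 429 rather than 413, and those are usually better handled by waiting and retrying with backoff than by shrinking the context.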

Building a Microservices App with Laravel 11

I just built a microservices product using Laravel 11. Check out the documentation and GitHub repo here: https://www.linkedin.com/posts/aldols_microservices-laravel11-production-ready-aldo-latasobapdf-activity-7444814808249495552-KhxD?utm_source=share&utm_medium=member_desktop&rcm=ACoAACj4A_EBR8YqKvo2lSvQqDLY8_pHcBYH8_I

Senior Full Stack and AI Engineer

Hello, I'm a software engineer. My primary focus is website and mobile app development. I extensively use React/Next, NestJS, Python/Django, React Native, Flutter, and C++. Feel free to contact me anytime for full-stack, AI, or Web3-related development.

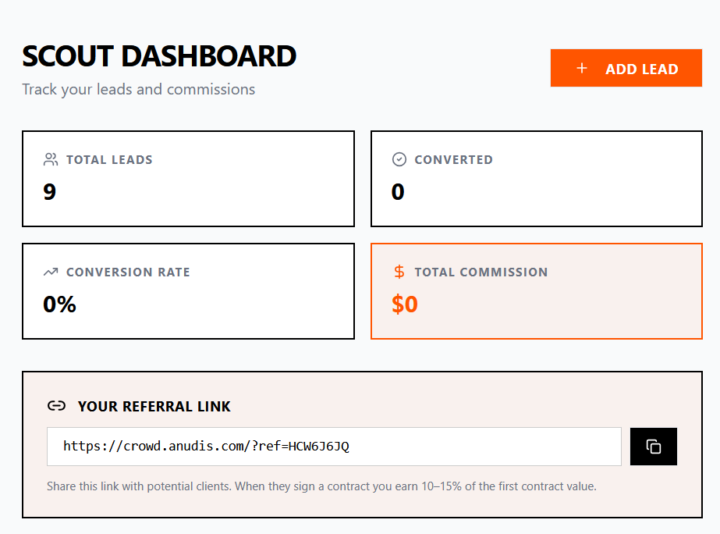

Scratching my own itch!

I started using the system I'm building as if I were a beginner, and I found issues right from the start. If anyone is interested, you can join here: https://crowd.anudis.com/

skool.com/citizen-developer-7163

This is a vibecoding community where we build, learn, and ship through momentum and real-world experimentation. The fastest way to grow isn't perfection.