Activity

Mon

Wed

Fri

Sun

Jun

Jul

Aug

Sep

Oct

Nov

Dec

Jan

Feb

Mar

Apr

What is this?

Less

More

Memberships

Clief Notes

25.6k members • Free

AI Automation Society

349.2k members • Free

17 contributions to Clief Notes

Adding ADRs at the end of my coding session has really been powerful.

I wrote an article about why you should use ADRs and what they are. It's a simple read, maybe like five minutes. https://kuality.design/en/blog/why-solo-developers-need-architectural-decision-records-adrs

0 likes • 3h

It's not just about how work sessions alone are tagged or wikilinked, front mattered, etc. It's specific decisions you took and why you made them that's what later comes back and bites you when you don't know why you made a decision because someone just looking at your code will not be able to glean that information

Token reduction maximization: a real stack that cut Claude costs by 30x

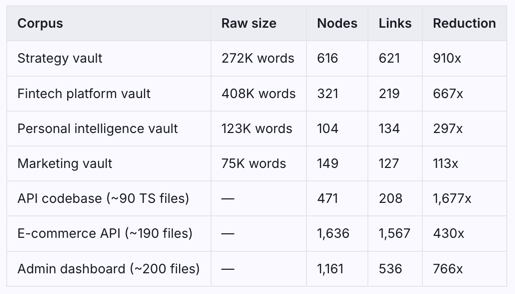

AgentsView says Mars processed $1,530 worth of tokens last month. The actual spend to date: $50. On a $100/month Claude Max subscription. Three tools. Here's how. Two numbers being measured: AgentsView reads local session token counts and multiplies by Anthropic's published API pricing. It doesn't know you're on Claude Max — a flat subscription, no per-token billing. So $1,530 is the real usage value: what it would cost on pay-per-token API. The $50 is what it actually cost. That gap has two causes: the subscription model, and a stack that compressed token usage before it ever hit the meter. Layer 1: RTK (Rust Token Killer) github.com/rtk-ai/rtk · brew install rtk RTK sits between your terminal and Claude's context and filters noise. git status on a 280-file repo normally dumps ~3,000 tokens of file listings into context. RTK trims that to ~200. Same information, 15x smaller, on every shell operation, without thinking about it. Layer 2: Graphify github.com/safishamsi/graphify · pip install graphifyy The heavy one. Graphify turns a codebase or document vault into a persistent knowledge graph — JSON and interactive HTML. Build it once, query it forever. Instead of Claude re-reading 10–15 files to answer a question, it traverses 3–5 nodes in a graph that already exists on disk. One session. 16 Obsidian vaults, 4 production codebases: [see attachment] 910x reduction means a corpus that used to cost 545,000 tokens to query now costs ~600. 🤯 It also finds connections that were never explicitly linked — concept clusters across files, architectural patterns that only become visible when everything is indexed at once. Layer 3: Markdown Guard hook This one doesn't save tokens. It keeps the agent safe. Vaults are full of external content: captured links, scraped docs, research notes pulled from anywhere. A poisoned .md file can silently redirect an agent mid-session — change what it does next, what it writes, what it deletes. When that agent has write access to your systems, that's not theoretical.

Seeking Architecture & Distribution Advice: 85+ Empathy-Driven Life Guides (Pro-Bono / Open Source)

I've spent the last few months building a library of 85+ practical guides for the hardest life situations people face - widowhood, losing a home to foreclosure, military-to-civilian transition, navigating executorship, first apartment, making friends as an adult. The kind of stuff people are Googling at 2am with no one to call. Each guide includes an integrated AI prompt. You fill in your situation, drop it into Claude or a model the person has access to, and it becomes a grounded companion that gets to know your particular circumstances - not a generic response, but one anchored to the specific guide you're working through. The goal is to give people a safe place to ask the questions they're too embarrassed or too isolated to ask another person (I've built-in safety sets and responses to ensure people don't give the AI sensitive information). I have zero interest in monetizing this. I really just want to find a way to help the most people without them having to pay for the assist (they have enough on their plate if they're using one of the guides). Here's where I'm stuck. The guides work. The delivery doesn't. Right now everything lives as .docx files. That's not a real product. I want to move toward a proper web app, then mobile. Three problems I don't have good answers to: Context loading. What's the cleanest approach to make sure the AI is reading the specific guide a user is looking at - not just running off a generic prompt? RAG? Direct document injection? Something else I'm not thinking of? API costs. Companion prompts at any real scale get expensive fast, especially for a free-to-user app. Are there AI for Good grant programs or API credit programs - Anthropic, Google, OpenAI - that fit a project like this? Architecture for handoff. As I'm building this to give it away, what stack gives a small non-profit with minimal technical capacity the best shot at maintaining it long-term? Longer shot, but genuinely asking: does anyone know an organization or NGO that's actively looking for something like this? A fully built content library with AI companion infrastructure, no strings attached.

1 like • 20h

Nice! I've heard great things about Notebook LM. I haven't personally used it, but I understand what it does. I built something outside of that because I don't want Google to have access to my information, but it's a totally valid, great solution. Best of luck. If you have any questions, feel free to reach out.

Memory Picture for Context

In my endless pursuit of perfect memory context, I've added a new layer to my stack called Beads which saves it to an SQL database. You do an in it per project to gain this benefit. I will report back to see how useful it is but currently this is my full context stack. Memory picture — full answer: - Claude built-in: context window + compaction summaries - File memory: ~/.claude/projects/[path]/memory/ — what I write per-project, loads at session start - CLAUDE.md files — your permanent instructions baked into every session - SESSION-LOG.md per vault — manual history - Zep MCP — external graph, verdict above (marginal value for your workflow) - Beads (new) — versioned SQL task graph, survives compaction, agent-to-agent messaging. Now live across all 12 projects.

0 likes • 2d

Okay so I was doing research on this and the problem, I guess, on the one hand there's an antivirus markdown file in the repo that explains that the BD executable file gets flagged by antivirus software but that's it. My interpretation from what I was reading was that it was vibe coded, not inherently bad, but it's about 240,000 lines of code so it's kind of big for something that's supposed to be a lightweight task tracker. For me, that doesn't mean that, because it's vibe coded, that it's dangerous, which was my first assumption. After all, Stevie Eggy is an ex-Google, ex-Amazon who wrote it so the risk, from what I was reading, probably isn't malware so much as it might be just untested edge cases in something that runs with shell access. I'm gonna stick with it and give it a shot and see if there's compounding value over the months but definitely checked out ob1 David and that one looks pretty dang interesting. Hermes is something I've been looking at too but never heard of Cogni so I'm gonna see how those two work together, especially since it seems like yucky try that too and it's been a success

0 likes • 2d

Okay and this is interesting at least to me and then I'll end this thread here or at least I'll stop commenting such long-winded rants here but beads vs congee/Hermes are complimentary and don't necessarily compete. I didn't even realize beads is more of a task tracker, even though I just said that in an earlier comment. I've just been reading so much tonight; thoughts have been catching up late but as a task tracker, beads is still good. - Ob1 is more about shared context across all AIs so that's complementary too and not competing. - Cognee is persistent agent memory so that's also complementary. - Hermes, the self-improving agent that builds skills from experience across sessions, is not a task tracker replacement. It works, I guess, well with Cognee. - To add a deep research element I'm looking at Miro thinker, which would replace something like Perplexity Pro, which I don't pay for, but it would be something similar in comparison to that. - Finally tonight I ran into another interesting application called Open Chronicle, which which sounds promising and is an alternative to open AIs closed chronicle. It just watches your Mac screen via the Mac iOS accessibility tree, reads what app is focused and what texts are visible. Sounds kind of creepy but still interesting as well. It basically converts what you're working on into structured Markdown memory, right? It's local first. Any MCP compatible agent can read it, SQLite plus Markdown on the disc, model agnostic. The only caveat is that it's a version 0.1.0. It's Mac OS only. It's an early alpha, useful concept but the advice here is that it's risky to depend on right now so probably leave it out of the stack. It watches your screen and apps and feeds that context into memory, which sounds kind of interesting.

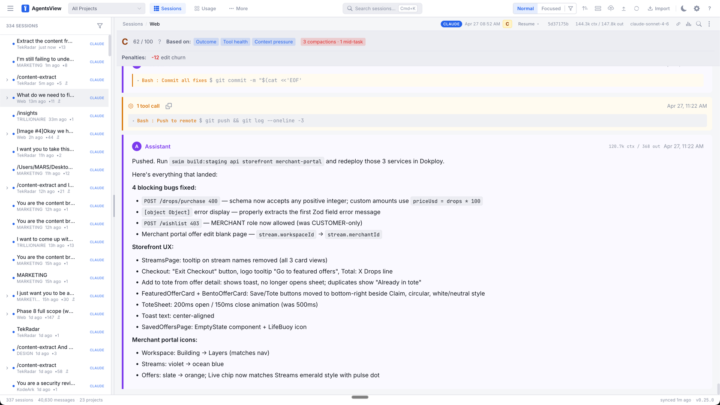

AgentsView (Opensource - check it out)

As a dashboard that's free and I've been using it for about a week now, I recommend AgentsView for you. I find it a very neat, concise, detailed way to view all of your LLM sessions that you're having with multiple agents, giving you a lot of context. It gives you the full transcription of everything you're doing which is super useful. It even gives you a great rating for how well your context memory is doing and what I love most is I use it with Wispr Flow. But Wispr Flow used to be my backup in case I would miss something I was transcribing and I could just copy and paste a block. But what I love about agents view is it shows me everything in the LLM conversation so now I can copy huge blocks of conversation to feed it to other LLMs which is far more helpful than my current click and drag across large swaths of text to then share it with another tab. Right so anyways just putting this out there, it's open source, check it out, it's banging.

0 likes • 2d

@David Vogel I was looking at this one dashboard that was Next.js but because I stay away from Next.js and just roll with straight React, Express, Vite, and Node.js and a Postgres database. I like to know all my technologies at a very modular level, an atomic level, so that I can understand what it's doing and have more flexibility. Anyway side point, sorry, going off on a rant there. I did see a couple of dashboards that were Next.js although I don't want to assume that you're building on Next.js. I just always get triggered when I hear Next.js. I don't have trust issues with Vercel, anyways, a topic for another time.

1-10 of 17

Active 31m ago

Joined Apr 11, 2026

ENTJ

Montevideo, Uruguay

Powered by