

66 contributions to AI Automation Society

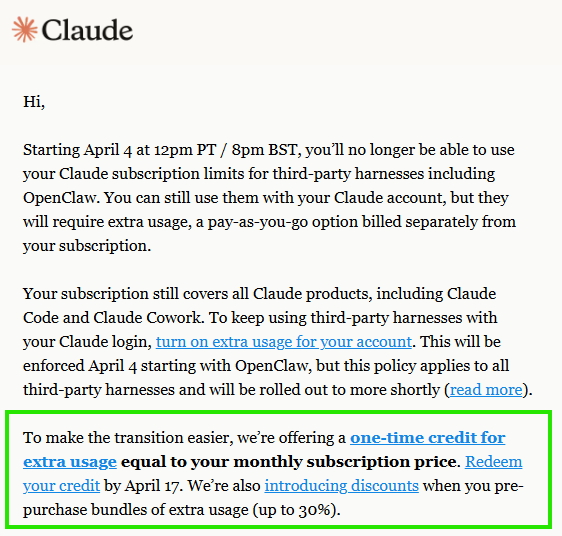

Claude Code just got computer use in the CLI - here's the architecture detail nobody's explaining

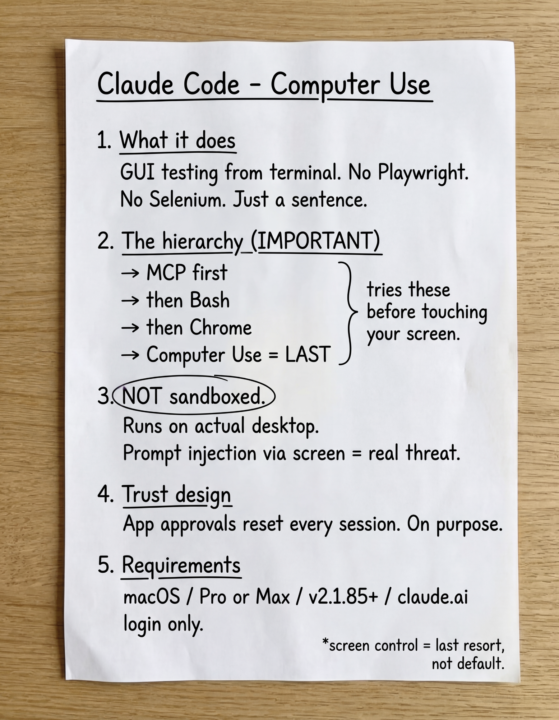

Most posts about this are just "wow, Claude can control your mouse now." That's not the interesting part.

The interesting part is the fallback hierarchy. Claude tries MCP servers first, then Bash, then Claude in Chrome. Screen control only activates when none of those three can reach the task. That ordering tells you exactly when to use it: as a last resort for work the other tools cannot do.

The security model is also different from what people assume. Unlike the sandboxed Bash tool, computer use runs on your actual desktop with no filesystem isolation. On-screen prompt injection is a real threat vector, not a hypothetical one.

Two more things worth knowing:
- App approvals reset every single session. That is intentional trust architecture, not a UX bug.
- Your terminal window is excluded from Claude's screenshots the entire time, so Claude cannot see its own output while controlling your screen.

Hard requirements if you want to try it: macOS only, Pro or Max plan, CLI v2.1.85+, and you must authenticate through claude.ai directly.

Drop any questions below. Happy to go deeper on any part of this.
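To make the ordering concrete, here is a minimal Python sketch of a first-success fallback chain. It is not Claude Code's implementation: the layer names, the prefix-matching stubs, and `route_task` are all hypothetical. The only thing taken from the post is the order: MCP, then Bash, then Claude in Chrome, then screen control as the catch-all.

```python
from typing import Callable, Optional

# A layer returns a result if it can reach the task, or None to pass it on.
Handler = Callable[[str], Optional[str]]


def route_task(task: str, layers: list[tuple[str, Handler]]) -> tuple[str, str]:
    """Try each layer in priority order; return (layer_name, result) from the first success."""
    for name, handler in layers:
        result = handler(task)
        if result is not None:
            return name, result
    raise RuntimeError(f"no layer could reach task: {task!r}")


# Hypothetical stubs: real layers would check which MCP servers, shell commands,
# or browser pages can actually serve the task.
def mcp_layer(task: str) -> Optional[str]:
    return "done via MCP server" if task.startswith("mcp:") else None


def bash_layer(task: str) -> Optional[str]:
    return "done via Bash" if task.startswith("shell:") else None


def chrome_layer(task: str) -> Optional[str]:
    return "done via Claude in Chrome" if task.startswith("web:") else None


def screen_layer(task: str) -> Optional[str]:
    # Last resort: screen control on the real desktop, with no filesystem isolation.
    return "done via screen control"


LAYERS: list[tuple[str, Handler]] = [
    ("mcp", mcp_layer),
    ("bash", bash_layer),
    ("chrome", chrome_layer),
    ("computer_use", screen_layer),
]

print(route_task("shell:git status", LAYERS))      # ('bash', 'done via Bash')
print(route_task("open the Preview app", LAYERS))  # ('computer_use', 'done via screen control')
```

Putting screen control last means the least-isolated tool only runs after every narrower option has declined the task.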

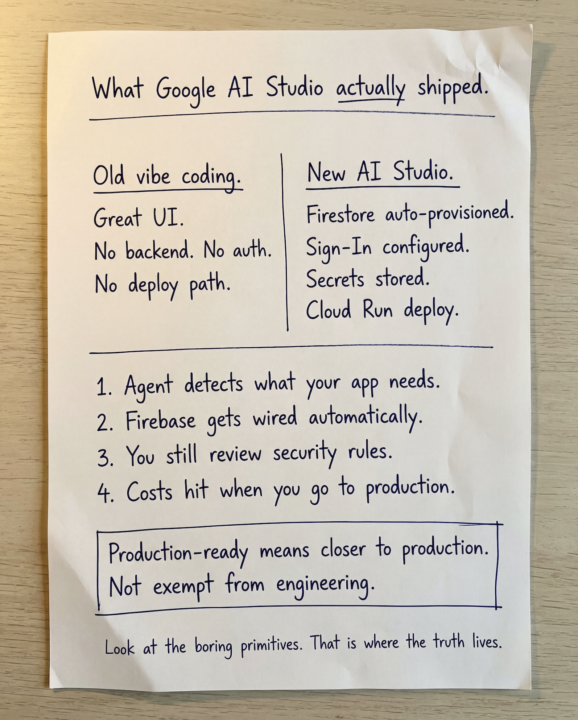

The "Vibe Coding" Honeymoon is Over (Here is the New Baseline)

Let’s get real about the latest Google AI Studio update. I spent time tearing down the actual mechanics of what they just released, and 90% of the timeline is completely missing the point by focusing on "better prompting."

Here is the actual strategic shift: Google is officially closing the gap between generating a pretty UI and deploying a usable app stack. AI Studio is no longer just a toy for styled components. It now provisions the boring primitives, like Firestore, Google Sign-in, secrets handling, and direct deployments to Cloud Run, right from the browser. Meanwhile, Google is clearly positioning Antigravity for heavier, deeper agent workflows.

The new baseline is a functional, full-stack prototype in minutes. If you are just generating React components and calling it a day, you are already behind.

But a word of warning for smart builders: do not fall for the "production-ready" marketing trap. AI gets you velocity, but you still have to be the engineer. You still have to review security rules, validate auth flows, and manage cloud costs. Use the speed. Do not outsource your judgment.

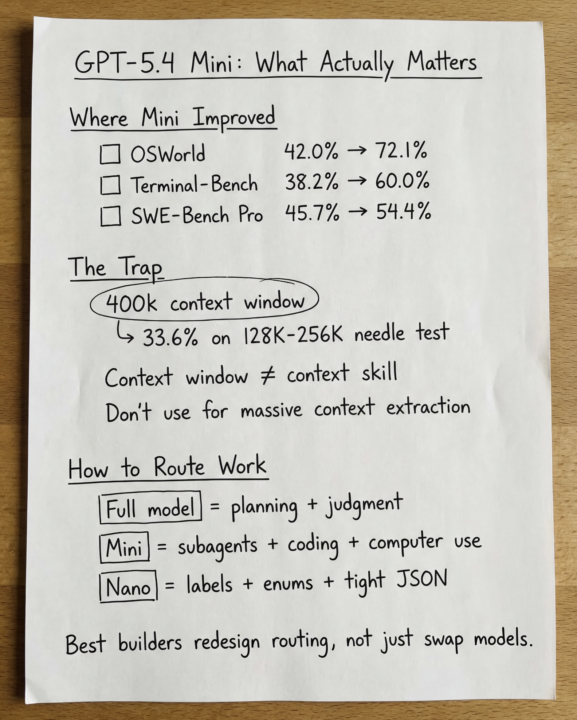

GPT-5.4 Mini and Nano just dropped

Everyone in the AI space is talking about the new small models from OpenAI. Most people are reading the marketing. I read the benchmarks.

Here is what actually changed for Mini:
- OSWorld-Verified went from 42% to 72.1%.
- Terminal-Bench jumped from 38.2% to 60%.
- SWE-Bench Pro moved from 45.7% to 54.4%.

That is not a minor upgrade. It changes where you draw the line between your main model and your worker model.

Now here is the part most people will miss: the 400K context window is a trap. Mini scores 33.6% on the 128K-to-256K long-context needle test. Stuff 200,000 tokens of logs into it and it fails roughly 66% of the time. Context window and context skill are not the same thing.

The routing decision is straightforward once you see it clearly:
- Full model: planning, ambiguity, and final judgment.
- Mini: parallel subagents, tool calls, and screenshot-heavy workflows.
- Nano: classification, extraction, ranking, and tight JSON tasks only.

One hard rule: do not put Nano anywhere near UI navigation or computer use. It scores 39% on OSWorld, while Mini scores 72.1%.

The builders who win here will not just swap models. They will redesign routing. I'd love to hear how others in this community are thinking about model tiering in their agentic stacks. Drop your current setup below.
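A minimal sketch of that tiering as a routing table in Python. The tier labels, task-kind strings, and `route` function are hypothetical (they are not OpenAI model IDs or API parameters), and the 128K cutoff for Mini is an assumption derived from the 33.6% needle-test score quoted above (1 - 0.336 is roughly a 66% failure rate).

```python
from enum import Enum


class Tier(str, Enum):
    FULL = "full"  # planning, ambiguity, final judgment
    MINI = "mini"  # parallel subagents, tool calls, screenshot-heavy workflows
    NANO = "nano"  # classification, extraction, ranking, tight JSON tasks


# Hypothetical task kinds; map these to your own task taxonomy.
ROUTES: dict[str, Tier] = {
    "planning": Tier.FULL,
    "ambiguity": Tier.FULL,
    "final_judgment": Tier.FULL,
    "subagent": Tier.MINI,
    "tool_call": Tier.MINI,
    "screenshot": Tier.MINI,
    "ui_navigation": Tier.MINI,
    "computer_use": Tier.MINI,
    "classification": Tier.NANO,
    "extraction": Tier.NANO,
    "ranking": Tier.NANO,
    "json": Tier.NANO,
}

# Hard rule from the post: Nano never touches UI navigation or computer use.
NANO_FORBIDDEN = {"ui_navigation", "computer_use"}
assert all(ROUTES[kind] is not Tier.NANO for kind in NANO_FORBIDDEN)

# Assumed cutoff: Mini's 33.6% needle-test score covers the 128K-256K band,
# so inputs above 128K tokens are treated as unsafe for Mini.
MINI_MAX_INPUT_TOKENS = 128_000


def route(kind: str, input_tokens: int = 0) -> Tier:
    """Pick a model tier for a task; unknown kinds fall back to the full model."""
    tier = ROUTES.get(kind, Tier.FULL)
    if tier is Tier.MINI and input_tokens > MINI_MAX_INPUT_TOKENS:
        # A big context window is not the same as long-context skill:
        # chunk or summarize first instead of stuffing the window.
        raise ValueError(f"{input_tokens} tokens is too long for Mini; chunk or summarize first")
    return tier


print(route("classification").value)  # nano
print(route("ui_navigation").value)   # mini
print(route("something_new").value)   # full
```

Defaulting unknown work to the full model is a deliberate choice in this sketch: ambiguity is exactly the case the post assigns to the full model.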

Why Codex Subagents Actually Matter

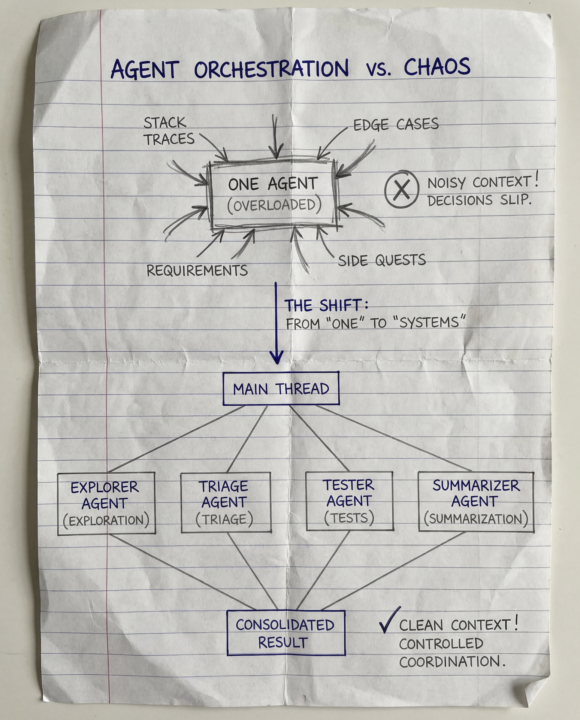

One of the most useful ideas in the new Codex subagents feature is not “more agents.” It is cleaner thinking.

A lot of people assume the main bottleneck with coding agents is speed. In practice, the bigger problem is usually noise. Stack traces, test logs, edge cases, exploration notes, and random side quests all get dumped into one thread. As a result, decision quality starts slipping long before the model feels unusable.

That is why Codex subagents stood out to me. The design is not really about flashy parallelism. It is about keeping the main thread focused on requirements, decisions, and final output while pushing noisy intermediate work into smaller, scoped agent threads. That is also why this feels more like a systems design improvement than a prompting trick.

A strong starting point is to use subagents for read-heavy tasks like exploration, tests, triage, and summarization. That gives you the upside of parallel work without turning your workflow into a coordination mess.

The warning matters too: if you use multiple agents to write code at the same time, things can get chaotic fast. More agents do not fix fuzzy thinking. They just create confusion faster when the task itself is unclear.

The bigger shift here is that we are moving from one smart assistant to small systems of agents with clear roles, scoped context, and tighter coordination. That is where a lot of practical leverage is going to come from.
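A minimal Python sketch of the pattern described above: read-only subagents run in parallel over noisy output, and only short, capped summaries reach the main thread. This is not how Codex implements subagents; `MainThread`, `run_subagent`, `fan_out`, and the keyword-based summarizer are hypothetical stand-ins for a real model call.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass, field


@dataclass
class MainThread:
    """The focused thread: requirements, decisions, and short subagent summaries only."""
    requirements: list[str]
    decisions: list[str] = field(default_factory=list)
    notes: list[str] = field(default_factory=list)


def run_subagent(task: str, raw_output: str, max_chars: int = 80) -> str:
    """Hypothetical read-only subagent: digests noisy output and returns a capped summary.

    A keyword scan stands in for a real model call here.
    """
    lines = [ln for ln in raw_output.splitlines() if ln.strip()]
    flagged = [ln for ln in lines if "FAIL" in ln or "Error" in ln]
    summary = f"{task}: {len(lines)} lines, {len(flagged)} flagged"
    if flagged:
        summary += f"; first: {flagged[0]}"
    return summary[:max_chars]


def fan_out(main: MainThread, jobs: dict[str, str]) -> MainThread:
    """Run read-heavy jobs in parallel; only their summaries enter the main thread."""
    with ThreadPoolExecutor(max_workers=4) as pool:
        futures = {task: pool.submit(run_subagent, task, raw) for task, raw in jobs.items()}
        for future in futures.values():
            main.notes.append(future.result())
    return main


main = fan_out(
    MainThread(requirements=["fix the login timeout"]),
    {
        "tests": "test_a PASS\ntest_b FAIL: timeout\n",
        "logs": "INFO start\nError: db connection refused\nINFO retry\n",
    },
)
for note in main.notes:
    print(note)
```

Capping each summary is the point: the main thread's context grows by one short line per subagent instead of by the full logs, and none of the subagents write code.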


1 like • Mar 16

This is the LinkedIn post where I broke this down in more detail: https://www.linkedin.com/posts/karthikeyan-rajendran07_ai-codingagents-developertools-activity-7439439510448386048-KS_7

If you found it useful, I’d really appreciate your support there with a like, comment, and repost.



@karthikeyan-r-5062

I'm a software engineer by profession, based in India. I'm trying to learn more about AI automation and its uses.


Joined Dec 7, 2024
