Memberships

AI Agent Automation Lab

59 members • $9/m

6 contributions to AI Agent Automation Lab

Best Practices for Secure, Per-User Data Access in a Chatbot (n8n & Airtable)

Hi everyone,

I'm developing a chatbot using n8n for the backend logic, which needs to retrieve client-specific information from our Airtable base. My core requirement is that when a client interacts with the chatbot, they can access only their own data and no one else's. Access will be determined by a unique client identifier (similar to a business registration number or SIRET), which will be used to filter all data queries in Airtable.

I'm looking for advice on the most secure and robust strategies or architectural patterns to implement this kind of granular, per-user data segregation. Specifically, with n8n and Airtable in mind, what are the recommended best practices for:

- Securely authenticating the client via the chatbot interface?
- Ensuring that all n8n workflows making calls to Airtable strictly filter data based on the authenticated client's unique ID?
- Effectively handling authorization within n8n to prevent any unauthorized data access to Airtable records?

Thanks!
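One possible way to picture the filtering requirement is sketched below in Python (which could, for example, run in an n8n Code node or a small service in front of it). It is only an illustration under stated assumptions: the `SESSIONS` lookup, the `ClientID` field name, and the 14-digit ID format are hypothetical and not taken from the post. The idea is that the client ID is looked up from a server-side session rather than read from the chat message, checked against a strict allow-list pattern, and only then placed into the `filterByFormula` query parameter of the Airtable list-records request.

```python
import re

# Hypothetical session store: maps a verified session token to a client ID.
# In practice this would be filled in after the client authenticates.
SESSIONS = {"token-abc": "12345678901234"}

# Assumption: client IDs are exactly 14 digits (SIRET-like).
CLIENT_ID_PATTERN = re.compile(r"\d{14}")


def client_id_for_session(token: str) -> str:
    """Resolve the client ID on the server side; never trust an ID typed in chat."""
    client_id = SESSIONS.get(token)
    if client_id is None:
        raise PermissionError("unknown or expired session")
    if not CLIENT_ID_PATTERN.fullmatch(client_id):
        raise ValueError("malformed client ID")
    return client_id


def airtable_params(token: str, field: str = "ClientID") -> dict:
    """Build query parameters that restrict an Airtable query to one client.

    `field` is a hypothetical column name holding the client identifier.
    """
    client_id = client_id_for_session(token)
    # The ID has been validated as digits only, so it cannot break out of the
    # quoted string in the formula.
    return {"filterByFormula": f"{{{field}}} = '{client_id}'"}
```

With this shape, every workflow that reads from Airtable would go through `airtable_params`, so there is a single place where the per-client filter is applied and a request without a valid session fails before any query is made.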

n8n & Supabase/pgvector: Date Range Filter on Metadata for Vector Search?

Hi all,

I'm working within an n8n workflow, using the "Supabase Vector Store" node to perform semantic searches against a Supabase/pgvector database. My vector store setup follows the standard SQL provided in the Supabase documentation: the basic documents table (with a metadata JSONB column) and the match_documents function that uses the pgvector extension. Document dates are stored within the metadata column (e.g., {"date": "YYYY-MM-DD", ...}).

The challenge I'm facing is filtering results by a date range (e.g., documents from the last 7 days). The n8n "Supabase Vector Store" node calls the standard match_documents function, which filters metadata using the metadata @> filter operator. While this works perfectly for exact key-value matches entered in the node's "Metadata Filter" options, it seems unsuitable for range comparisons on values (like dates) stored inside the JSONB.

Given these constraints with the standard function and the n8n node, has anyone found an effective alternative or workaround? I need to combine the vector similarity search with filtering by a date range stored in the metadata, preferably within the n8n context. Looking for suggestions: perhaps SQL function modifications that are compatible, or different n8n approaches beyond the basic Supabase node?

Thanks for any insights!
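One workaround that stays inside n8n, sketched here under assumptions, is to over-fetch from the vector store and then filter the matches by date in a Code node. The Python below is a minimal illustration, not a tested n8n node: the shape of each match (a dict with a `metadata` dict holding an ISO `date` string) mirrors the metadata format described above, while the function names are hypothetical. A caveat worth stating: post-filtering can drop relevant documents if the vector search limit is too small, so filtering inside the SQL function may be preferable when that matters.

```python
from datetime import date, timedelta


def last_n_days(today: date, n: int) -> tuple:
    """Return an inclusive (start, end) date range covering the last n days."""
    return today - timedelta(days=n), today


def filter_by_date_range(matches: list, start: date, end: date) -> list:
    """Keep only matches whose metadata["date"] falls within [start, end].

    Matches with a missing or unparseable date are dropped rather than guessed.
    """
    kept = []
    for match in matches:
        raw = match.get("metadata", {}).get("date")
        try:
            doc_date = date.fromisoformat(raw)
        except (TypeError, ValueError):
            continue  # no date, or not in YYYY-MM-DD form
        if start <= doc_date <= end:
            kept.append(match)
    return kept
```

Usage would look like `filter_by_date_range(matches, *last_n_days(date.today(), 7))`, applied to a result list fetched with a larger-than-needed limit.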

Exciting Announcement 🥁🥳

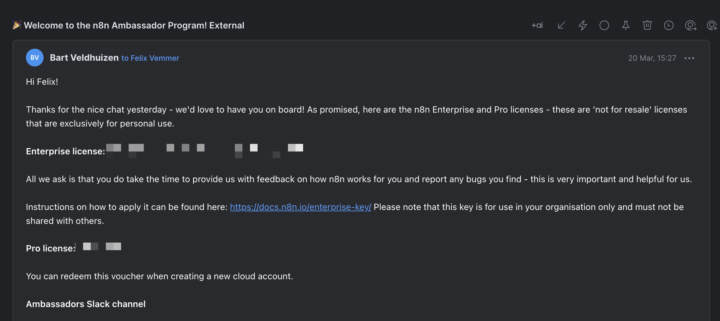

Hey everyone, I am super excited to share that I am officially the second Berlin n8n ambassador 😎 I just got the confirmation from Bart, and I'm thrilled that I can now work even more closely with Bart, Max and the n8n team. I think this is a great way to channel some of our community ideas, questions, and concerns directly to the n8n team. I'll also be organizing some workshops (hopefully live and in Berlin), so I would love to see you there! Happy Flowgramming!

MCP & RAG

The Future of AI: Will MCP Replace RAG? Here’s What You Need to Know.

AI is evolving fast, so fast that today’s most advanced models might be outdated sooner than we think. Right now, a big debate is happening in AI: Will Model Context Protocol (MCP) replace Retrieval-Augmented Generation (RAG)? Some say MCP is the future. Others argue RAG is here to stay. Here’s what you need to know (explained simply, without the jargon).

What is RAG?

Retrieval-Augmented Generation (RAG) is an AI technique that helps models pull in outside knowledge to generate better answers. Instead of relying only on what they were trained on, AI models can search external data sources in real-time to improve accuracy.

🔹 Example: If you ask an AI about the latest stock prices, it uses RAG to fetch the most up-to-date numbers from financial databases.

Why is this powerful? Because AI models can’t remember everything, and RAG helps them stay current and relevant.

What is MCP?

Model Context Protocol (MCP) is a new standard for how AI models access and use external data. Instead of just retrieving random bits of knowledge, MCP sets rules for how AI connects to different systems, ensuring that information is:

✅ More structured (not just loose fragments of text)
✅ More secure (because it follows strict access rules)
✅ More efficient (by reducing unnecessary searches)

🔹 Example: Imagine AI in a hospital. Instead of searching the web for medical advice, MCP would securely connect to official hospital databases, ensuring that doctors get accurate, real-time patient information.

So, Will MCP Replace RAG?

No, but it might change how RAG works. MCP is like a powerful new highway for AI models to access data. RAG is still useful, but MCP could make it more structured, secure, and reliable.

The Future? A Combination of Both.

MCP could work with RAG to create AI that is:

🔹 Smarter – because it retrieves data in a structured way
🔹 Faster – because it doesn’t waste time searching everywhere
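For readers who want to see the retrieve-then-generate idea behind RAG in code, here is a deliberately tiny Python sketch. It is a toy under stated assumptions: real systems typically retrieve with embeddings and a vector database, whereas this version swaps in simple word overlap, and the function names and prompt layout are made up for illustration. It only shows the two steps: pick the most relevant text, then put it in front of the question before it goes to a model.

```python
def retrieve(question: str, documents: list, top_k: int = 1) -> list:
    """Rank documents by how many words they share with the question.

    Word overlap stands in for the embedding similarity a real RAG system
    would use; it is only meant to make the retrieval step visible.
    """
    question_words = set(question.lower().split())
    ranked = sorted(
        documents,
        key=lambda doc: len(question_words & set(doc.lower().split())),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(question: str, documents: list) -> str:
    """Prepend the retrieved text to the question as context for the model."""
    context = "\n".join(retrieve(question, documents))
    return f"Context:\n{context}\n\nQuestion: {question}"
```

The model call itself is left out on purpose; the prompt returned by `build_prompt` is what would be sent to whichever model is in use.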

Automating Follow-Ups: Capturing Conversations and Scheduling Reminders with One Click

Hey guys! I’m looking to automate follow-ups across Gmail, LinkedIn, and WhatsApp. Ideally, I want a system where, with one click, I can schedule a reminder for a specific date if I don’t get a response. It should automatically capture the recipient’s name, platform, and conversation link, then let me select a follow-up date. On the scheduled date, a calendar event is created at 7:45 AM with a list of people to recontact, including links to the conversations, so I can just click a link to open the LinkedIn conversation, for example. Their details should also be sent to the CRM for tracking. Has anyone set up something similar? How would you approach this?

0 likes • Feb '25

Hey @Felix Vemmer, just wanted to update you on my progress. I ended up going with a Chrome web extension, and I used Lovable to help generate it. The extension automatically captures the link from wherever I am (a LinkedIn conversation, a Gmail thread, or WhatsApp), and with just a few clicks, I can send the details to an Airtable base. The web extension sends a webhook to n8n, which then adds the info to Airtable, keeping everything organized. I also set up a workflow so that every morning I receive an email with a list of people to follow up with. Right now, I’m exploring ways to scrape different platforms to automatically extract the contact’s name and email, so I won’t have to input them manually. Here’s a quick demo video

Active 329d ago

Joined Feb 13, 2025