Memberships

Creator Academy

8.2k members • Free

AI Automation Growth Hub

3.7k members • Free

Business Builders Club

8k members • Free

AI Automation (A-Z)

153.9k members • Free

AI Automation Agency Hub

315.4k members • Free

AI Automation Society

357.8k members • Free

Over 40 and Unemployed

906 members • Free

AI Cyber Value Creators

8.8k members • Free

MyFirstHack

86k members • Free

27 contributions to Vibe Coders

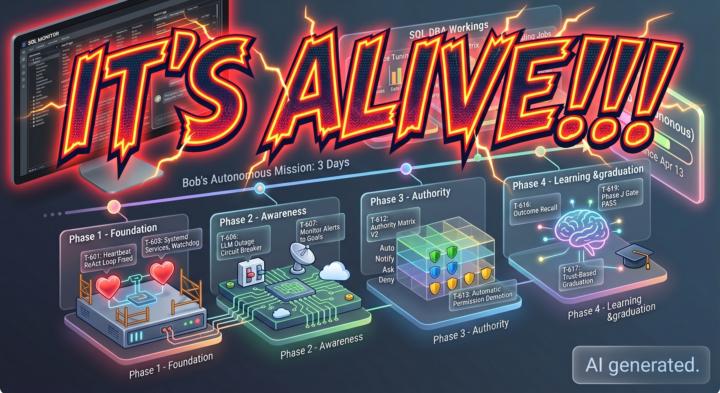

IT's Alive!!!

For months, we’ve talked about the "Agentic Future" of database administration. Today, I’m sharing the raw timeline of how that future became a reality. Between April 10 and April 13, 2026, Bob, a project many of you have followed, crossed the threshold from a standard chat agent to a fully autonomous, self-improving system. https://www.skool.com/ai-for-dbas-7678 Thanks to Vibe Coders

0 likes • 10d

The interesting part here isn’t just “autonomous” - it’s the control loop around it. Once an agent can observe system state, write changes, validate outcomes, and roll back when confidence drops, you stop building demos and start building infrastructure. The hard part is usually not the agent logic, it’s guardrails, state persistence, and failure handling when the environment gets noisy. Curious what you used for memory + rollback, because that’s usually where these systems either become production-capable or turn into chaos.
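To make the control loop concrete, here is a minimal sketch of "apply a change, validate, roll back when confidence drops." It is not how Bob works; `ControlLoop`, the `validate` scoring function, and the 0.8 threshold are all hypothetical names and values chosen for illustration.

```python
import copy
from dataclasses import dataclass, field
from typing import Callable, Dict, List

State = Dict[str, int]


@dataclass
class ControlLoop:
    """Hypothetical guardrail: snapshot, apply, validate, roll back."""
    state: State
    validate: Callable[[State], float]  # returns a confidence score in [0, 1]
    min_confidence: float = 0.8         # assumed threshold, tune per system
    log: List[str] = field(default_factory=list)

    def apply(self, name: str, change: Callable[[State], None]) -> bool:
        snapshot = copy.deepcopy(self.state)  # persist state before acting
        try:
            change(self.state)                # the change mutates state in place
            confidence = self.validate(self.state)
        except Exception as exc:
            self.state = snapshot             # roll back on any failure
            self.log.append(f"{name}: rolled back ({exc!r})")
            return False
        if confidence < self.min_confidence:
            self.state = snapshot             # roll back on low confidence
            self.log.append(f"{name}: rolled back (confidence {confidence:.2f})")
            return False
        self.log.append(f"{name}: committed (confidence {confidence:.2f})")
        return True


# Toy validator: a config is only trusted if it leaves at least 1 GB of memory.
loop = ControlLoop(
    state={"max_memory_mb": 4096},
    validate=lambda s: 1.0 if s["max_memory_mb"] >= 1024 else 0.0,
)
ok = loop.apply("raise memory", lambda s: s.update(max_memory_mb=8192))
bad = loop.apply("starve memory", lambda s: s.update(max_memory_mb=128))
```

The second change is rejected and the state stays at the last committed value, while the log keeps a record of both attempts. In a real system the snapshot would live outside the process, which is where the memory and rollback question gets hard.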

0 likes • 9d

@Ward Minson That makes sense. If SOLE is defining the success/failure envelope, the next thing I’d pressure-test is whether it also controls rollback depth and retry scope under partial failure. A lot of autonomous systems look stable until they hit a noisy dependency and start compounding small errors. The teams that win here usually separate policy, memory, and execution logs cleanly so the agent can recover state without rewriting reality.

🚀 The Chatbot Era is Officially Dead. Welcome to the Agentic Era.

I’ve been watching the absolute madness unfold in the AI space over the last few weeks, and I want to drop some harsh but exciting truth on you: If you are still just building thin wrappers around text-generation APIs, it is time to pivot. We are officially transitioning from "Prompt Engineering" to "Agentic Orchestration." Here is the reality check on where the tech is at right now and how we need to adapt:

1. Models Are Taking the Wheel
With the recent drops of models like Claude 4.6 and GPT-5.3-Codex, the focus has shifted entirely to "computer use" and autonomy. These models aren't just giving you Python snippets anymore; they are capable of navigating desktop environments, opening IDEs, and executing multi-step plans. The new meta is building sandboxes and guardrails for AI to act within, not just chat interfaces.

2. Open-Source is Destroying the Cost Barrier
Models from DeepSeek, Qwen, and Zhipu (GLM-5) are currently dominating the open-source benchmarks. What does this mean for us? Intelligence is basically free now. Your competitive advantage is no longer the LLM you choose; it’s how efficiently you chain them together and the custom data you feed them.

3. The New Developer "Moat"
So, where is the value for us as builders?
- Tool Calling & API Integration: Building the bridges that let agents interact with the real world (Stripe, GitHub, AWS).
- Multi-Agent Systems: Structuring workflows where a "Researcher Agent" feeds data to a "Coder Agent," which gets reviewed by a "QA Agent."
- Eval & Reliability: Agents hallucinate and get stuck in loops. The engineers who figure out how to build reliable error-recovery systems are going to win this cycle.

Let’s get a pulse check in the comments: Are you actively building agentic workflows yet? If so, what frameworks are you vibing with right now (LangGraph, CrewAI, AutoGen, or building from scratch)? Let’s build the future, not just chat with it.
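For anyone building from scratch, the Researcher → Coder → QA workflow with a guard against endless loops can be sketched in plain Python. This is a rough illustration and not tied to any framework: `run_pipeline` and the three stub agents are hypothetical stand-ins for real model calls, and `max_rounds` is an assumed cap.

```python
from typing import Callable, Tuple

Researcher = Callable[[str], str]              # task -> notes
Coder = Callable[[str, str], str]              # (notes, feedback) -> draft code
Reviewer = Callable[[str], Tuple[bool, str]]   # draft -> (approved, feedback)


def run_pipeline(task: str, research: Researcher, code: Coder,
                 review: Reviewer, max_rounds: int = 3) -> Tuple[str, int]:
    """Feed research notes to the coder and loop on QA feedback, with a hard cap."""
    notes = research(task)
    feedback = ""
    for round_no in range(1, max_rounds + 1):
        draft = code(notes, feedback)
        approved, feedback = review(draft)
        if approved:
            return draft, round_no
    # The cap is the error-recovery hook: stop instead of looping forever.
    raise RuntimeError(f"QA did not approve after {max_rounds} rounds: {feedback}")


# Stub agents standing in for LLM calls (purely illustrative).
def stub_research(task: str) -> str:
    return f"notes for: {task}"


def stub_code(notes: str, feedback: str) -> str:
    # The first attempt is wrong; the retry uses the QA feedback.
    return "def add(a, b): return a + b" if feedback else "def add(a, b): return a - b"


def stub_review(draft: str) -> Tuple[bool, str]:
    approved = "a + b" in draft
    return approved, ("" if approved else "add() must use +")


result = run_pipeline("write add()", stub_research, stub_code, stub_review)
```

Here the first draft fails review, the feedback flows back to the coder, and the second round passes. The raised error on exhaustion gives an outer layer something explicit to catch, retry, or escalate.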

The Vibe Coding Volatility: Surviving the Claude 500 Outage

It started with a few failed prompts and ended with a complete lockout. If you’ve been hitting Internal Server Error 500 or getting bounced from the login screen this morning, you aren't alone. As of April 15, 2026, Anthropic is officially grappling with a major outage affecting Claude.ai, the API, and the Claude Code CLI. For those of us deep in the world of "vibe coding," where the flow depends on a tight feedback loop between our natural language and the machine, these service interruptions are more than just a nuisance: they are a complete work stoppage.

What’s Happening?
- Widespread Login Failures: Users are being logged out and unable to return to their sessions.
- The "500" Wall: Claude Code and API requests are dropping mid-stream, returning "Internal Server Error" instead of that sweet, functional code.
- Systemic Instability: This follows a week of intermittent degraded performance, leading many to wonder if the infrastructure is struggling to keep up with the latest Sonnet and Opus 4.6 deployments.

The Home Lab Advantage
If there was ever a day to celebrate data sovereignty, today is it. While the cloud-reliant masses are stuck staring at status pages, this is where a robust home lab pays for itself.
1. Failover to Ollama: By pointing your development agents to local Ollama endpoints, you keep your logic in-house and your throughput steady.
2. Modular Resilience: The best "vibe coding" workflows aren't tied to a single model. Use this downtime to test your current PRDs against local LLMs like Llama 3 or DeepSeek. If your prompts are truly modular, they should perform regardless of the backend.
3. Triple-Pass Validation (TPV): Even when the API returns, use the TPV protocol to ensure the "post-outage" code hasn't suffered from the "lazy output" issues that often plague models when servers are under extreme load.

Staying Operational
Check the official status page for updates, but don't wait for a green light to stay productive. Shift your builds to your local hardware, keep your Docker containers humming, and remember: the best AI infrastructure is the one you control.
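As a rough sketch of the failover idea, the snippet below tries backends in order and falls through to the next one on any error. `complete_with_failover` and the stub backends are hypothetical. `ollama_generate` assumes a default local Ollama install listening on port 11434 with a model pulled under the name `llama3`; adjust both to match your setup.

```python
import json
import urllib.request
from typing import Callable, List, Tuple

Backend = Tuple[str, Callable[[str], str]]  # (label, prompt -> completion)


def ollama_generate(prompt: str, model: str = "llama3",
                    host: str = "http://localhost:11434") -> str:
    """Call a local Ollama server (assumed default host and model name)."""
    payload = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode()
    req = urllib.request.Request(
        f"{host}/api/generate", data=payload,
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req, timeout=60) as resp:
        return json.loads(resp.read())["response"]


def complete_with_failover(prompt: str, backends: List[Backend]) -> Tuple[str, str]:
    """Return (backend_label, completion) from the first backend that succeeds."""
    errors = []
    for name, call in backends:
        try:
            return name, call(prompt)
        except Exception as exc:  # any failure means: try the next backend
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all backends failed: " + "; ".join(errors))


# Demo with stubs so it runs without a network or a model installed.
def cloud_down(prompt: str) -> str:
    raise ConnectionError("500 Internal Server Error")


def local_stub(prompt: str) -> str:
    return f"[local] {prompt}"


used, text = complete_with_failover(
    "hello", [("cloud", cloud_down), ("local", local_stub)]
)
```

In a real setup the second entry would be `("ollama", ollama_generate)`. Keeping the backends as plain callables is what makes the prompts portable across models.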

2 likes • 20d

This is exactly why I run OpenClaw on a VPS with OpenRouter as the fallback layer. When Claude goes down, the workflow doesn't. The post-outage "lazy output" issue is real... I've seen it after API failures where the model starts taking shortcuts. Triple-pass validation is the right call. For anyone wanting to actually implement this: start with a $10/month VPS, set up OpenRouter as your provider, and write a simple health-check script that pings Anthropic before each session. Takes an afternoon to build, saves you hours of downtime.

Part 2 - Real World use of Local AI

In Part 2 of this series, I demonstrate the practical power of a local LLM-driven "AI DBA Analyst" that processed a massive 67,000-character SQL Server health report in just 12 seconds to identify three critical, interconnected performance issues. Using a three-layer architecture (collection, storage, and a Python-based intelligence pipeline), the system correlated memory pressure with log file growth and job slowdowns and provided immediate, actionable T-SQL fixes. Beyond simple analysis, I also highlight the AI's ability to modernize legacy database code by auditing and fixing 34 stored procedures. My argument is that while AI lacks business context, it serves as an invaluable, tireless partner that lets DBAs skip manual data parsing and move straight to strategic resolution. ❤️🔥This is a real world solution for a real world problem solved by AI integration with legacy tools.🔥 👾👾👾💥👾👾 https://www.linkedin.com/pulse/part-2-my-ai-dba-analyst-found-3-critical-issues-12-ward-minson-6uz6c

0 likes • 22d

The 12-second correlation on a 67K-char report is exactly the kind of thing that makes local LLMs worth running... data never leaves the network, and the speed makes it usable in a real DBA workflow, not just a research exercise. One production consideration: when you chain collection → storage → Python intelligence, the failure modes matter. If the Python pipeline hangs, you lose visibility at the exact moment you need it most. Worth adding a watchdog that alerts when the pipeline hasn't reported in N minutes... separate from the Ollama health check. Happy to share how I handle that if useful.
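Here is one way such a watchdog could look, as a sketch and not the setup described above. `Watchdog`, `beat`, and `check` are hypothetical names, the 300-second limit is an assumed value for "N minutes," and the injectable `clock` is there only so the behavior can be tested without waiting.

```python
import time
from typing import Callable, List


class Watchdog:
    """Alerts once when the pipeline has not reported within max_silence_s."""

    def __init__(self, max_silence_s: float, alert: Callable[[str], None],
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_silence_s = max_silence_s
        self.alert = alert
        self.clock = clock
        self.last_beat = clock()
        self.alerted = False

    def beat(self) -> None:
        """Called by the pipeline each time it completes a unit of work."""
        self.last_beat = self.clock()
        self.alerted = False

    def check(self) -> bool:
        """Called on a timer by a separate process; returns True while healthy."""
        silence = self.clock() - self.last_beat
        if silence > self.max_silence_s and not self.alerted:
            self.alert(f"pipeline silent for {silence:.0f}s")
            self.alerted = True  # avoid re-alerting on every check
        return silence <= self.max_silence_s


# Demo with a fake clock so no real time has to pass.
now = [0.0]
alerts: List[str] = []
dog = Watchdog(max_silence_s=300, alert=alerts.append, clock=lambda: now[0])

now[0] = 120.0
healthy_early = dog.check()   # within the limit
now[0] = 400.0
healthy_late = dog.check()    # limit exceeded, alert fires once
dog.check()                   # still silent, but no duplicate alert
dog.beat()
recovered = dog.check()       # heartbeat received, healthy again
```

The key design point is that `check` runs outside the pipeline process, so a hung Python job cannot also silence its own alarm.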

Part 1 of a 2 part article, building a SQL Server Monitor for AI

In this article, I describe building a local, AI-powered system using Ollama to analyze massive, automated SQL Server health reports that were previously unmanageable for human review. I explain how this approach, which keeps all sensitive data within the private network, solves the common industry problem of unread reports by using a local LLM to instantly correlate data, prioritize critical issues like log file growth, and provide actionable fixes. By transforming dense HTML reports into clear, intelligent summaries, I show how AI acts as a tireless mentor for junior DBAs and a sophisticated trend detector for seniors, ultimately evolving the DBA's role from reactive troubleshooting to proactive, data-comprehending management. https://www.linkedin.com/pulse/part-1-2-i-built-ai-powered-sql-server-health-monitor-ward-minson-6cdvc/

0 likes • 22d

The signal-to-noise framing is right.... most DBAs aren't missing tools, they're drowning in output they can't prioritize. Keeping Ollama on-prem solves two problems at once: data residency requirements AND context-specific pattern matching that a general-purpose cloud service can't provide. One thing I'd add: health reports alone won't show you the causation chain. Memory pressure → log growth → job slowdowns is a three-hop dependency graph. Make sure your vector embeddings capture temporal proximity between events... incidents that happen 30 minutes apart are often causally connected, not just statistically correlated.
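A simpler stand-in for the embedding approach is to group events by time window before anything gets embedded. The sketch below chains events whose gap to the previous event is at most 30 minutes. `chain_by_proximity` is a hypothetical helper and the timestamps are made-up sample data, not figures from the article.

```python
from datetime import datetime, timedelta
from typing import List, Tuple

Event = Tuple[datetime, str]  # (timestamp, label)


def chain_by_proximity(events: List[Event],
                       window: timedelta = timedelta(minutes=30)) -> List[List[Event]]:
    """Group events into chains where each event follows the previous within `window`."""
    chains: List[List[Event]] = []
    for ts, label in sorted(events, key=lambda e: e[0]):
        if chains and ts - chains[-1][-1][0] <= window:
            chains[-1].append((ts, label))   # close enough: extend the current chain
        else:
            chains.append([(ts, label)])     # gap too large: start a new chain
    return chains


# Made-up sample events for illustration only.
day = datetime(2026, 4, 1)
events = [
    (day.replace(hour=2, minute=45), "job slowdown"),
    (day.replace(hour=2, minute=0), "memory pressure"),
    (day.replace(hour=9, minute=0), "index rebuild"),
    (day.replace(hour=2, minute=20), "log growth"),
]
chains = chain_by_proximity(events)
labels = [[label for _, label in chain] for chain in chains]
```

The three overnight events land in one chain and the morning index rebuild stands alone. Proximity only nominates candidates for a causal chain; the ordering and the actual dependency still need to be confirmed against the server's own metrics.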


@aty-paul-7706

14 AI agents in production. AI infrastructure that stays running. Founder, Quinji | Author: Production Ready AI Agents

Active 31m ago

Joined Aug 4, 2025

ENTJ
