12-Week AI Certification Program

Starting May 11.

Announcing our 12-Week AI Certification Program—your complete path from beginner to building production-ready AI systems. In 60 focused sessions, you’ll master Python, machine learning, deep learning, LLMs, and AI agents, while gaining hands-on experience deploying real-world solutions.

Designed for ambitious builders, this program transforms you into a confident AI practitioner ready for modern industry challenges.

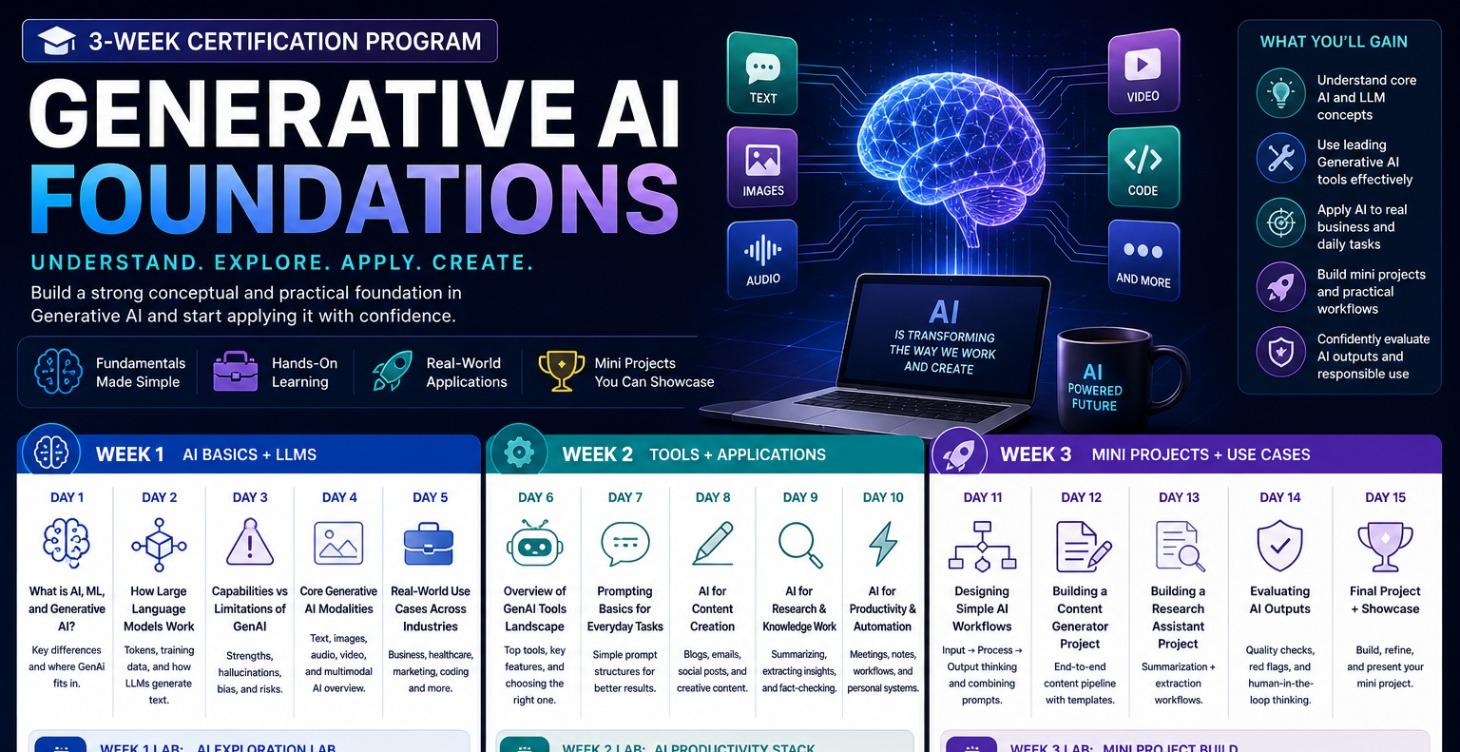

3 Week Generative AI Foundations Certification

Starting May 11.

Build a strong foundation in AI and start applying it immediately. Learn how LLMs work, explore powerful tools, and create real-world mini projects. Designed for beginners, focused on practical outcomes.

Understand. Apply. Create with confidence.

Start your journey into Generative AI today.

4 Week AI Agents & Agentic Workflows Certification

Starting May 18.

Go beyond prompts—build intelligent systems that think, act, and deliver results. Learn agents, memory, RAG, and multi-agent orchestration through hands-on projects.

Design. Build. Orchestrate. Deploy.

Create production-ready AI systems and showcase a powerful capstone.

Start building the future of AI today.

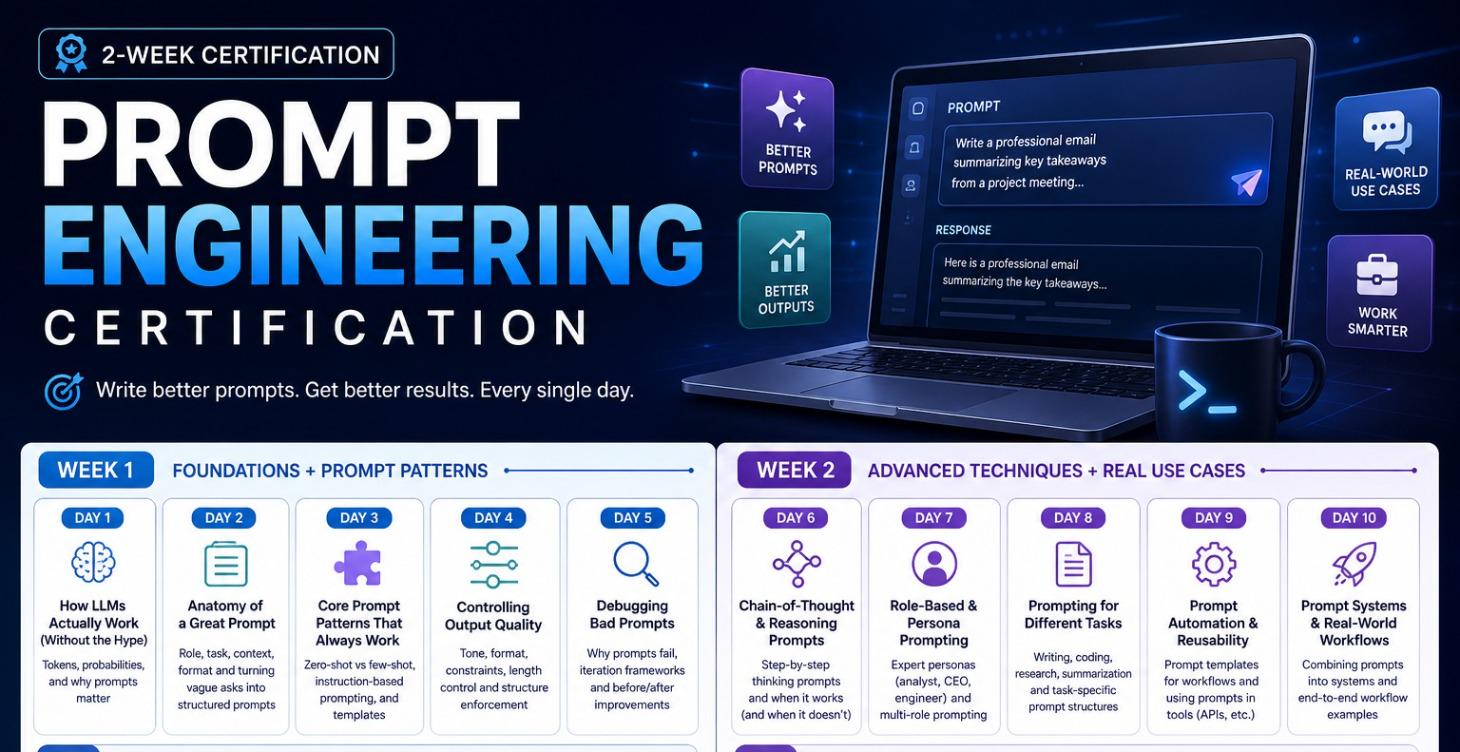

2 Week Prompt Engineering Certification

Starting May 18.

Master the skill that powers AI success. Learn how to write high-impact prompts, improve outputs, and build reusable workflows you can apply immediately at work. Includes hands-on labs and real-world use cases.

Build your Prompt OS. Get better results—every single day.

Start your AI advantage now.

2 Week No-Code AI Automation Certification

Starting May 18.

Automate your work without writing a single line of code. Learn Zapier, Make, and AI-powered workflows to streamline emails, content, and data processes. Build real automations you can use immediately.

Work smarter. Save time. Scale faster.

Start building powerful AI automations today.

2 Week AI for Marketers Certification

Starting May 18.

Create high-converting content, automate campaigns, and analyze performance using AI. Learn copywriting, ads, SEO, and marketing workflows with hands-on labs and real-world use cases.

Create. Automate. Analyze. Grow.

Build AI-powered marketing systems that deliver measurable results.

Start transforming your marketing with AI today.

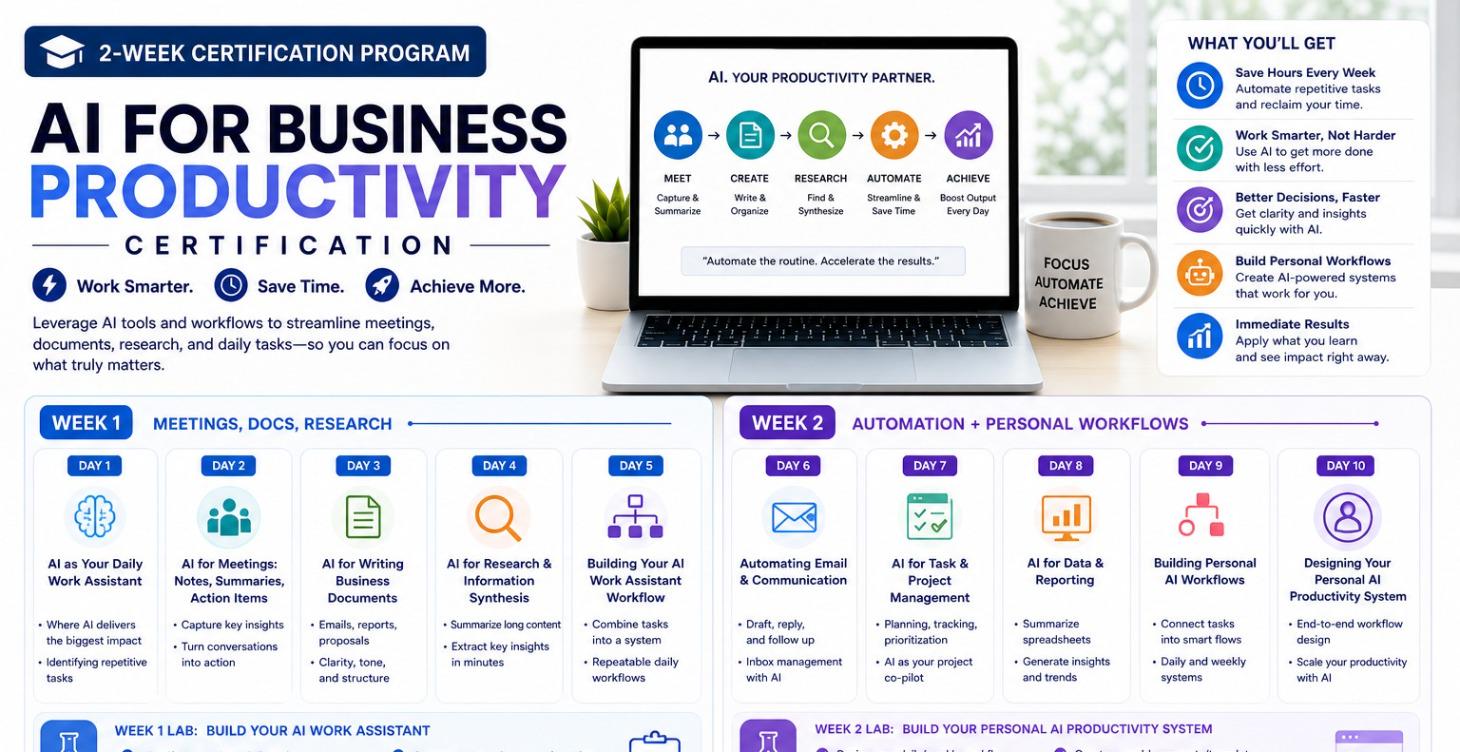

2 Week AI for Business Productivity Certification

Starting May 25.

Get your time back with AI. Automate meetings, documents, research, and daily workflows to work faster and smarter. Build your personal AI productivity system with hands-on labs and real-world use cases.

Work smarter. Save time. Achieve more.

Start transforming your daily workflow with AI today.

3 Week AI for Cybersecurity Certification

Starting May 25.

Master how AI transforms threat detection and defense. Learn to identify risks, build detection models, and design AI-powered security systems. Gain hands-on experience with real-world scenarios and labs.

Detect. Defend. Secure.

Build practical skills to protect systems and stay ahead of evolving cyber threats.

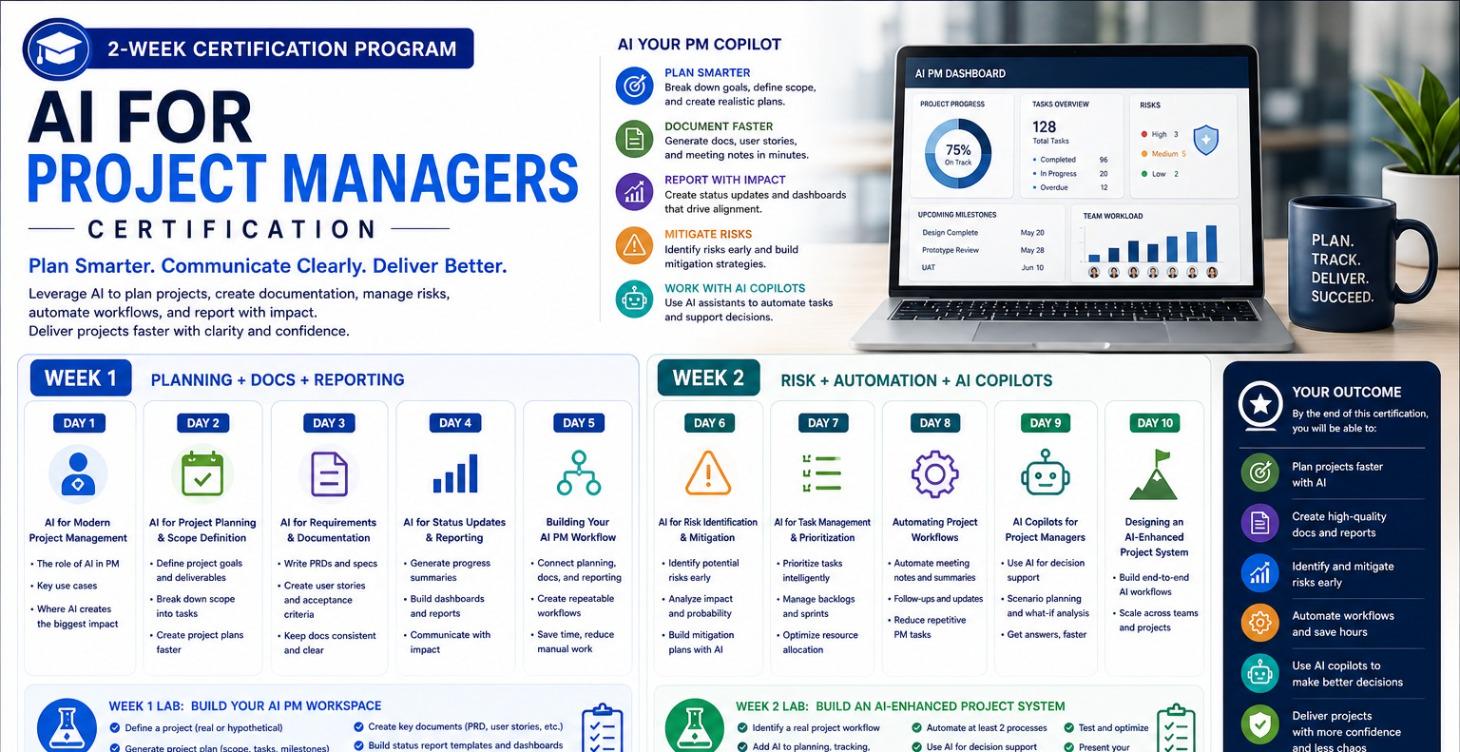

2 Week AI for Project Managers Certification

Starting May 25.

Plan smarter, communicate clearly, and deliver projects faster with AI. Learn to automate documentation, reporting, risk management, and workflows using AI copilots and tools.

Plan. Track. Deliver.

Build an AI-powered project system that improves efficiency, reduces risks, and helps you lead projects with confidence.

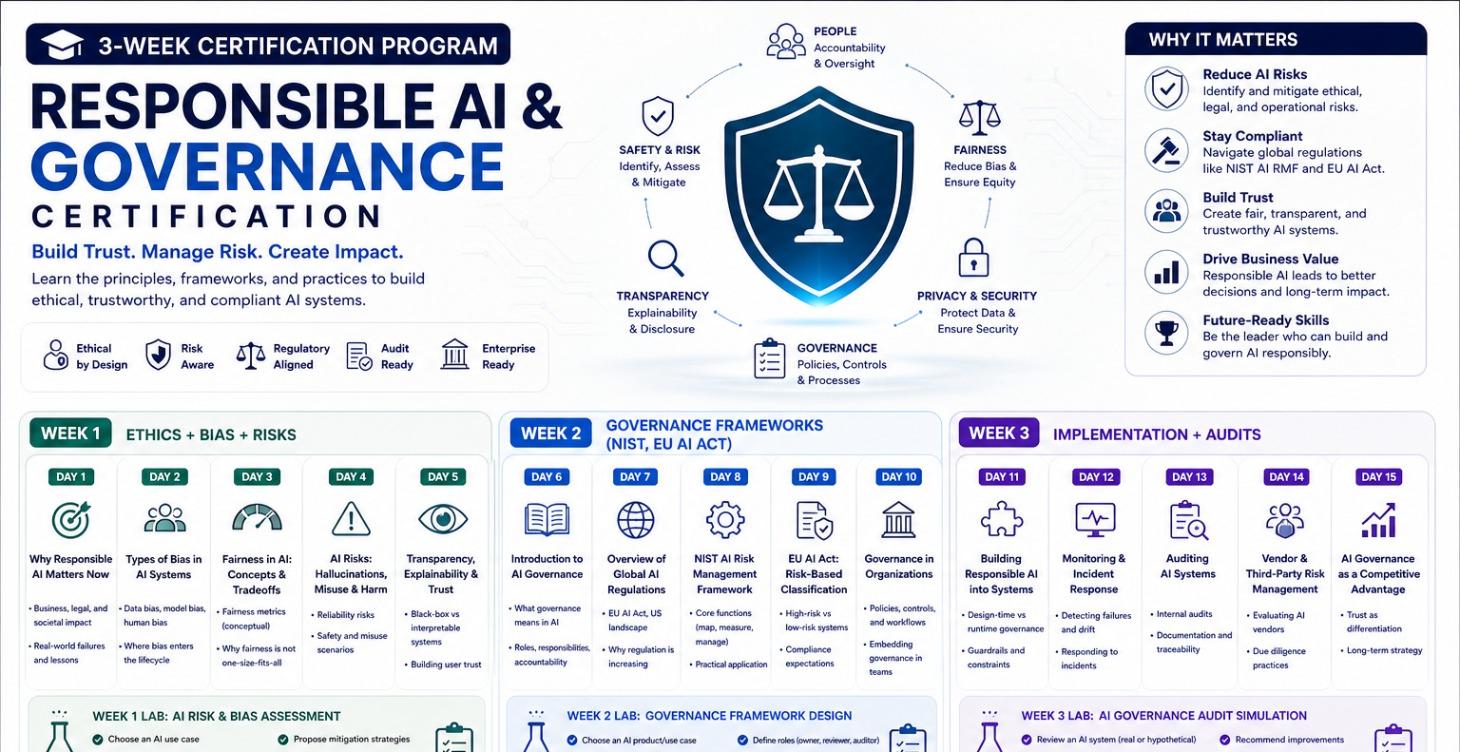

3 Week Responsible AI & Governance Certification

Starting May 25.

Build ethical, trustworthy, and compliant AI systems. Learn bias detection, risk management, and global frameworks such as NIST and the EU AI Act. Gain hands-on experience designing governance strategies and conducting audits.

Build trust. Manage risk. Lead responsibly.

Become the leader who drives safe and scalable AI adoption.

AI Product Owner & Business Leadership Program

The AI Product Owner & Business Leadership Program prepares non-technical leaders to design, launch, and scale AI-powered products responsibly. Learn how AI works conceptually, evaluate feasibility, manage data and model risks, govern autonomous systems, and drive real business value. The program focuses on product judgment, strategy, ethics, and leadership, so you can confidently lead AI initiatives without writing code.

AI Fundamentals for Business

What AI can and cannot do — without math or code

Duration: 21 weeks · 105 teaching days

Audience: Product Owners, Product Leaders, Business Stakeholders

Outcome: You develop AI judgment, not technical skills

How Machine Learning Really Works

Mental Models for Models

Duration: 21 Weeks · 105 Teaching Days

Audience: AI Product Owners, PMs, Business & Tech Leaders

Style: Conceptual, visual, analogy-driven, zero math

Data Literacy for Product Owners

Understanding Data, Quality, and Limits

Duration: 21 Weeks · 105 Teaching Days

Audience: Non-technical Product Owners, AI PMs, Business Leaders

Product Thinking & Problem Framing

Duration: 5 Months · 21 Weeks · 105 Teaching Days

Audience: AI Product Owners, PMs, Business Leaders

Outcome: Consistently identify high-value, feasible, responsible AI problems—and avoid costly AI mistakes.

AI Use Cases Across Industries

Duration: 5 Months · 21 Weeks · 105 Teaching Days

Audience: Product Owners, PMs, Business Leaders

Goal: Build industry-agnostic AI intuition by recognizing repeatable patterns

Ethics, Bias & Trust in AI

Duration: 5 Months · 21 Weeks · 105 Teaching Days

Audience: Non-technical Product Owners & Business Leaders

Philosophy: Ethics as a business capability, not a philosophy class

Principal AI Security Engineer Internship Program

A Principal AI Security Engineer is someone who can:

- Design AI-native security systems

- Combine machine learning, LLMs, and agents

- Understand attackers, defenders, and governance

- Automate decisions—not just generate reports

This internship is designed to train interns at that level.

School of AI 10 Week Internship Program

We’re excited to open applications for our AI Internship Program, starting 15th January 2026.

Congratulations and welcome to the School of AI.

As an AI Intern, you will:

- Build 25 end-to-end AI projects

- Work hands-on with real-world AI systems

- Create a strong GitHub + project portfolio

- Learn how to showcase your work to future employers

This is a build-first, execution-driven program designed for motivated learners who want real experience—not just theory.

School of AI — Web Developer Internship Program

Duration: 10 weeks

Projects: 25 Web Development Projects

Format: Self-paced

Outcome: A complete web dev portfolio + GitHub proof-of-work + completion certificate

Who this is for

- Beginners who can do basic HTML/CSS and want real projects

- Career switchers building a portfolio

- Developers wanting modern full-stack practice

School of AI - Machine Learning Internship Program

The Machine Learning Internship Program at School of AI is a hands-on, project-based experience where learners build 25 real-world machine learning projects end to end. Interns receive step-by-step guidance on data analysis, model building, evaluation, and deployment-ready code, while learning how to document and publish professional projects on GitHub. The focus is on practical skills, strong portfolios, and industry-ready ML workflows.

School of AI - Data Scientist Internship Program

The School of AI Data Scientist Internship Program is a hands-on, project-driven experience where participants build 25 real-world data science projects from scratch. Interns learn data analysis, machine learning, storytelling, and deployment by writing code step by step and publishing every project to GitHub—graduating with a strong, job-ready data science portfolio.

January 2026 Build 30 AI Projects in 30 Days

January 2026: Build 30 AI Projects in 30 Days is a hands-on, fast-paced challenge designed to help you master AI by building real projects every day. From chatbots and AI agents to automation tools, data analysis, and generative AI apps, you’ll gain practical experience using modern frameworks and tools. Each day delivers a complete, independent project—helping you sharpen skills, build a strong portfolio, and gain confidence applying AI in real-world scenarios.

Build a $15K/Month Course

Live: January 17th, 2026 - 9 AM EST

I built a $10K–$15K/month income by creating online courses from scratch. In this live session, I’ll show you the step-by-step process, tools, and mistakes to avoid. No prior experience needed—just a computer and willingness to execute.

AI Engineer Bootcamp

The 7-Day AI Engineer Bootcamp is a hands-on, beginner-friendly program designed to help you understand and build real-world AI systems in just one week. You’ll learn how AI works under the hood, use LLMs, build AI agents, work with embeddings and RAG, and deploy AI-powered applications. Perfect for developers, professionals, and AI enthusiasts who want practical skills rather than theory. Starts January 4th. The first batch is completely free.

5-Day AI Evaluations Bootcamp

AI Evals for Engineers & Product Managers is a 5-day hands-on bootcamp focused on designing, measuring, and improving real-world AI systems. Learn how to evaluate LLMs, RAG pipelines, and AI agents using meaningful metrics, human feedback, and automated LLM-based evals. You’ll build production-ready evaluation frameworks, catch regressions, support model upgrades, and make confident product decisions using data—not guesswork.

5-Day AI Product Manager Bootcamp

From Idea to Scalable AI Product

Audience

- Product Managers & Product Owners

- Technical Program Managers

- Founders & Startup PMs

- Engineers transitioning into PM roles

- Business leaders managing AI initiatives

Outcome

By the end of Day 5, participants will design, validate, and present a production-ready AI product plan with metrics, risk controls, and execution strategy.

Jan 12 2026: 5-Day Agentic AI Bootcamp

From LLMs to Autonomous, Multi-Agent Systems

- 5 Days | ~6–8 hours/day

- 40% theory, 60% hands-on

- Daily mini-projects + cumulative system build

- Tool-agnostic (works with OpenAI, Anthropic, open-source LLMs)

Outcome:

By Day 5, participants build a fully autonomous, multi-agent AI system with memory, tools, orchestration, and guardrails.

Harvard Series - GenAI: Foundations, Risks & Future

This curated Harvard playlist delivers a clear, practical understanding of Generative AI—from how deep neural networks and large language models work to how they should be used responsibly. Topics include prompt engineering, system design beyond chatbots, alignment with human values, real-world case studies, and key risks such as bias, misinformation, and intellectual property. Designed for professionals and leaders seeking strategic, real-world AI insight.

Stanford CME 295: Transformers & Large Language Models

Explains the evolution of NLP methods, the Transformer architecture, attention mechanisms, and how large language models are built, trained, tuned, and deployed.

Topics Covered Include:

- NLP basics (tokenization, embeddings)

- Transformer architecture & attention heads

- Variants like BERT, GPT, T5

- Training fundamentals

- Fine-tuning techniques (SFT, LoRA)

- Reinforcement-based tuning (RLHF)

- Retrieval-augmented generation & agent systems

- Evaluation and advanced LLM workflows
