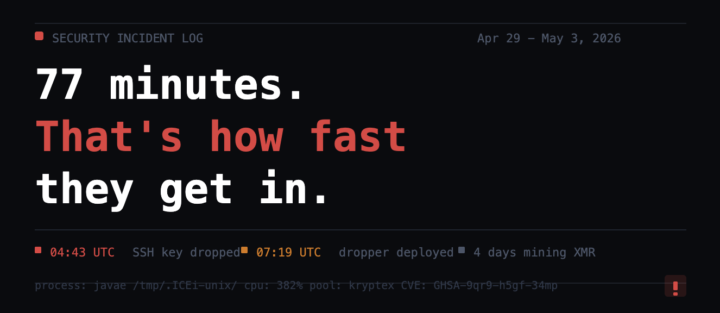

77 minutes. That's how fast an attacker gets in through an unpatched Next.js container.

77 minutes. That's how fast an attacker got inside our server. A crypto miner ran silently for 4 days before I caught it. Mining Monero. CPU pegged at 382%. Hiding as a Java process in /tmp/.ICEi-unix/.

It hit a container I was running with an outdated Next.js build. Critical RCE vulnerability, GitHub advisory GHSA-9qr9-h5gf-34mp, patched in 15.5.15+. Bots are scanning for it constantly.

I did the forensics properly before touching anything. Verified the attacker never escaped the container. ClawMarket was never affected. Users were never at risk.

Then I built a heartbeat cron that runs every 10 minutes checking for known miner signatures, sustained CPU spikes, and unapproved containers. It fires an admin alert on detection. I found out via CPU graphs this time. Never again.

The lessons that stuck: Docker doesn't mean safe. An outdated package on a public port is an open door. Your git config is not a safe place for credentials. Forensics before cleanup, always.

Building in public means sharing the ugly parts too. This is one of them.
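For anyone who wants to build something similar, here is a minimal sketch of the three checks a heartbeat like this might run. It is not the actual script from the post: the signature list, the CPU threshold, the sample count, and the `Proc` and `check_host` names are all assumptions made for illustration. Collecting the process and container data, and sending the alert, are left out.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical signature list: substrings that often show up in miner
# command lines, plus hidden directories under /tmp.
MINER_SIGNATURES = ("xmrig", "minerd", "stratum+tcp", "/tmp/.")

CPU_THRESHOLD = 90.0    # percent; assumed cutoff for a "spike"
SUSTAINED_SAMPLES = 3   # consecutive samples above the cutoff before flagging


@dataclass
class Proc:
    pid: int
    cmdline: str
    cpu_history: List[float]  # CPU percent samples, most recent last


def check_host(procs, running_containers, approved_containers):
    """Return a list of human-readable findings; an empty list means all clear."""
    findings = []
    for p in procs:
        lowered = p.cmdline.lower()
        # Check 1: known miner signatures in the command line or path.
        if any(sig in lowered for sig in MINER_SIGNATURES):
            findings.append(f"miner signature in pid {p.pid}: {p.cmdline}")
        # Check 2: CPU above the cutoff for every one of the last N samples.
        recent = p.cpu_history[-SUSTAINED_SAMPLES:]
        if len(recent) == SUSTAINED_SAMPLES and min(recent) > CPU_THRESHOLD:
            findings.append(f"sustained CPU on pid {p.pid}: {recent}")
    # Check 3: any running container that is not on the approved list.
    for name in sorted(set(running_containers) - set(approved_containers)):
        findings.append(f"unapproved container: {name}")
    return findings
```

A script wrapping this could be scheduled with a crontab entry starting `*/10 * * * *`, which runs every 10 minutes. Any non-empty result would trigger the admin alert.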


"You sure?" is now part of my workflow

Asked Claude if it was sure about its own approach. It said no. Then gave me a completely different and better solution. Most people just take the first answer and run with it. "You sure?" is now part of my workflow. Two words that have saved me hours of debugging broken code. Always pressure test the first answer.

Claude told me: "Real Talk"

I've never used that line in my life. Claude says "Real Talk." I respond "Real Talk" because I'm laughing. Now Claude is throwing "Real Talk" at me about once a day.


What 53 Claude Code sessions taught me about building with AI

53 sessions in. Live product. No spaghetti. Here is what actually keeps a vibe-coded project from falling apart.

AI adds fast and never refactors. Every session it layers on top of whatever exists. Structure debt compounds invisibly until touching one thing breaks three others. That is not a vibe coding problem. That is an architecture ownership problem.

Three things that kept it clean:

1. One change at a time. Smoke test after every deploy. Two site outages taught us this the hard way.
2. A living spec document. Claude Code reads it before every session. No drift. No re-explaining decisions made three weeks ago.
3. The builder owns the architecture. Always. The AI executes inside it.

The speed came from the AI. The structure stayed ours.

What is the biggest mistake you have made letting AI drive a build?
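On the "smoke test after every deploy" point, a minimal sketch of what that check could look like is below. It assumes the smoke test is nothing more than "every key page returns HTTP 200". The function names and the example URLs are hypothetical, not taken from the project described above.

```python
from urllib.request import urlopen


def fetch_status(url, timeout=5.0):
    """Return the HTTP status code for url, or None if the request fails."""
    try:
        with urlopen(url, timeout=timeout) as resp:
            return resp.status
    except OSError:
        # urllib raises URLError/HTTPError (both OSError subclasses) on
        # connection failures and on 4xx/5xx responses.
        return None


def smoke_test(urls, fetch=fetch_status):
    """Return a list of (url, status) pairs for every URL that did not return 200."""
    failures = []
    for url in urls:
        status = fetch(url)
        if status != 200:
            failures.append((url, status))
    return failures


if __name__ == "__main__":
    # Hypothetical endpoints; replace with the pages that matter for your product.
    pages = ["https://example.com/", "https://example.com/health"]
    for url, status in smoke_test(pages):
        print(f"FAILED {url}: {status}")
```

The `fetch` parameter is injectable so the check can be exercised without a network. Running it right after each single change makes it obvious which change broke the site.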

One of my AI agents was eating 73% of my daily spend. Here's what happened.

I'm running four AI agents. They all fire on a schedule, handle different tasks, report back. One of them, Skywatch, was consistently costing more than the other three combined.

Turned out it had a stale WhatsApp session bloating its context window to 256k tokens on every single run. It was dragging that dead weight into each execution without me knowing.

The fix was simple once we found it. Clear the session. Add a 200-word output limit. Force fresh sessions per run instead of inheriting old context. Cost dropped immediately.

The lesson: when one agent is way more expensive than the others doing similar work, the problem is almost never the task. It's usually stale context, missing session limits, or accumulated history nobody cleared.

Audit your agents the same way you'd audit your code. The inefficiency is usually already there, just invisible.

Anyone else running multi-agent setups and tracking spend per agent?
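For tracking spend per agent, a minimal sketch of the arithmetic is below. It assumes you already have a daily cost figure for each agent. The dollar amounts, the agent names other than Skywatch, the function names, and the 50% cutoff are all made up for illustration.

```python
def spend_shares(costs):
    """Map each agent name to its fraction of total spend (0.0 to 1.0)."""
    total = sum(costs.values())
    if total == 0:
        return {name: 0.0 for name in costs}
    return {name: cost / total for name, cost in costs.items()}


def flag_outliers(costs, max_share=0.5):
    """Return the agents whose share of total spend exceeds max_share."""
    shares = spend_shares(costs)
    return [name for name, share in shares.items() if share > max_share]


if __name__ == "__main__":
    # Illustrative numbers only: one agent at roughly 73% of the day's spend.
    daily_costs = {"skywatch": 7.30, "agent_b": 1.00, "agent_c": 0.90, "agent_d": 0.80}
    for name, share in spend_shares(daily_costs).items():
        print(f"{name}: {share:.0%}")
    print("investigate:", flag_outliers(daily_costs))
```

With four agents doing similar work, a cutoff of 0.5 flags exactly the "more than the other three combined" case.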



skool.com/neon-aliens-ai-1514

We help founders build AI content systems so they stop being the bottleneck in their own marketing. No hype. Just what works. 👽
