
AI-resilient assessment

Designing assessments in the age of AI requires more than just tougher questions; it demands smarter ones. Here’s how I approach AI-resistant assessment design using a topic like photosynthesis.

Instead of asking students to recall definitions, we:

- 🌱 Place them in real-world scenarios (e.g., diagnosing plant growth issues in a greenhouse)
- 🧠 Require explanation, justification, and decision-making, not just answers
- 📊 Use novel data sets for interpretation and experimental design
- 🔄 Build in reflection and error analysis to uncover misconceptions
- 🗣️ Incorporate live or in-class components to capture authentic thinking

The goal isn’t to “outsmart AI”; it’s to elevate what we value in student learning:

- ✔️ Critical thinking
- ✔️ Application of knowledge
- ✔️ Clear communication
- ✔️ Original reasoning

When assessments prioritize these skills, academic integrity becomes a byproduct, not a battle.

Curious how this could look in your classroom? Let’s connect.

#Education #AssessmentDesign #AIinEducation #TeachingStrategies #STEMeducation #AcademicIntegrity

https://chatgpt.com/g/g-NfaHq7dcT-ai-resilient-assessment


Skill Gap Analysis Assistant

https://chatgpt.com/g/g-6981d4f996488191b08dd73125696c19-skill-gap-analysis-assistant

Skill Gap Analysis Assistant
By M Rollins

A GPT that analyses workforce skills against role requirements, identifies skill and evidence gaps, flags risks for critical skills, and produces clear training plans from a single spreadsheet.

I’m your Skill Gap Analysis Assistant, built specifically to help with structured workforce analysis for administrative roles. Here’s exactly what I can do for you:

🔍 1. Analyse Skill Data
- Compare individual skill levels vs role requirements
- Use a consistent 0–4 skill scale
- Calculate:
  - Skill gaps
  - Weighted gaps (based on importance)

📊 2. Produce Structured Reports
For each person (using Person ID by default), I generate an Individual Skill Gap Report:
- Skill-by-skill breakdown including:
  - Target vs current level
  - Gap and weighted gap
  - Evidence status
  - Risk flags (e.g. critical gaps, outdated evidence)

👥 3. Create Team-Level Insights
- Identify:
  - Common skill gaps across the team
  - Critical risk areas
  - Patterns in missing or weak evidence

🧭 4. Build Training & Verification Plans
- 3-month structured plan including:
  - What to train vs what to verify
  - Priority levels (High / Medium / Low)
  - Suggested formats (e.g. practice, review, assessment)
- Focus:
  - Critical skills first
  - Evidence gaps vs capability gaps treated differently

⚠️ 5. Flag Risks Clearly (Without Judgement)
I highlight risks such as:
- Critical skill gaps (≥2 levels)
- Missing assessments
- Low-confidence evidence
- Outdated assessments (over 12 months)

Language is always:
- Neutral
- Factual
- Action-focused

📁 6. Work With Your Data
I can analyse:
- Uploaded spreadsheets
- Skill matrices
- Assessment records

I will:
- Use only skills defined in your Skill Library
- Ignore anything outside that list
- Treat missing data carefully (no assumptions)
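The gap arithmetic described above can be sketched in a few lines of Python. This is only an illustration, not how the GPT itself computes anything. The post does not define the weighted-gap formula, so the sketch assumes `gap = max(target - current, 0)` and `weighted_gap = gap * weight`. The `SkillRecord` fields, the `critical` flag, the reading of "critical gap" as a gap of two or more levels on a skill marked critical, and the 365-day cutoff for "over 12 months" are all assumptions made for the example.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

CRITICAL_GAP = 2              # from the post: critical gaps are >= 2 levels
MAX_EVIDENCE_AGE_DAYS = 365   # assumption: "over 12 months" approximated as 365 days


@dataclass
class SkillRecord:
    """One person's record for one skill (hypothetical structure)."""
    skill: str
    target: int                   # required level on the 0-4 scale
    current: int                  # assessed level on the 0-4 scale
    weight: float                 # hypothetical importance weight
    critical: bool                # hypothetical marker for a critical skill
    assessed_on: Optional[date]   # None means no assessment on record


def analyse(record: SkillRecord, today: date) -> dict:
    """Return the gap, the weighted gap, and neutral risk flags for one record."""
    for level in (record.target, record.current):
        if not 0 <= level <= 4:
            raise ValueError(f"level {level} is outside the 0-4 scale")

    # Assumed formula: exceeding the target counts as no gap, not a negative one.
    gap = max(record.target - record.current, 0)

    flags = []
    if record.critical and gap >= CRITICAL_GAP:
        flags.append("critical gap")
    if record.assessed_on is None:
        flags.append("missing assessment")
    elif (today - record.assessed_on).days > MAX_EVIDENCE_AGE_DAYS:
        flags.append("outdated assessment")

    return {
        "skill": record.skill,
        "gap": gap,
        "weighted_gap": gap * record.weight,
        "flags": flags,
    }


if __name__ == "__main__":
    # Made-up example record, not real data.
    example = SkillRecord("Minute taking", target=3, current=1, weight=1.5,
                          critical=True, assessed_on=date(2024, 1, 10))
    print(analyse(example, today=date(2025, 6, 1)))
```

A missing assessment gets its own flag and is never scored as level 0, which matches the "no assumptions" handling of missing data and keeps evidence gaps separate from capability gaps.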


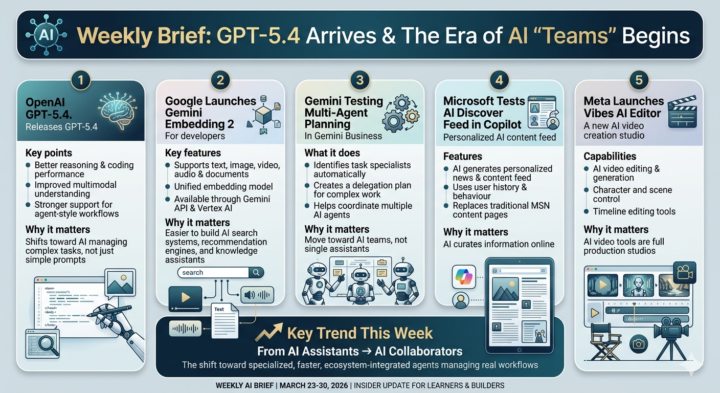

AI Weekly: GPT-5.4 is Here & The Rise of AI Agents

1. OpenAI GPT-5.4 (Flagship & Mini)
- Status: The flagship GPT-5.4 launched on March 5, 2026.
- Latest: Just last week (March 24), OpenAI rolled out GPT-5.4 mini and nano to the API. These are specialized for the "agent-style workflows" mentioned in your brief, specifically for developers who need high-speed coding assistants.

2. Google Gemini Embedding 2
- Status: Officially released in Public Preview on March 10, 2026.
- Current Catch: Developers are currently using this to build "unified" search. Before this, you had to use different models for text and video; now, one model (Embedding 2) handles both, which is a massive win for speed.

3. Meta "Vibes" & "Avocado"
- Status: Vibes was quietly pushed to mobile app stores on March 19, 2026.
- Insight: It’s being positioned to compete directly with TikTok’s CapCut. Also, as of yesterday (March 29), leaks confirmed Meta is testing the Avocado (text) and Mango (video/image) models internally to power the next version of Vibes.

4. Microsoft Copilot "Cowork"
- Status: This is the most recent "big" update, with major rollout details appearing as recently as March 24–30.
- Key Detail: It’s moving Copilot from a "sidebar" to a "background agent." It can now resolve calendar conflicts and draft project plans while you aren't even looking at the screen.

What to watch in the next 7 days:
- Google I/O 2026 Previews: Watch for more "Multi-agent planning" features moving from "Testing" to "Beta" for Gemini Business users.
- Sora's Pivot: There are reports today that OpenAI may be winding down standalone video products (like the Sora app) to focus entirely on "World Simulation" for robotics.


AI Weekly Brief – Latest AI Updates

March 10 – March 17, 2026

1. Mistral Releases "Mistral Small 4" (Apache 2.0)
While everyone was distracted by GPT-5.4, Mistral dropped a massive 119B parameter model yesterday (March 16) that changes the math for enterprise AI.

Key points
- 40% Latency Reduction: Optimized for "Small" hardware but performs at "Large" model levels.
- Fully Multimodal: Native support for text-to-image and document understanding built-in.
- Apache 2.0: Unlike GPT-5.4, you can host this yourself, modify it, and own your data.

Why it matters
It provides a high-performance alternative to OpenAI for companies that cannot send their sensitive data to a 3rd party cloud but still need "Agent-grade" reasoning.

2. OpenAI "Sora 1" Sunset & Sora 2 Rollout
On March 13, OpenAI officially retired the original Sora 1 in favor of Sora 2, which is now being integrated directly into the ChatGPT interface.

Key points
- Native 4K Generation: Clips have moved from 720p to native 4K with significantly better physics.
- Audio-to-Video Sync: You can now upload a voice track, and Sora will generate a character that lip-syncs to it perfectly.
- Pro Tier Exclusive: Access is being tightened; "Sora 2" now requires a Pro or Team subscription for high-resolution output.

Why it matters
The era of "one-off AI clips" is over. Sora 2 is designed to be a professional asset generator for marketers and YouTubers, not just a playground toy.

3. Apple Intelligence: "Visual Screen Intelligence" Beta
Apple quietly expanded its AI beta on March 13, adding the feature we’ve been waiting for: Cross-App On-Screen Actions.

Key points
- Contextual "See & Do": Siri can now "see" a tracking number in a text and automatically open the delivery app to track it.
- Short-Term Memory: You can ask "What was that address Eric sent?" and it will search across Mail, WhatsApp, and Notes simultaneously.
- Private Cloud Compute: All complex processing happens on Apple’s "black box" servers, keeping your data private.

Why it matters
This is the "Agent" experience for the masses. It doesn't require a prompt; it just understands what you are doing on your phone.

