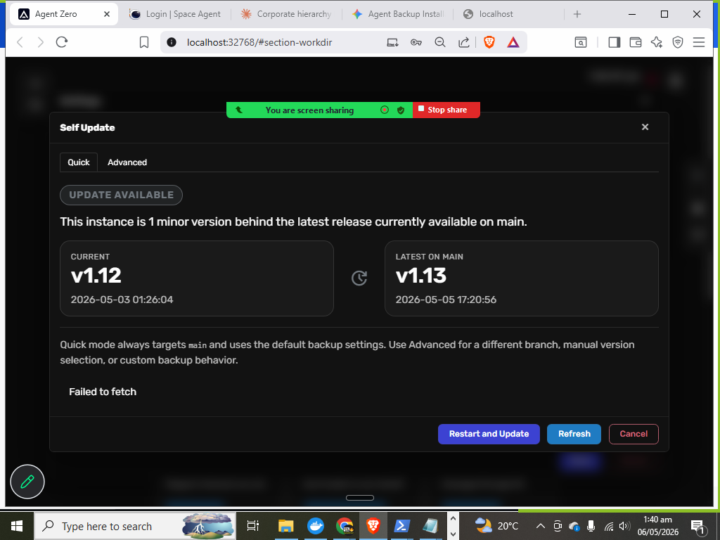

What's up everybody, this is the error that I am receiving. It will not fetch.

Greetings everyone. Can anyone help me with this error? It's not fetching the update from 12 to 13. I have also wiped and refreshed the image. It runs locally on my PC, and it's not updating to v1.13. Has anyone had the same problem? Please let me know what the solution was or what the problem could be. I'd really appreciate it.

I'm a die-hard Agent Zero fan from the beginning. I need help restoring my backups.

Hi there everybody, I'm Digital King. I make content and build apps. I've been using Agent Zero for a very long time now, since before the two companies merged. I build apps every day and have over 53 of them. I also use the new Closed Mythos with my Agent Zero. I've had trouble upgrading from version 9 (1.9, I think it is) all the way to 13. I had to delete and re-pull the image to get 12, and now it's doing the same thing: it won't go to 13. I did all of this today. My question is, how do I upload my backups? Before, there was a place where you could go and restore from a backup. I don't see that option in the new layout. If somebody can help me, it would be greatly appreciated. Thank you all for your help and your kindness.

Can you use your Agent Zero Token API with Space Agent?

Can we use the API key from our Agent Zero Token API dashboard with Space Agent? I haven't been able to get it to work.

I need help to install Agent Zero

Hello everyone. I need help to install Agent Zero AI. If someone would be kind enough to help, I would be grateful. Here is the install command: `irm https://ps.agent-zero.ai | iex`

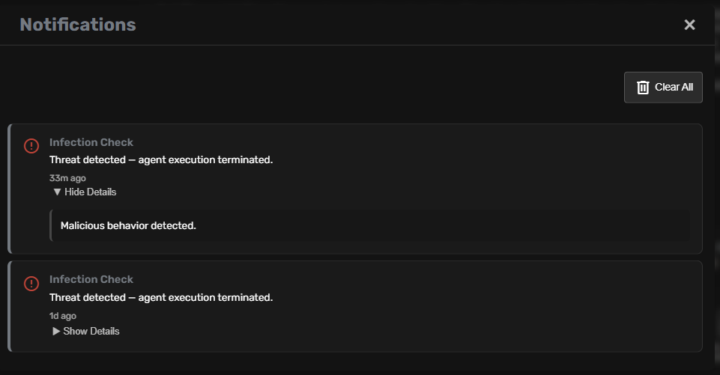

How to get rid of "Infection check: TERMINATED"?

How do I get rid of "Infection check: TERMINATED"? Should I keep everything in the "secret store"? Even then, it recalls the secret and fails to use it. Can I bypass it somehow?
