
Owned by Rachel

AI for non-tech professionals: understand the language, confidently evaluate what’s real, and position yourself to stay relevant and ahead of the curve

Memberships

The AI Advantage

124.1k members • Free

Clief Notes

35.3k members • Free

AI Automation Society

386k members • Free

Free Skool Course

69.2k members • Free

Skoolers

183.9k members • Free

11 contributions to AI Without The Hype

👋 Welcome!

Hi everyone, I’m Rachel :) I’m a doctor and entrepreneur by background, now working in health leadership and innovation, and like many of you… I’ve spent years hearing about AI everywhere, feeling lost in the hype, anxious about the future and overwhelmed by the constant stream of information. That’s where this space came from.

I’ve partnered with Bassam (AI expert, builder with a PhD in machine learning, and the person who actually understands the deep layers of this technology 😄) to create something simple:

👉 A place to understand AI without the hype
👉 A place to ask questions and get real answers from real humans
👉 A place to figure out how this actually applies to your work
👉 And a place to start using it in a way that genuinely helps

A lot of what’s out there is either very technical or very abstract and not useful. This space sits in the middle. It’s especially relevant for people working in healthcare, public services, or complex organisations, but if you work anywhere that feels a bit busy, layered, or inefficient, you’ll recognise a lot of what we talk about here.

At its core, we will help you use AI to reduce unnecessary work, so more of your time and energy goes where it actually matters.

A quick ask 🙏🏼 Drop a comment below and tell us:
1️⃣ What do you do?
2️⃣ What’s one thing about AI that confuses or worries you?
3️⃣ What are you hoping to get out of this course?

We’ll be in here with you along the way, answering questions, sharing ideas, and supporting each other. Now go ahead and say hello! We would love to hear from you!

Rachel & Bassam

You don’t need to learn 50 AI tools

✨You just need to understand enough to use it in your actual work/life!✨

We’ve had a wave of new people in here, so hello 👋🏽 If you’ve just joined and you’re thinking “ok… now what?” 🤔 you’re exactly where you’re meant to be.

Most of what’s out there either overcomplicates things, makes it sound like you need to become a data scientist overnight, or overwhelms you with the gazillion tools you feel you should know 🫠 You don’t. You just need to understand the basics of AI to use it in your actual work.

I’ve dropped a short clip below from the course on computer vision 👇🏽 It’s one of those moments where things start to shift: you stop seeing AI as this vague, abstract thing… and start recognising what it’s actually doing.

The full course is here in Skool if you want to go further. Dip in, skip around, come back to it: it’s all yours! If you’re a founding member, you’ve got access. ‼️That won’t be the case forever, so if someone comes to mind who’d benefit, bring them in‼️

And before you disappear into the content, say hello 👋🏽 What do you do, and what made you join? Always interesting to see who’s in the room 😃

Rachel

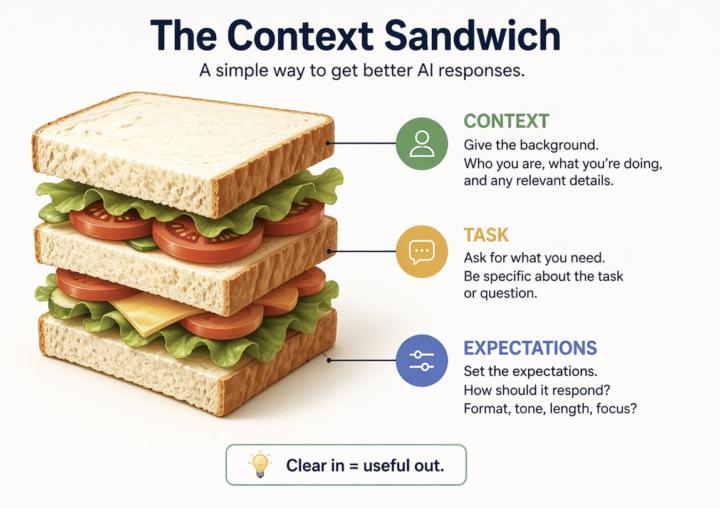

The Context Sandwich

✨Quick tip that will make a big difference to the quality of the responses you get from AI.

Most people use AI like this:
“Summarise this”
“Write this”
“Help with this”

The answer you get is usually… fine! Fine… but not that useful, because what you’re missing is context.

🗣️Context (first) → (then) Ask → (make sure you) Shape the response

‼️This means you need to tell AI:
- What you are doing
- What you need
- How you want it back

Think of it like briefing a colleague. If you’re vague, you will get something vague back. If you’re clear, the tools become much more useful.

Example: “I’m preparing for a meeting with X as a [your role]. From this document, what are the key points I need to understand? Focus on decisions, risks and anything I’d need to act on. Keep it brief.”

Try it out and let us know in the comments what changed for you!
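For anyone who likes to keep reusable prompts in a script, here is a minimal sketch of the Context → Ask → Shape order as a tiny Python helper. The function name `context_sandwich` is hypothetical, and it doesn’t talk to any AI tool: it only assembles the text you would paste into one, using the example prompt from the post.

```python
def context_sandwich(context: str, ask: str, shape: str) -> str:
    """Assemble a prompt in the order: context first, then the ask, then the response shape."""
    # Blank lines between the three parts keep each layer easy to read and edit.
    return "\n\n".join([context.strip(), ask.strip(), shape.strip()])


prompt = context_sandwich(
    context="I'm preparing for a meeting with X as a [your role].",
    ask="From this document, what are the key points I need to understand?",
    shape="Focus on decisions, risks and anything I'd need to act on. Keep it brief.",
)
print(prompt)
```

Keeping the three parts as separate inputs makes it hard to forget one of them, which is the whole point of the sandwich.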

The 4 parts of a good Copilot prompt

Happy 50+ members!!! 🎉🎉🎉 Huge thank you to all of you early founding members! It’s been great seeing you join, explore, comment and share 🙏

I know quite a few of you are using Copilot, so here’s a quick bonus tip for when you’re writing prompts:

💡Always include:
1. A GOAL: What are you trying to get out of it?
2. The CONTEXT: What are you working on / why does it matter?
3. The SOURCES: Where should it look? (e.g. emails, Teams chats, documents, websites)
4. Your EXPECTATIONS: How should it respond?

➡️Quick example:
Goal: generate 3–5 bullet points
Context: preparing for a meeting with Manager X to update them on work progress
Sources: focus on emails and Teams chats since June (if you want me to show you how to access your emails/chats, comment on this post!)
Expectations: use simple language so I can get up to speed quickly

It sounds simple, but it makes a big difference. 👇Let me know if you want more of these! And if you know someone who would find these tips useful, 🗣️ invite them over!
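If you reuse the four-part structure often, a small template can make sure no part gets skipped. This is a minimal sketch under assumptions: the `CopilotPrompt` class is hypothetical, and the one-labelled-line-per-part layout is just one way to write it out (Copilot doesn’t require this exact format). It only builds the text; you would still paste it into Copilot yourself.

```python
from dataclasses import dataclass


@dataclass
class CopilotPrompt:
    """Hypothetical container for the four parts: goal, context, sources, expectations."""
    goal: str
    context: str
    sources: str
    expectations: str

    def render(self) -> str:
        # One labelled line per part, in the same order as the tip.
        return "\n".join([
            f"Goal: {self.goal}",
            f"Context: {self.context}",
            f"Sources: {self.sources}",
            f"Expectations: {self.expectations}",
        ])


prompt = CopilotPrompt(
    goal="Generate 3-5 bullet points",
    context="I'm preparing for a meeting with Manager X to update them on work progress",
    sources="Focus on emails and Teams chats since June",
    expectations="Use simple language so I can get up to speed quickly",
)
print(prompt.render())
```

Because all four fields are required, the class refuses to build a prompt with a missing part, which mirrors the “always include” advice.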

3 likes • Apr 16

@Sean McLoughlin good question, and I do! While the principles remain largely the same, there are differences in delivery that depend on really “small” factors, and also on the type of prompt. Instruction-based prompts, regardless of their detailed structure, deliver replicable results across the board. Role-based prompts, however, benefit from slightly different structures depending on the LLM. And of course, models also vary in how they respond to things other than structure. For example, Copilot is more sensitive to formatting and grammar than other LLMs, while Claude responds reasonably well regardless. @Bassam Farran don’t know if your thoughts are similar?! What’s been your experience, @Sean McLoughlin?

2 likes • Apr 17

@Sean McLoughlin yes, agree, in that clear instructions narrow the possible outputs, so you get more consistency across all models. Introducing a role, however, will automatically introduce variation, as the prompt pulls in a broader set of learned patterns. I think with Copilot it’s a case of both. Take what makes Copilot the tool it is, for example (i.e. the GPT variant from OpenAI, the system prompts, retrieval instructions, safety filters etc.). Part of it will be the tuning of the model and the rest is all the hidden backstage stuff. And context layering is the most interesting part to me. I haven’t tested it myself, but others have, mostly in academic settings. You may be interested in the paper “Lost in the Middle” (Liu et al., 2024), which shows that LLMs can fail to retrieve information from the middle of long inputs! We’ve relied on some of this early knowledge when designing our prompts. Hard to keep up with all the rapid changes, but I feel it’s a good place to start 😊

NEW GUIDE + Resources and Tools!

💡Quick update!! Following the poll last week, you asked for a guide on “How to make ChatGPT sound like you”, and I’m happy to let you know it’s now live! 🎉

➡️There’s a new folder in the classroom: “Working with AI”. You’ll find the resources you need inside.

It’s become very easy to start sounding slightly… off when using AI. The guide is here to help with that. There’s a downloadable PDF version with full explanations, and the prompts have also been added to the classroom so you can copy and paste them.

Also… I saw the votes for making sense of complex policies. That one clearly isn’t going away, so I’ll work on that next! 😄

Have a look when you get a chance, and let me know what makes sense and what doesn’t 🙏


@rachel-dbeis-9592

Doctor and healthcare leader collaborating with an AI engineer and tech leader to help professionals make sense of AI in medicine, healthcare and beyond

Active 1d ago

Joined Mar 15, 2026