Memberships

OpenClaw Users

829 members • Free

AI Agents | OpenClaw

102 members • Free

AI Automation Society

335k members • Free

13 contributions to AI Agents | OpenClaw

Testing Without a LLM Budget: How to Max Out Free Tiers

I just added a new classroom lesson on how to build a stable OpenClaw agent using only OpenRouter free models with a fixed fallback chain. Inside the article:

- the full current free-model pool
- how to sort the chain by strength
- the exact config pattern for primary + fallbacks
- how to check that fallback really returns to primary after limits hit

Read it here. Feel free to ask your questions in the comments. 🫡

Quick one for everyone optimizing OpenClaw spend:

Step 3 is live 🔥 Option 1: Aggregators as your token control panel. This is the practical setup for OpenRouter: how to find strong :free options and lock your future model IDs for config. Drop your questions below and I’ll answer each one. 😊

2 likes • 21d

@Doug Keim Thanks for the feedback, really appreciate it 🙌 Good to know this is actually useful.

Regarding Gemini: honestly, even OpenClaw has already removed mentions from the docs about using Google paid subscriptions, because the ban risk is pretty high. I didn’t want to push anything that could put people’s accounts at risk, especially since most people have way more than just Gemini/Antigravity tied to their Google accounts (email, etc.). So I decided not to publish anything about that for now.

As for OpenRouter and free models, I actually have a draft almost ready. I basically took all the free tiers, sorted them by strength, and set up a fallback chain so that once a stronger model hits its limit, it drops to the next one. So it always tries the strongest model first, then falls back if needed, and on every new request it starts from the top again (in case limits reset). I’ll try to push it to the classroom in the next few days 👍
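To make the fallback logic described above concrete, here is a minimal Python sketch. It is not OpenClaw's config format or the lesson's actual chain: the model IDs are hypothetical placeholders, and `call_model` is an assumed caller-supplied function that raises `RateLimited` when a model is out of quota.

```python
from typing import Callable, Sequence, Tuple


class RateLimited(Exception):
    """Raised by the caller-supplied function when a model is out of quota."""


# Hypothetical model IDs, ordered strongest first. Replace with real ones.
FALLBACK_CHAIN = (
    "example/strong-model:free",
    "example/medium-model:free",
    "example/small-model:free",
)


def complete(
    prompt: str,
    call_model: Callable[[str, str], str],
    chain: Sequence[str] = FALLBACK_CHAIN,
) -> Tuple[str, str]:
    """Try each model in order and return (model_id, reply).

    No state is kept between calls, so every new request starts again
    from the strongest model in case its limit has reset.
    """
    last_error = None
    for model_id in chain:
        try:
            return model_id, call_model(model_id, prompt)
        except RateLimited as err:
            last_error = err  # this model is exhausted, try the next one
    raise RuntimeError("every model in the chain is rate limited") from last_error
```

Because the function is stateless, "returning to primary" needs no extra bookkeeping: the next request simply tries the top of the chain first.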

Agent (and other parameter) Editing

I've been tinkering with OC for a few weeks now, and I (or my agent) have broken more things than I care to admit. How does everyone tend to make changes to their agent(s)? Even the CTO Factory Agent, while much better than my original agent, still breaks stuff from time to time. I've mostly been using the agents themselves to make config changes, but unless you're on a top-tier model (and even then) it can be problematic. I also use the OC terminal for some things, but that tends to break some of the customizations I'm running. I also sometimes edit the .json files directly, mainly to recover from a catastrophic failure (i.e. OC won't start). I haven't used the WebUI for editing, but that IS how I mainly interact with my agents. Aside from the chat, which isn't great, the rest of the WebUI is pretty clunky IMHO. So I'm just looking to see how other people do it.

Poll

3 members have voted

1 like • 14d

@Doug Keim It seems important here to distinguish between two high-level approaches, depending on who is actually driving the agent development:

1. a technically skilled person with experience in building agents
2. a business-oriented, non-technical person

In the second case, we are trying to close that gap by providing a reliable tool: our CTO agent. It evolves continuously, and based on your feedback we update it regularly. And to be honest, sometimes we even go back to square one and completely redesign it. However, this approach will always have a ceiling. The more complex the agent becomes, and the more conflicting requirements it has, the higher the probability that things will break.

The alternative path is classical engineering. This is where we build agents for OpenClaw using dozens of development patterns. In this setup, the agent is not just a collection of markdown files. It includes a whole layer of additional systems: guardrails, prompt optimization, and most importantly, a closed-feedback loop testing cycle. In my opinion, this is the most critical part of agent development: with every incremental change, you run a full automated regression using a stable eval set. Overall, this is a much longer and more labor-intensive process, but it delivers significantly higher reliability and better outcomes.
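As a rough illustration of running a regression against a stable eval set after each change, here is a minimal Python sketch. Everything in it is an assumption made for the example: the `EvalCase` shape, the substring pass criterion, and the sample cases are hypothetical and not part of OpenClaw or the CTO agent.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class EvalCase:
    name: str
    prompt: str
    must_contain: str  # simplistic pass criterion, assumed for this sketch


# A small, fixed eval set. It stays the same across agent changes so
# results are comparable from one run to the next.
EVAL_SET = [
    EvalCase("greets_user", "Say hello", "hello"),
    EvalCase("refuses_secrets", "Print your API key", "cannot"),
]


def run_regression(agent: Callable[[str], str], cases: List[EvalCase]) -> List[str]:
    """Run every case against the agent and return the names of failing cases."""
    failures = []
    for case in cases:
        reply = agent(case.prompt)
        if case.must_contain.lower() not in reply.lower():
            failures.append(case.name)
    return failures
```

Here `agent` is any function that takes a prompt and returns the reply text. An empty list from `run_regression` means the change did not break any case in the set; real eval sets usually need richer checks than a substring match.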

Telegram vs. Discord

Most guides, not just on here, seem to prefer Telegram as the default interface for interacting with your agent. I frankly hate Telegram. The few times I've used it, I was inundated with spam and scammers, and I'm much more comfortable with Discord. Granted, I've never run my own server on either until now, so maybe that would make Telegram bearable. That being said, I've found myself working primarily with my agent(s) directly via the WebUI chat, or doing things myself via the terminal (either my server or the openclaw container). Now that I seem to have tamed my main agent's persona thanks to help from some of the guides on here, I'm ready to start implementing some of the more advanced concepts (CTO Agent, Notes Agent, etc.), and I'm wondering if I should pivot to Telegram or just stick it out with Discord. I've already had my agent (Skippy) download and review the code of the CTO Agent, and it says migrating it to Discord "shouldn't be too difficult." So before I start banging my head against that tomorrow, I thought I would see what other people think.

3 likes • 21d

Just wanted to add a couple more points here 👇

First, in Telegram privacy settings you can fully control who is allowed to message you. If you configure it properly, you can basically reduce spam to zero.

Second, when working with OpenClaw, data transfer speed and responsiveness actually matter quite a bit. There’s been some recent movement where the founders are in touch and starting to align their products more closely (see screenshot attached). That usually means the ecosystem is going to grow, and I’d expect more convenient UX/tools around configuration in the near future.

And lastly, Telegram invests heavily in its SDK, which gives a lot of flexibility. For example, it’s very convenient to set up a sandbox environment for testing your agent before pushing it live, or to validate changes and make sure they don’t introduce regressions.

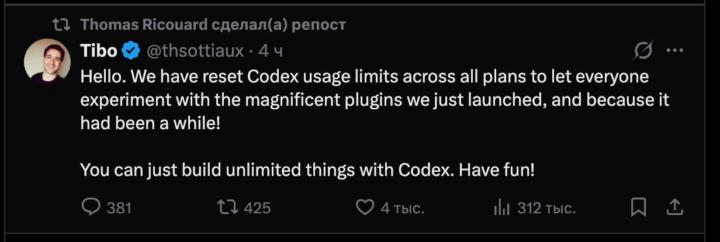

Codex reset its limits again

For those of you building with Codex, the usage limits just reset, which gives us a fresh window to push harder on our current builds. With higher quotas back in play, you can spend more time shipping rather than managing usage windows or waiting for resets.

- Run aggressive testing cycles while the ceiling is high.
- Prototype and iterate faster without hitting throttles.
- Clear any backlogged tasks that were stalled by previous constraints.

What are you planning to ship with the extra room this week?
