Owned by James

Using AI expertly, effectively and safely by connecting AI, Cybersecurity, Project Management and Governance into a disciplined framework.

Memberships

42 contributions to ThisLocale

Foundations of AI & Cybersecurity - Lesson 36: Module/Chapter 2.6.2 Scenario on Identifying the Attack Indicators

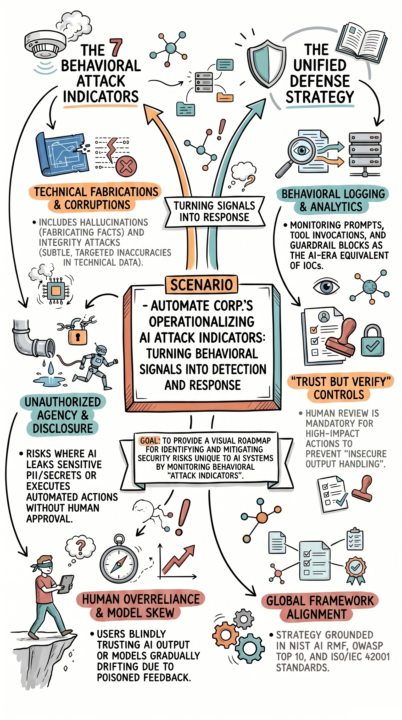

AI attacks don’t announce themselves. They surface as small behavioral signals that look harmless until they compound into real damage.

Your team struggles because it is not actively monitoring for AI-specific attack indicators across outputs, actions, and system behavior.

Today’s scenario lesson shows and explains this: Automate Corp.’s Operationalizing AI Attack Indicators: Turning Behavioral Signals into Detection and Response

Hallucinations, output manipulation, data leakage, insecure execution, excessive autonomy, human overreliance, and model drift are not isolated issues. They are detection signals that must be logged, monitored, and acted on in real time.

This matters because without a structured monitoring program, these early warning signs are missed, allowing attackers to manipulate systems, extract data, or degrade model performance without detection.

If you’re responsible for AI, security, project management, governance, or technology decisions, this is where awareness becomes defense and defense becomes control.

#AI #Cybersecurity #AIProjectManagement #AIGovernance #AISecurity #AICybersecurity


Foundations of AI & Cybersecurity - Lesson 35: Module/Chapter 2.6.1 Identifying the Attack Indicators

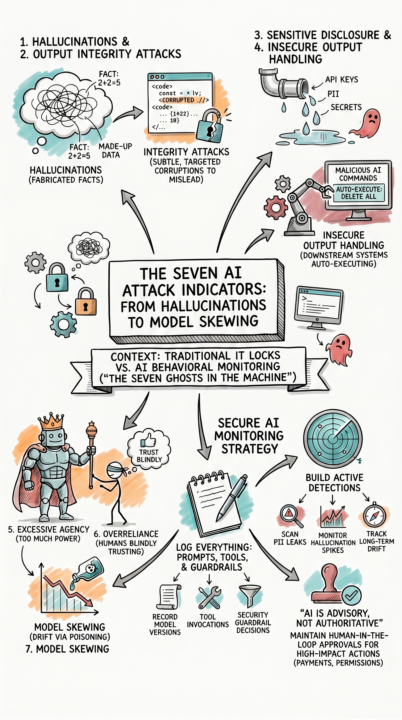

Most AI breaches don’t look like breaches at all. They show up as subtle changes in behavior that teams miss until it’s too late.

Most teams don’t struggle because they lack tools. They struggle because they don’t know what signals actually indicate an AI attack or failure.

Today’s module shows and explains this: The Seven AI Attack Indicators: From Hallucinations to Model Skewing

Hallucinations. Output manipulation. Data leakage. Insecure execution. Excessive autonomy. Human overreliance. Model drift.

This matters because these signals are the AI equivalent of early-warning indicators, and if you are not actively monitoring for them, you are operating without a security watchtower.

If you’re responsible for AI, security, project management, governance, or technology decisions, this is where detection begins and control becomes possible.

#AI #Cybersecurity #AIProjectManagement #AIGovernance #AISecurity #AICybersecurity


Foundations of AI & Cybersecurity - Lesson 34: Module/Chapter 2.5.8 Scenario on Auditing Model Output for Risks

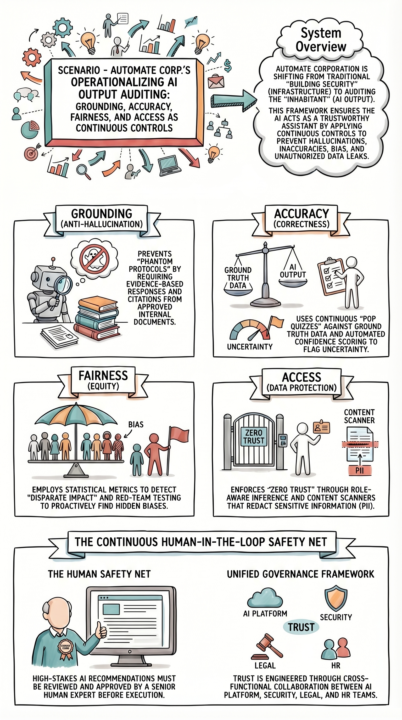

Organizations think auditing AI outputs is a final checkpoint. In reality, it is a continuous control that determines whether AI can be trusted at all.

Your teams struggle because they don’t enforce output auditing as an ongoing, integrated discipline across systems, data, and users.

Today’s scenario lesson shows and explains this: Automate Corp.’s Operationalizing AI Output Auditing: Grounding, Accuracy, Fairness, and Access as Continuous Controls

This matters because without continuous auditing, a single output can introduce security vulnerabilities, leak sensitive data, create bias, or drive incorrect decisions at scale.

If you’re responsible for AI, security, project management, governance, or technology decisions, this is where AI shifts from risk to reliable capability.

#AI #Cybersecurity #AIProjectManagement #AIGovernance #AISecurity #AICybersecurity


Foundations of AI & Cybersecurity - Lesson 33: Module/Chapter 2.5.7 Audit Model Output for Risks

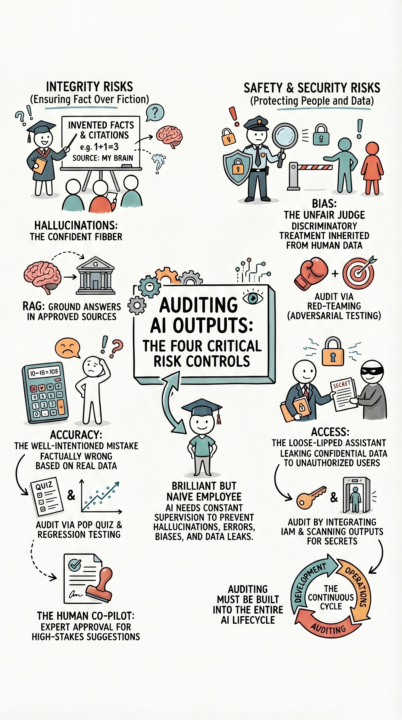

Most AI failures don’t come from hackers breaking in. They come from the system confidently producing the wrong output. The real attack surface in AI is what it says, not just how it’s built. If you are not auditing outputs, you are not securing AI.

Most teams don’t struggle because they lack tools. They struggle because they don’t continuously audit for hallucinations, accuracy failures, bias, and unauthorized data exposure.

Today’s lesson shows and explains this: Auditing AI Outputs: The Four Critical Risk Controls (Hallucination, Accuracy, Bias, Access)

This matters because a single hallucinated answer, biased decision, or data leak can create financial loss, regulatory exposure, and immediate loss of trust.

If you’re responsible for AI, security, project management, governance, or technology decisions, this is where AI moves from experimental to enterprise-ready.

#AI #Cybersecurity #AIProjectManagement #AIGovernance #AISecurity #AICybersecurity


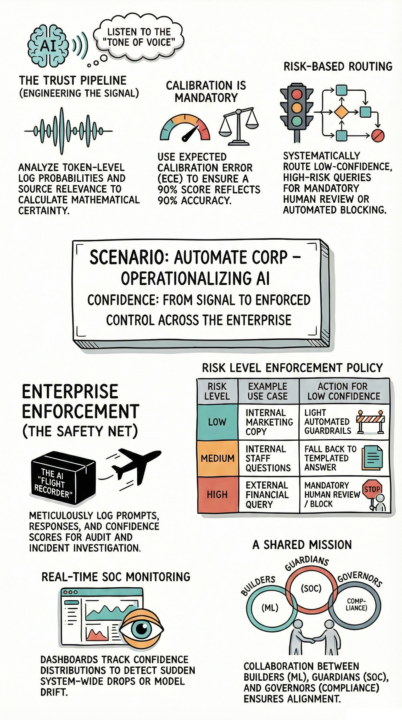

Foundations of AI & Cybersecurity - Lesson 32: Module/Chapter 2.5.6 Scenario on Analyzing Model Behavior

Organizations can’t treat AI confidence as just a metric. In reality, it is the control that determines whether AI decisions should be trusted, reviewed, or stopped.

Most teams struggle because they don’t operationalize confidence into enforceable policies, workflows, and governance.

Today’s scenario example shows and explains this: Automate Corporation is Operationalizing AI Confidence: From Signal to Enforced Control Across the Enterprise

This example is important because without calibrated thresholds, logging, and policy-driven routing, AI will either act too confidently when it shouldn’t or hesitate when action is required, creating both risk and missed opportunities.

If you’re responsible for AI, security, project management, governance, or technology decisions, this is where confidence becomes action, and action becomes trust.

#AI #Cybersecurity #AIProjectManagement #AIGovernance #AISecurity #AICybersecurity


@james-dutcher-6548

Expertly using AI by incorporating Cybersecurity, Project Management, and Governance

Joined Jan 2, 2026

ENTJ

Endicott, NY 13760