Memberships

AI Accelerator

18.2k members • Free

Marketer Courses (by: CEO Lab)

1.7k members • Free

The Gemini AI Mastery Lab

49 members • Free

AI Automation Society

329.1k members • Free

AI READY Skool™

30 members • Free

Wizard Ai Video

1.4k members • $49/month

AI Freedom Launch Accelerator

2.8k members • Free

Marketing & Ai Academy

1.4k members • Free

Ai Filmmaking

6.9k members • $5/month

1 contribution to AI Builders Lab

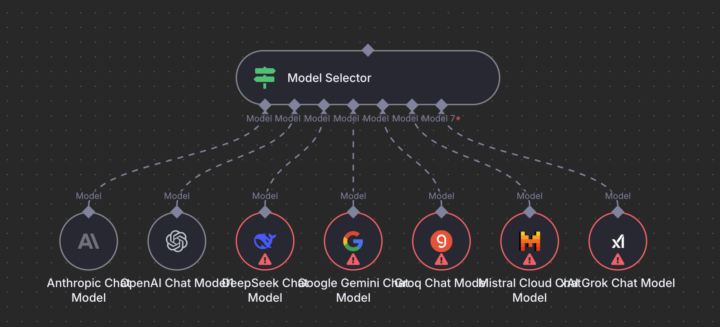

n8n Model Selector

Just found out that n8n released a new node called "Model Selector" last week! It lets you pick a chat model based on conditions. This has several benefits:

- Optimization: Route tasks to the most cost-effective or fastest model depending on your needs, to save on API costs or improve response times.
- Resilience: Automatically fall back to alternative models if your primary service is down or rate-limited, so your workflows stay robust.
- Experimentation: Easily A/B test different models and compare outputs, helping you find the best fit for your use case.

Track release updates here: https://github.com/n8n-io/n8n/releases
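To make the routing idea concrete, here is a minimal Python sketch of condition-based model selection with a fallback. It is not the n8n node's implementation or configuration format, just the general pattern. The `Rule` class, the `select_model` function, the model names, and the "first matching rule wins" ordering are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Rule:
    """One routing rule: use `model` when `condition(task)` is true (hypothetical)."""
    model: str
    condition: Callable[[dict], bool]


def select_model(task: dict, rules: list, unavailable: Optional[set] = None) -> str:
    """Return the first model whose rule matches and which is not marked unavailable."""
    unavailable = unavailable or set()
    for rule in rules:
        # Skip models that are down or rate-limited, then test the condition.
        if rule.model not in unavailable and rule.condition(task):
            return rule.model
    raise LookupError("no available model matches this task")


# Hypothetical model names; the last rule is a catch-all that doubles as the fallback.
RULES = [
    Rule("large-model", lambda t: t.get("complexity") == "high"),
    Rule("small-model", lambda t: True),
]

print(select_model({"complexity": "high"}, RULES))  # large-model
print(select_model({"complexity": "low"}, RULES))   # small-model
# Primary model marked unavailable, so the catch-all takes over.
print(select_model({"complexity": "high"}, RULES, unavailable={"large-model"}))  # small-model
```

Putting the cheap catch-all rule last covers both the cost and the resilience points: simple tasks never reach the expensive model, and hard tasks still get an answer when the expensive model is unavailable.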

@felix-rodgers-4735

ASPC + AI-Native Change Agent building EvolvedAgile, helping businesses adopt AI + SAFe, improve flow, win outcomes. Here to learn, network & grow.

Joined Aug 16, 2025

Lubbock, Texas