Memberships

The AI Skills-Forge

86 members • Free

AI Automation Base

43 members • Free

AI Created This

1.6k members • $7/month

Claude Code Architects

1.1k members • Free

The AI Builder Lab

154 members • Free

AI Creator Labs

786 members • Free

AI Ads Mastery

1.7k members • Free

FundFlowMastery Starter

175 members • Free

Project Biohacked

12k members • Free

6 contributions to MindX Academy

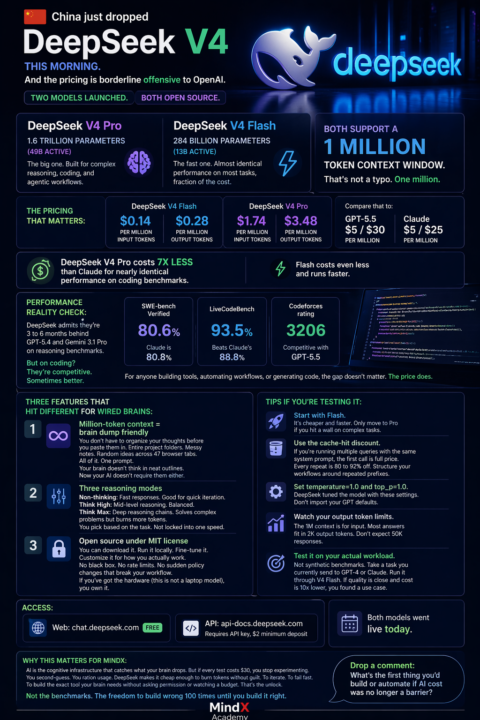

DeepSeek V4 Dropped

China just dropped DeepSeek V4 this morning. And the pricing is borderline offensive to OpenAI.

Two models launched. Both open source.

DeepSeek V4 Pro: 1.6 trillion parameters (49B active). The big one. Built for complex reasoning, coding, and agentic workflows.

DeepSeek V4 Flash: 284 billion parameters (13B active). The fast one. Almost identical performance on most tasks, fraction of the cost.

Both support a 1 million token context window. That's not a typo. One million.

The pricing that matters (per million tokens):

Flash: $0.14 input, $0.28 output

Pro: $1.74 input, $3.48 output

Compare that to GPT-5.5 at $5/$30 per million. Or Claude at $5/$25. DeepSeek V4 Pro costs about 7x less than Claude on output tokens (and about 3x less on input) for nearly identical performance on coding benchmarks. Flash costs even less and runs faster.

Performance reality check: DeepSeek admits they're 3 to 6 months behind GPT-5.4 and Gemini 3.1 Pro on reasoning benchmarks. But on coding? They're competitive. Sometimes better.

SWE-bench Verified: 80.6% (Claude is 80.8%)

LiveCodeBench: 93.5% (beats Claude's 88.8%)

Codeforces rating: 3206 (competitive with GPT-5.5)

For anyone building tools, automating workflows, or generating code, the gap doesn't matter. The price does.

Three features that hit different for wired brains:

1. Million-token context = brain dump friendly

You don't have to organize your thoughts before you paste them in. Entire project folders. Messy notes. All of it. One prompt. Your brain doesn't think in neat outlines. Now your AI doesn't require them either.

2. Three reasoning modes

Non-thinking: Fast responses. Good for quick iteration.

Think High: Mid-level reasoning. Balanced.

Think Max: Deep reasoning chains. Solves complex problems but burns more tokens.

You pick based on the task. Not locked into one speed.

3. Open source under MIT license

You can download it. Run it locally. Fine-tune it. Customize it for how you actually work. No black box. No rate limits. No sudden policy changes that break your workflow.
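If you want to see what those prices mean for a real job, here is a rough Python sketch. The per-million-token prices are copied straight from the post and have not been checked against any official price list. The `job_cost` helper and the 800k-in / 20k-out workload are made up for illustration, so swap in your own numbers.

```python
# USD per million tokens as (input, output), copied from the post above.
# Assumption: these figures are as quoted; they are not independently verified.
PRICES = {
    "DeepSeek V4 Flash": (0.14, 0.28),
    "DeepSeek V4 Pro": (1.74, 3.48),
    "GPT-5.5": (5.00, 30.00),
    "Claude": (5.00, 25.00),
}


def job_cost(model, input_tokens, output_tokens):
    """Return the USD cost of one job at the quoted per-million-token prices."""
    input_price, output_price = PRICES[model]
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


# Hypothetical workload: an 800k-token brain dump in, a 20k-token answer out.
for model in PRICES:
    print(f"{model}: ${job_cost(model, 800_000, 20_000):.4f}")
```

The ratio between two models depends on your mix of input and output tokens: input-heavy jobs (big pastes, short answers) land closer to the input price gap, while output-heavy jobs land closer to the output price gap.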

Go F*ck Stuff Up

Stop Reading. Start Breaking. 🛠️

Here's your challenge this week: Block out 30 minutes on your calendar every day. Lock it in. Then use those 30 minutes to actually PLAY with your tools.

Not watch a tutorial. Not read documentation. Not bookmark "10 best practices" for later.

Actually open the thing and start clicking around. Try building something. Test a workflow. See what happens when you push buttons you're not sure about.

You will learn 10x more in 30 minutes of hands-on experimentation than in 3 hours of passive consumption.

And yeah, you're probably going to break something. That's the point. Break it. Figure out how to unbreak it. Break it differently. Learn what makes it tick.

The best developers, the best creators, the best problem-solvers didn't get there by being afraid to f*ck stuff up. They got there by f*cking stuff up repeatedly until they understood the system.

Your tools won't explode. Your computer won't catch fire. You can always undo, reset, or start over.

So stop overthinking it. Stop waiting until you "know enough." Put 30 minutes on your calendar and just dive in.

See you in there. 🚀

🚀 It’s Official: My Book Is LIVE

My new book just went live on Amazon, and you’re the first people I wanted to tell.

👉 Grab it here: https://a.co/d/0gmAtK4c

Here’s how you can help this book take off:

Grab a copy on Amazon: https://a.co/d/0gmAtK4c

Leave an honest review after you read it (this matters a lot for visibility).

Share the link or a screenshot on your socials and tag me.

Thank you for being here from the beginning. This book is just the start of what we’re building together.

*** There is a resource app built to accompany the book. You'll find it in the Classroom section.

Small Victories Add Up

Building a strong foundation of knowledge takes time, patience, and dedication. Remember to celebrate the small victories along your journey. Every new concept you grasp and every problem you solve is a significant step forward toward your ultimate goals. Keep up the great work!

@m-smith-5972

“Embracing years of wisdom as my guide, I launch my A.I. journey. Blending timeless elegance and proving it’s never too late to start a new chapter.”

Active 53m ago

Joined Mar 19, 2026