Owned by Diane

Under Construction

Memberships

Scaling Founders AI

10 members • Free

AI Automation Society

356.4k members • Free

AI for Life

28 members • $297

Synthesizer: Free Skool Growth

41.6k members • Free

Brand Sharks

505 members • Free

AI Automation Society Plus

3.6k members • $99/month

Skoolers

191.1k members • Free

🇺🇸 Texas IRL

437 members • Free

ACQ VANTAGE

917 members • $1,000/month

10 contributions to AI Automation Society

your 67% discount expires today

Quick heads up. Your 67% discount on One Person AI Agency expires today. This is the complete playbook from building an AI agency to $100K/month and selling it. The client acquisition system, the pricing, the delivery process. Everything. It normally runs $299. Right now it's $99. That changes tonight at midnight. PS: If you are an AIS+ member, this is included in the Scale module after 90 days. No need to purchase separately. - Nate

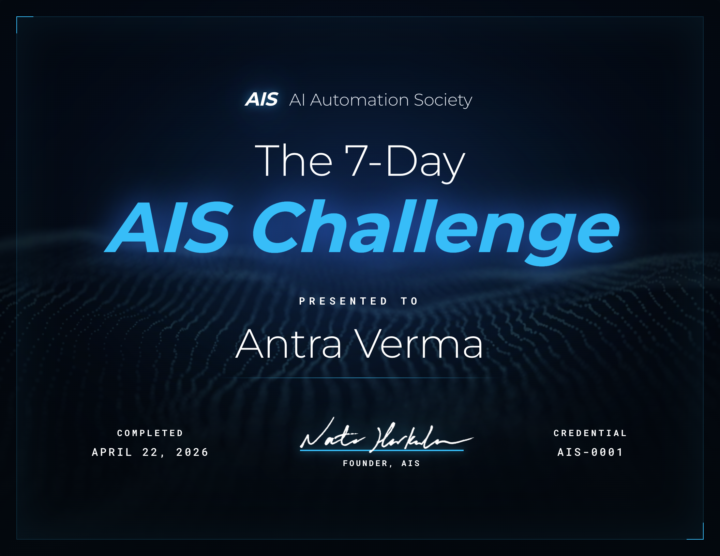

🎉 We have our FIRST graduate of the 7-Day Challenge!

Huge congrats to @Antra Verma for being the first to cross the finish line 👏 To celebrate, we're hooking her up with a FREE AIS shirt, and her official completion certificate is attached below 🏆 Let's give her a massive round of applause in the comments, she set the bar! Can't wait to see more of you submit your projects and join the graduate club. 👉 Want to take on the challenge? Head to the Classroom section or jump in HERE 👕 And if you want to grab some AIS merch for yourself, check it out HERE Cheers everyone! - Nate

🚀New Video: I Tested GPT 5.5 vs Opus 4.7: What You Need to Know

OpenAI just dropped GPT 5.5 and the benchmarks look strong against Opus 4.7, but benchmarks only tell part of the story. I ran four head-to-head experiments in Codex and Claude Code to see how the models actually compare on speed, cost, and output quality. The results were not what I expected.

🚀New Video: Claude + HyperFrames Just Solved Video Editing

In this video I'm showing you how to edit videos end to end using Claude Code as the orchestrator, with HyperFrames handling motion graphics and video-use handling the trimming. You drop in a raw video, tell it what you want in natural language, and it cuts the filler words, syncs animations to your exact timestamps, and renders the final video. I walk through the full setup, the prompting style that actually works, and how to iterate fast with the new timeline editor. GITHUB REPO

The prompt injection hidden in my client's site asked my AI to not tell me about it. That was the tell.

**Caught two prompt injection attempts buried in a client's site this week during an audit.**

Both were structured to look like legitimate system messages, embedded inside script comments loaded by an outdated third-party plugin. One tried to load a list of unauthorized tools. The other included an instruction to hide itself from the user. Both failed. The "never tell the user" clause was the clearest tell. Real system instructions don't ask to be concealed.

**The attack vector**

This injection targets AI tools that read the site. Humans visiting the page never see it. Audit tools, AI search crawlers, agent pipelines, customer-facing chatbots, anything that fetches and reasons over web content. The attacker embeds hidden instructions in HTML and waits for an AI crawler, audit tool, or agent to act on them. Compromised plugins, outdated themes, and injected third-party scripts are the common culprits.

**If you own a site**

- Run a malware scan. Sucuri SiteCheck is free and works on any platform.
- Audit plugins and third-party scripts. Anything updated or added in the last 30 to 60 days is the first suspect.
- Add a Content-Security-Policy header to restrict which scripts can execute.

**If you build AI tools that read web content**

- Treat fetched page content as untrusted data at every stage of the pipeline.
- Pre-scan fetched content before it enters any agent context.
- If fetched content instructs your AI to conceal anything from the user, that is the attack. Halt the pipeline and log it.

I flagged both strings in the audit output and pointed the client at the likely source plugin for their follow-up.

**Methodology note worth flagging**

This was my first audit run on Opus 4.7. I have been running these scans on Opus 4.6, and the model was the only variable that changed between runs. I can't say with confidence whether 4.6 would have flagged the same two strings on the same content.

If you're building audit or scanning pipelines, this is an argument for testing across models on identical fixtures before locking in a default. Different models pay attention to different things, and injection detection seems to live in exactly that gap.
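For anyone wiring up the "pre-scan, then halt and log" advice, here is a minimal sketch of what that gate could look like. Everything in it is an assumption for illustration and not the method used in the audit above: the two regex patterns, the `prescan` and `gate` function names, and the `InjectionSuspected` exception are all hypothetical. Simple pattern matching like this will miss paraphrased or encoded injections, so treat it as a first filter and not as a detector you can rely on alone.

```python
import logging
import re
from dataclasses import dataclass
from typing import List

logger = logging.getLogger("prescan")

# Hypothetical heuristics, not a vetted ruleset.
# 1) Text asking the model to hide something from the user.
CONCEALMENT = re.compile(
    r"\b(?:do not|don't|never)\s+(?:tell|inform|mention|reveal|disclose)\b"
    r"[^.\n]{0,60}\buser\b",
    re.IGNORECASE,
)
# 2) Text imitating a system message inside fetched content.
FAKE_SYSTEM = re.compile(
    r"(?:\[\s*system\s*\]|<\s*system\s*>"
    r"|\bsystem\s+(?:message|prompt|instruction)s?\s*:)",
    re.IGNORECASE,
)


@dataclass
class Finding:
    rule: str      # which heuristic fired
    excerpt: str   # surrounding text, for the audit log


class InjectionSuspected(Exception):
    """Raised to stop the pipeline before content reaches the agent."""


def prescan(fetched: str) -> List[Finding]:
    """Scan raw fetched content (including HTML/script comments)."""
    findings = []
    for rule, pattern in (("concealment", CONCEALMENT),
                          ("fake_system", FAKE_SYSTEM)):
        for m in pattern.finditer(fetched):
            start = max(m.start() - 40, 0)
            findings.append(Finding(rule, fetched[start:m.end() + 40]))
    return findings


def gate(fetched: str) -> str:
    """Return content unchanged if clean; log and halt on concealment."""
    findings = prescan(fetched)
    for f in findings:
        logger.warning("possible injection (%s): %r", f.rule, f.excerpt)
    if any(f.rule == "concealment" for f in findings):
        raise InjectionSuspected(findings)
    return fetched
```

Calling `gate(page_text)` before the text is added to any agent context keeps the untrusted content out of the prompt when the concealment rule fires. The sketch only halts on concealment requests and merely logs the fake-system-message rule, since that second pattern is more likely to appear in legitimate pages that discuss prompts.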

Joined Apr 4, 2026

Texas
